I. Basic Environment Setup

1. Host plan

| Hostname | IP | Role |
| ---------- | ---------- | ----------- |
| k8s-master0 | 10.0.54.21 | master (control plane) |
| k8s-node01 | 10.0.54.25 | worker node |
| k8s-node02 | 10.0.54.26 | worker node |

2. Configure a static IP

Set the master's static IP according to the plan:

vim /etc/sysconfig/network-scripts/ifcfg-ens33

TYPE="Ethernet"

PROXY_METHOD="none"

BROWSER_ONLY="no"

BOOTPROTO="static"

DEFROUTE="yes"

IPV4_FAILURE_FATAL="no"

IPV6INIT="yes"

IPV6_AUTOCONF="yes"

IPV6_DEFROUTE="yes"

IPV6_FAILURE_FATAL="no"

IPV6_ADDR_GEN_MODE="stable-privacy"

NAME="ens33"

DEVICE="ens33"

ONBOOT="yes"

IPADDR=10.0.54.21

NETMASK=255.255.255.0

GATEWAY=10.0.54.2

DNS1="8.8.8.8"

systemctl restart network

ping www.baidu.com

3. Set the hostname

Set the hostname according to the plan:

hostnamectl set-hostname k8s-master0 && bash

4. Configure hosts resolution

Add the planned hostnames and IPs:

[root@k8s-master0 ~]# cat >> /etc/hosts << EOF

10.0.54.21 k8s-master0

10.0.54.25 k8s-node01

10.0.54.26 k8s-node02

EOF
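Running the `cat >> /etc/hosts` command a second time adds duplicate lines. The following is a minimal idempotent sketch that assumes bash. `add_host_entry` and `add_planned_hosts` are hypothetical helpers. The file path is a parameter, so you can try it on a scratch copy first:

```shell
#!/usr/bin/env bash
# Hypothetical helper: append "IP NAME" to a hosts file only if NAME is not
# already mapped on an active (non-comment) line.
add_host_entry() {
  local file=$1 ip=$2 name=$3
  if [ -f "$file" ] && awk -v n="$name" '
      !/^[[:space:]]*#/ { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
      END { exit !found }' "$file"; then
    return 0   # already present, nothing to do
  fi
  printf '%s %s\n' "$ip" "$name" >> "$file"
}

# The host plan from step 1: one "IP hostname" pair per line
HOST_PLAN='10.0.54.21 k8s-master0
10.0.54.25 k8s-node01
10.0.54.26 k8s-node02'

# Hypothetical helper: add every planned host; FILE defaults to /etc/hosts
add_planned_hosts() {
  local file=${1:-/etc/hosts} ip name
  while read -r ip name; do
    [ -n "$name" ] && add_host_entry "$file" "$ip" "$name"
  done <<< "$HOST_PLAN"
}
```

Usage: run `add_planned_hosts` as root. Running it again leaves /etc/hosts unchanged.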

5. Disable the firewall

[root@k8s-master0 ~]# systemctl stop firewalld && systemctl disable firewalld && systemctl status firewalld

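6. Disable SELinux

# Disable temporarily (until the next reboot)

[root@k8s-master0 ~]# setenforce 0

# Make it persistent (switch to permissive mode in the config file)

[root@k8s-master0 ~]# sed -i 's/^SELINUX=enforcing$/SELINUX=permissive/' /etc/selinux/config

# Check the SELinux status

[root@k8s-master0 ~]# getenforce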

7. Disable swap

# Disable temporarily

swapoff -a

# Disable permanently: comment out the swap mount in /etc/fstab

sed -i 's/.*swap.*/#&/' /etc/fstab

# Verify (the Swap line should show 0)

free -m
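The sed above comments out every line that merely contains the word "swap", and a second run adds another `#`. Below is a more targeted sketch that assumes bash and awk. It only touches active entries whose filesystem type (the third fstab field) is `swap`. `comment_swap_entries` is a hypothetical helper:

```shell
#!/usr/bin/env bash
# Hypothetical helper: prefix "#" to active fstab entries whose 3rd field
# (filesystem type) is "swap". Comment lines and other mounts are unchanged,
# so running it twice is harmless.
comment_swap_entries() {
  local file=$1 tmp
  tmp=$(mktemp) || return 1
  awk '!/^[[:space:]]*#/ && $3 == "swap" { print "#" $0; next } { print }' "$file" > "$tmp" &&
    cat "$tmp" > "$file"   # write back in place, keeping the file's owner and mode
  rm -f "$tmp"
}
```

Usage: `comment_swap_entries /etc/fstab`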

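8. Time synchronization

# Set the timezone

sudo timedatectl set-timezone Asia/Shanghai

# Install ntpdate and sync the clock

yum install ntpdate -y

ntpdate time.windows.com

Note: other NTP servers also work, e.g. ntpdate cn.pool.ntp.org

# Write the system time to the hardware clock

hwclock -w

# Verify the time

date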

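9. Load kernel modules

The br_netfilter module passes bridged traffic to the iptables chains. Load it now (the second command re-runs modprobe from /etc/profile at each login):

modprobe br_netfilter

echo "modprobe br_netfilter" >> /etc/profile

# Verify that the module is loaded:

lsmod |grep br_netfilter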
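The /etc/profile line only runs modprobe when someone opens a login shell. After a reboot, the module may therefore be missing until then. On systemd-based systems such as CentOS 7, systemd-modules-load.service loads the modules listed in /etc/modules-load.d/*.conf at boot. The sketch below assumes bash. `add_modules_load` is a hypothetical helper, and the file name `k8s.conf` is an arbitrary choice:

```shell
#!/usr/bin/env bash
# Hypothetical helper: add_modules_load FILE MODULE...
# Ensures each module name appears on its own line in FILE, the format that
# systemd-modules-load reads at boot (see modules-load.d(5)).
add_modules_load() {
  local file=$1 m
  shift
  touch "$file" || return 1
  for m in "$@"; do
    # Append only if the exact module name is not listed yet
    grep -qx -- "$m" "$file" || printf '%s\n' "$m" >> "$file"
  done
}
```

Usage: `add_modules_load /etc/modules-load.d/k8s.conf br_netfilter`. The module is then loaded on every boot. To apply it without rebooting, run `systemctl restart systemd-modules-load`.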

10. Set kernel parameters

# Enable bridge netfilter so bridged traffic is passed to the iptables chains, and enable IP forwarding

cat > /etc/sysctl.d/k8s.conf << EOF

net.bridge.bridge-nf-call-ip6tables=1

net.bridge.bridge-nf-call-iptables=1

net.ipv4.ip_forward=1

vm.swappiness=0

EOF

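Notes:

net.bridge.bridge-nf-call-ip6tables and net.bridge.bridge-nf-call-iptables: netfilter can filter at both L2 and L3.
- A value of 0 tells iptables to leave bridged traffic alone.
- A value of 1 sends packets forwarded by a Layer 2 bridge through the iptables FORWARD rules as well. In other words, L3 iptables rules are applied to L2 frames.

Kubernetes relies on the value 1 so that iptables rules (such as those written by kube-proxy) also see Pod traffic crossing the bridge.

vm.max_map_count (not set in the file above): limits the number of VMAs (virtual memory areas) a single process may own. If it is too low, applications such as Elasticsearch fail with: "max virtual memory areas vm.max_map_count [65530] is too low, increase to at least [262144]"

net.ipv4.ip_forward: for security reasons, Linux disables packet forwarding by default. Forwarding applies when a host has more than one network interface. A packet received on one interface is sent out through another according to its destination IP, and that interface keeps forwarding it according to the routing table. This is normally a router's job. To give Linux this routing capability, set the kernel parameter net.ipv4.ip_forward = 1.

vm.swappiness: 0 means physical memory is used as much as possible before swap space. 100 means swap is used aggressively, with data moved from memory to swap promptly.

# Apply the settings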

sysctl -p /etc/sysctl.d/k8s.conf
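`sysctl -p` reports errors for keys it cannot set. For example, the bridge-nf keys do not exist until br_netfilter is loaded. The sketch below re-reads each key from /proc/sys and compares it with the file. `verify_sysctl` is a hypothetical helper. The optional second argument, a stand-in for /proc, exists only so the function can be tested:

```shell
#!/usr/bin/env bash
# Hypothetical helper: verify_sysctl CONF [PROC_ROOT]
# For each "key = value" line in CONF, compare the value with the live one in
# PROC_ROOT/sys/<key with dots turned into slashes>. Whitespace is stripped,
# so this only suits single-value keys like the ones in k8s.conf.
verify_sysctl() {
  local conf=$1 root=${2:-/proc} line key want have rc=0
  while IFS= read -r line || [ -n "$line" ]; do
    line=${line%%#*}                 # drop comments
    line=${line//[[:space:]]/}       # drop whitespace
    [ -z "$line" ] && continue
    key=${line%%=*}
    want=${line#*=}
    if ! have=$(cat "$root/sys/${key//.//}" 2>/dev/null); then
      echo "MISSING $key"; rc=1; continue
    fi
    if [ "$have" = "$want" ]; then
      echo "OK $key=$have"
    else
      echo "MISMATCH $key: want $want, have $have"; rc=1
    fi
  done < "$conf"
  return "$rc"
}
```

Usage: `verify_sysctl /etc/sysctl.d/k8s.conf`. A MISSING line for the net.bridge keys usually means br_netfilter is not loaded.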

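11. IPVS forwarding

Without IPVS, kube-proxy forwards Service traffic with iptables rules, which become less efficient as the number of Services grows. Enabling IPVS is therefore commonly recommended.

1. Install the dependency packages and load the IPVS kernel modules: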

yum install -y ipvsadm ipset

cat > /etc/sysconfig/modules/ipvs.modules << EOF

#!/bin/bash

modprobe -- ip_vs

modprobe -- ip_vs_rr

modprobe -- ip_vs_wrr

modprobe -- ip_vs_sh

modprobe -- nf_conntrack

EOF

# On all nodes, make the script executable and run it

chmod 755 /etc/sysconfig/modules/ipvs.modules

bash /etc/sysconfig/modules/ipvs.modules

# Check that the modules are loaded

lsmod | grep ip_vs
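`lsmod | grep ip_vs` shows what matches, but it does not say which required module is missing. The sketch below checks each module by exact name. `missing_modules` is a hypothetical helper. Modules compiled into the kernel (rather than loaded as modules) do not appear in lsmod, so treat a miss as a prompt to check, not as proof:

```shell
#!/usr/bin/env bash
# Hypothetical helper: missing_modules MODULE...
# Prints each module that is absent from the first column of `lsmod`
# (header line skipped); returns 1 if any module is missing.
missing_modules() {
  local loaded m rc=0
  loaded=$(lsmod | awk 'NR > 1 { print $1 }')
  for m in "$@"; do
    if ! grep -qx -- "$m" <<< "$loaded"; then
      echo "$m"
      rc=1
    fi
  done
  return "$rc"
}
```

Usage: `missing_modules ip_vs ip_vs_rr ip_vs_wrr ip_vs_sh nf_conntrack br_netfilter && echo "all loaded"`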

II. Full-Clone the VM into Two Node Machines


1. Configure a static IP

Set the static IPs of node01 and node02 according to the plan:

vim /etc/sysconfig/network-scripts/ifcfg-ens33

TYPE="Ethernet"

PROXY_METHOD="none"

BROWSER_ONLY="no"

BOOTPROTO="static"

DEFROUTE="yes"

IPV4_FAILURE_FATAL="no"

IPV6INIT="yes"

IPV6_AUTOCONF="yes"

IPV6_DEFROUTE="yes"

IPV6_FAILURE_FATAL="no"

IPV6_ADDR_GEN_MODE="stable-privacy"

NAME="ens33"

DEVICE="ens33"

ONBOOT="yes"

# k8s-node01 uses 10.0.54.25; on k8s-node02 use 10.0.54.26

IPADDR=10.0.54.25

NETMASK=255.255.255.0

GATEWAY=10.0.54.2

DNS1="8.8.8.8"

systemctl restart network
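Because the nodes are full clones of the master, each one still carries IPADDR=10.0.54.21 until it is edited. The sketch below assumes bash and GNU sed. It validates the new address and rewrites only the IPADDR= line. `set_ipaddr` is a hypothetical helper:

```shell
#!/usr/bin/env bash
# Hypothetical helper: set_ipaddr IFCFG_FILE NEW_IP
# Replaces the IPADDR= line in place after checking NEW_IP is a valid IPv4 address.
set_ipaddr() {
  local file=$1 ip=$2 o
  local re='^([0-9]{1,3})\.([0-9]{1,3})\.([0-9]{1,3})\.([0-9]{1,3})$'
  if ! [[ $ip =~ $re ]]; then
    echo "invalid IPv4 address: $ip" >&2; return 1
  fi
  for o in "${BASH_REMATCH[@]:1}"; do
    # 10# forces base 10 so octets like "025" are not read as octal
    (( 10#$o <= 255 )) || { echo "invalid IPv4 address: $ip" >&2; return 1; }
  done
  grep -q '^IPADDR=' "$file" || { echo "no IPADDR line in $file" >&2; return 1; }
  sed -i "s/^IPADDR=.*/IPADDR=$ip/" "$file"
}
```

Usage: on k8s-node01 run `set_ipaddr /etc/sysconfig/network-scripts/ifcfg-ens33 10.0.54.25`, and use 10.0.54.26 on k8s-node02. Then run `systemctl restart network`.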

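2. Set the hostname

Set the hostnames of node01 and node02 according to the plan, running each command on its own node:

# on k8s-node01

hostnamectl set-hostname k8s-node01 && bash

# on k8s-node02

hostnamectl set-hostname k8s-node02 && bash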

3. Configure passwordless SSH login between hosts

On the master node:

ssh-keygen

for i in k8s-node01 k8s-node02; do ssh-copy-id $i; done
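The sketch below copies the key to each node and then confirms that a non-interactive login actually works. It assumes bash and root logins on the nodes. `distribute_keys` is a hypothetical helper. With `-o BatchMode=yes`, ssh fails instead of prompting for a password:

```shell
#!/usr/bin/env bash
# Hypothetical helper: distribute_keys HOST...
# Copies the local public key to root@HOST, then tests a password-free login.
distribute_keys() {
  local host rc=0
  for host in "$@"; do
    if ! ssh-copy-id "root@$host"; then
      echo "FAIL $host (ssh-copy-id)"; rc=1; continue
    fi
    # BatchMode=yes: never prompt, so a missing key shows up as a failure
    if ssh -o BatchMode=yes -o ConnectTimeout=5 "root@$host" true; then
      echo "OK $host"
    else
      echo "FAIL $host"; rc=1
    fi
  done
  return "$rc"
}
```

Usage: `distribute_keys k8s-node01 k8s-node02`. This prompts once per node for the root password, then prints OK or FAIL for each.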

III. Install the Base Environment on All Three Machines

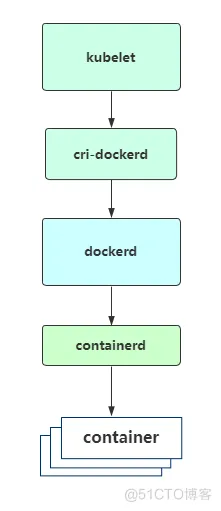

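Kubernetes v1.24 removed dockershim, and containerd became the usual container runtime. Docker Engine does not implement the CRI (Container Runtime Interface) natively, so the two can no longer be integrated directly. To fill this gap, Mirantis and Docker jointly created the cri-dockerd project. It gives Docker Engine a CRI-compliant adapter, so Docker can still serve as the Kubernetes container runtime.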

1. Install Docker

# Configure the yum repository (Aliyun CentOS 7 mirror)

cd /etc/yum.repos.d && mkdir -p backup && mv CentOS-Base.repo backup/

wget -O /etc/yum.repos.d/CentOS-Base.repo http://mirrors.aliyun.com/repo/Centos-7.repo

# Add the Docker CE yum repository

wget https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo -O /etc/yum.repos.d/docker-ce.repo

# Install Docker

yum -y install docker-ce

# Enable and start Docker

systemctl enable docker && systemctl start docker

Configure a registry mirror (download accelerator) and the systemd cgroup driver:

cat > /etc/docker/daemon.json << EOF

{

"registry-mirrors": ["https://b9pmyelo.mirror.aliyuncs.com"],

"exec-opts": ["native.cgroupdriver=systemd"]

}

EOF

# Restart Docker and check that the cgroup driver is systemd

systemctl daemon-reload && systemctl restart docker

docker info |grep "Cgroup Driver"
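`docker info | grep` prints the line for a person to read. In a script, `docker info --format` can extract just the field. kubeadm configures kubelet with the systemd cgroup driver by default (since v1.22), so the runtime should report systemd too. `check_cgroup_driver` is a hypothetical helper:

```shell
#!/usr/bin/env bash
# Hypothetical helper: check_cgroup_driver
# Succeeds only if Docker reports the systemd cgroup driver.
check_cgroup_driver() {
  local driver
  driver=$(docker info --format '{{.CgroupDriver}}' 2>/dev/null) ||
    { echo "docker info failed" >&2; return 2; }
  if [ "$driver" = "systemd" ]; then
    echo "OK: Cgroup Driver is systemd"
  else
    echo "Cgroup Driver is '$driver', expected systemd; check exec-opts in /etc/docker/daemon.json" >&2
    return 1
  fi
}
```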

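2. Install cri-dockerd

Download the cri-dockerd package:

wget https://github.com/Mirantis/cri-dockerd/releases/download/v0.3.1/cri-dockerd-0.3.1-3.el7.x86_64.rpm

Install cri-dockerd:

rpm -ivh cri-dockerd-0.3.1-3.el7.x86_64.rpm

# If that fails, install directly from the URL

rpm -ivh https://github.com/Mirantis/cri-dockerd/releases/download/v0.3.1/cri-dockerd-0.3.1-3.el7.x86_64.rpm

Add the crictl runtime configuration. It points at containerd's socket, because this guide runs Kubernetes on containerd (installed as a dependency of docker-ce). cri-dockerd is only used if you initialize with --cri-socket=unix:///var/run/cri-dockerd.sock: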

vim /etc/crictl.yaml

runtime-endpoint: unix:///run/containerd/containerd.sock

image-endpoint: unix:///run/containerd/containerd.sock

timeout: 10

debug: false

Switch the sandbox (pause) image to a mirror reachable from mainland China:

vim /etc/containerd/config.toml

[plugins."io.containerd.grpc.v1.cri"]

sandbox_image = "registry.k8s.io/pause:3.6"

Change it to:

sandbox_image = "registry.cn-hangzhou.aliyuncs.com/google_containers/pause:3.6"

In the same file, point the registry configuration at a hosts directory:

[plugins."io.containerd.grpc.v1.cri".registry]

config_path = "/etc/containerd/registry"
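Editing config.toml as described assumes the file already contains the full default configuration. The containerd.io package installed with docker-ce ships a minimal file that disables the CRI plugin (`disabled_plugins = ["cri"]`). A common approach is therefore to regenerate the file with `containerd config default` and then patch it. The sketch below assumes GNU sed and a containerd 1.6-style default layout. It also sets `SystemdCgroup = true`, so containerd uses the same systemd cgroup driver that kubeadm configures for kubelet. `patch_containerd_config` is a hypothetical helper:

```shell
#!/usr/bin/env bash
# Hypothetical helper: patch_containerd_config FILE PAUSE_IMAGE REGISTRY_DIR
# FILE should be the full default config, e.g. generated first with:
#   containerd config default > /etc/containerd/config.toml
# Sets sandbox_image, runc's SystemdCgroup, and config_path in the
# [plugins."io.containerd.grpc.v1.cri".registry] section (indentation kept).
patch_containerd_config() {
  local file=$1 pause=$2 regdir=$3
  sed -i \
    -e "s|^\([[:space:]]*\)sandbox_image = .*|\1sandbox_image = \"$pause\"|" \
    -e "s|^\([[:space:]]*\)SystemdCgroup = .*|\1SystemdCgroup = true|" \
    -e "/\[plugins\.\"io\.containerd\.grpc\.v1\.cri\"\.registry\]/,/^[[:space:]]*\[/ s|^\([[:space:]]*\)config_path = .*|\1config_path = \"$regdir\"|" \
    "$file"
}
```

Usage: `patch_containerd_config /etc/containerd/config.toml registry.cn-hangzhou.aliyuncs.com/google_containers/pause:3.6 /etc/containerd/registry`, followed by `systemctl restart containerd`.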

# Create the directory

mkdir /etc/containerd/registry/docker.io -p

# Create hosts.toml (replace https://e0u0eb6u.mirror.aliyuncs.com with your own accelerator address)

vim /etc/containerd/registry/docker.io/hosts.toml

server = "https://docker.io"

[host."https://e0u0eb6u.mirror.aliyuncs.com"]

capabilities = ["pull", "resolve"]

Start cri-dockerd and restart containerd

systemctl daemon-reload

systemctl enable cri-docker && systemctl start cri-docker

systemctl restart containerd

3. Add the Kubernetes yum repository

cat > /etc/yum.repos.d/kubernetes.repo << EOF

[kubernetes]

name=Kubernetes

baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64

enabled=1

gpgcheck=0

repo_gpgcheck=0

gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

EOF

4. Install kubeadm, kubelet and kubectl

yum install -y kubelet-1.26.1 kubeadm-1.26.1 kubectl-1.26.1

systemctl enable kubelet

kubeadm version

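IV. Initialize Kubernetes on the Master Node

Prepare the kubeadm configuration file. The wget below downloads it from a local server; you can also create /etc/k8s/kubeadm-config.yaml by hand with the content shown below: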

[root@k8s-master0 ~]# mkdir /etc/k8s

[root@k8s-master0 ~]# cd /etc/k8s

[root@k8s-master0 k8s]# wget http://192.168.142.11/k8s/k8s1.26/kubeadm-config.yaml

Adjust the IP addresses in kubeadm-config.yaml to match your plan:

apiVersion: kubeadm.k8s.io/v1beta3
bootstrapTokens:
- groups:
  - system:bootstrappers:kubeadm:default-node-token
  token: 7t2weq.bjbawausm0jaxury
  ttl: 24h0m0s
  usages:
  - signing
  - authentication
kind: InitConfiguration
localAPIEndpoint:
  advertiseAddress: 10.0.54.21
  bindPort: 6443
nodeRegistration:
  criSocket: unix:///var/run/containerd/containerd.sock
  name: k8s-master0
  taints:
  - effect: NoSchedule
    key: node-role.kubernetes.io/control-plane
---
apiServer:
  certSANs:
  - 10.0.54.21
  timeoutForControlPlane: 4m0s
apiVersion: kubeadm.k8s.io/v1beta3
certificatesDir: /etc/kubernetes/pki
clusterName: kubernetes
controlPlaneEndpoint: 10.0.54.21:6443
controllerManager: {}
dns: {}
etcd:
  local:
    dataDir: /var/lib/etcd
imageRepository: registry.cn-hangzhou.aliyuncs.com/google_containers
kind: ClusterConfiguration
kubernetesVersion: v1.26.1
networking:
  dnsDomain: cluster.local
  podSubnet: 172.16.10.0/24
  serviceSubnet: 172.16.32.0/24
scheduler: {}
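Loading the IPVS modules in step 11 does not change how kube-proxy works by itself, because its default mode on Linux is iptables. With kubeadm, you can set the mode by appending a KubeProxyConfiguration document to this config file before running kubeadm init. The sketch below assumes bash. `append_kube_proxy_ipvs` is a hypothetical helper:

```shell
#!/usr/bin/env bash
# Hypothetical helper: append_kube_proxy_ipvs FILE
# Appends a KubeProxyConfiguration (mode: ipvs) document to a kubeadm config
# file unless one is already there.
append_kube_proxy_ipvs() {
  local file=$1
  if grep -q '^kind: KubeProxyConfiguration' "$file"; then
    echo "KubeProxyConfiguration already present in $file"
    return 0
  fi
  # Make sure the new YAML document starts on its own line
  if [ -s "$file" ] && [ -n "$(tail -c1 "$file")" ]; then
    echo >> "$file"
  fi
  cat >> "$file" <<'EOF'
---
apiVersion: kubeproxy.config.k8s.io/v1alpha1
kind: KubeProxyConfiguration
mode: ipvs
EOF
}
```

Usage: run `append_kube_proxy_ipvs /etc/k8s/kubeadm-config.yaml` before `kubeadm init --config`. Afterwards, `ipvsadm -Ln` should list the Service virtual servers. On a cluster that is already running, the mode is instead set in the kube-proxy ConfigMap in the kube-system namespace.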

Pull the images

[root@k8s-master0 ~]# kubeadm config images pull --config /etc/k8s/kubeadm-config.yaml

Initialize the cluster

[root@k8s-master0 ~]# kubeadm init --config /etc/k8s/kubeadm-config.yaml --upload-certs

Or initialize with command-line flags instead of a config file:

kubeadm init \

--apiserver-advertise-address=10.0.54.21 \

--image-repository registry.aliyuncs.com/google_containers \

--kubernetes-version v1.26.1 \

--service-cidr=10.96.0.0/12 \

--pod-network-cidr=10.244.0.0/16 \

--cri-socket=unix:///var/run/cri-dockerd.sock \

--ignore-preflight-errors=all

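--apiserver-advertise-address: the address the API server advertises to the cluster

--image-repository: the default image registry, registry.k8s.io (formerly k8s.gcr.io), is not reachable from mainland China, so the Aliyun mirror is specified here

--kubernetes-version: the Kubernetes version; must match the packages installed above

--service-cidr: the cluster-internal virtual network for Services, which give Pods a stable access entry point

--pod-network-cidr: the Pod network; must match the setting in the CNI plugin manifest deployed later

--cri-socket: the CRI endpoint. This example uses cri-dockerd; for containerd, use unix:///run/containerd/containerd.sock

Configure kubectl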

[root@k8s-master0 ~]# mkdir -p $HOME/.kube

[root@k8s-master0 ~]# sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

[root@k8s-master0 ~]# sudo chown $(id -u):$(id -g) $HOME/.kube/config

Check the node status:

[root@k8s-master0 ~]# kubectl get node

NAME STATUS ROLES AGE VERSION

k8s-master0 NotReady control-plane 78s v1.26.1

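PS: If initializing the master fails, reset the cluster with kubeadm reset and initialize the master again. Other nodes must also run kubeadm reset before rejoining. The master stays NotReady until a network plugin is deployed (Section VI).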

V. Join Nodes to the Cluster

Add another master (control-plane) node:

kubeadm join 10.0.54.21:6443 --token 7t2weq.bjbawausm0jaxury \

--discovery-token-ca-cert-hash sha256:3ea42c31b5f4021cb89b8d84cb5f137368ae9eb3a241bfd0ce489b1f8130dcb6 \

--control-plane --certificate-key da51e22edf9fa9edb9ddd95fe6779828ef1a31b42fea6e2b3228fa849d53bb41 \

--cri-socket=unix:///run/containerd/containerd.sock

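If the certificate key has expired (uploaded certificates are deleted after two hours), upload the certificates again to get a new key: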

[root@k8s-master0 ~]# kubeadm init phase upload-certs --upload-certs

I0512 12:56:05.293911 10703 version.go:256] remote version is much newer: v1.27.1; falling back to: stable-1.26

[upload-certs] Storing the certificates in Secret "kubeadm-certs" in the "kube-system" Namespace

[upload-certs] Using certificate key:

e93bda03569f1c5fa6b18d217ea7afb887390374bead247b01be3071419120f7

Then, in the join command, replace da51e22edf9fa9edb9ddd95fe6779828ef1a31b42fea6e2b3228fa849d53bb41 with e93bda03569f1c5fa6b18d217ea7afb887390374bead247b01be3071419120f7

Add a worker node:

kubeadm join 10.0.54.21:6443 --token je04b4.qnduxtdprh74sc7g --discovery-token-ca-cert-hash sha256:3ea42c31b5f4021cb89b8d84cb5f137368ae9eb3a241bfd0ce489b1f8130dcb6 --cri-socket=unix:///run/containerd/containerd.sock

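If the token has expired (the bootstrap token in the config above has a 24h TTL), create a new one and print the join command. The printed command has no --cri-socket flag, so append --cri-socket=unix:///run/containerd/containerd.sock as in the worker command above: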

[root@k8s-master0 ~]# kubeadm token create --print-join-command

kubeadm join 10.0.54.21:6443 --token je04b4.qnduxtdprh74sc7g --discovery-token-ca-cert-hash sha256:3ea42c31b5f4021cb89b8d84cb5f137368ae9eb3a241bfd0ce489b1f8130dcb6
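Each host runs both containerd and cri-dockerd, so kubeadm join finds two CRI endpoints and asks for one to be chosen explicitly. That is why the worker command above carries --cri-socket. The sketch below assumes bash on the master and prints a fresh join command with the socket appended. `worker_join_command` is a hypothetical helper that defaults to containerd's socket:

```shell
#!/usr/bin/env bash
# Hypothetical helper: worker_join_command [CRI_SOCKET]
# Creates a new bootstrap token and prints the join command with --cri-socket added.
worker_join_command() {
  local socket=${1:-unix:///run/containerd/containerd.sock} cmd
  cmd=$(kubeadm token create --print-join-command) || return 1
  cmd="${cmd%"${cmd##*[![:space:]]}"}"   # trim trailing whitespace
  printf '%s --cri-socket=%s\n' "$cmd" "$socket"
}
```

Usage: with the passwordless SSH from Section II in place, you can run the join remotely from the master, e.g. `ssh root@k8s-node02 "$(worker_join_command)"`.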

VI. Configure the Calico Network Plugin on the Master

Add a hosts entry so that raw.githubusercontent.com resolves:

[root@k8s-master0 ~]# vim /etc/hosts

185.199.108.133 raw.githubusercontent.com

[root@k8s-master0 ~]# curl https://raw.githubusercontent.com/projectcalico/calico/v3.25.1/manifests/calico.yaml -O

[root@k8s-master0 ~]# kubectl apply -f calico.yaml

Alternatively, download the manifest from a local server and apply it:

[root@k8s-master0 ~]# wget http://192.168.142.11/k8s/k8s1.26/calico.yaml

[root@k8s-master0 ~]# kubectl apply -f calico.yaml

poddisruptionbudget.policy/calico-kube-controllers created

serviceaccount/calico-kube-controllers created

serviceaccount/calico-node created

configmap/calico-config created

customresourcedefinition.apiextensions.k8s.io/bgpconfigurations.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/bgppeers.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/blockaffinities.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/caliconodestatuses.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/clusterinformations.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/felixconfigurations.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/globalnetworkpolicies.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/globalnetworksets.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/hostendpoints.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/ipamblocks.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/ipamconfigs.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/ipamhandles.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/ippools.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/ipreservations.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/kubecontrollersconfigurations.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/networkpolicies.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/networksets.crd.projectcalico.org created

clusterrole.rbac.authorization.k8s.io/calico-kube-controllers created

clusterrole.rbac.authorization.k8s.io/calico-node created

clusterrolebinding.rbac.authorization.k8s.io/calico-kube-controllers created

clusterrolebinding.rbac.authorization.k8s.io/calico-node created

daemonset.apps/calico-node created

deployment.apps/calico-kube-controllers created

PS: If the deployment fails, remove Calico with kubectl delete -f calico.yaml

Verify that all nodes are Ready:

[root@k8s-master0 ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-master0 Ready control-plane 15h v1.26.1

k8s-node01 Ready <none> 111m v1.26.1

k8s-node02 Ready <none> 15m v1.26.1
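Nodes only become Ready after the calico-node Pods on them are running. The polling sketch below assumes bash and a working kubectl. `not_ready_nodes` and `wait_all_ready` are hypothetical helpers. `kubectl wait --for=condition=Ready nodes --all --timeout=300s` is a built-in alternative:

```shell
#!/usr/bin/env bash
# Hypothetical helper: print "NAME STATUS" for every node whose STATUS is not
# exactly "Ready" (e.g. NotReady, or Ready,SchedulingDisabled when cordoned).
not_ready_nodes() {
  local out
  out=$(kubectl get nodes --no-headers) || return 1
  [ -n "$out" ] || return 1   # no nodes listed: treat as an error
  awk '$2 != "Ready" { print $1, $2 }' <<< "$out"
}

# Hypothetical helper: wait_all_ready [TIMEOUT_SECONDS]
# Polls every 5 seconds; returns 0 when all nodes are Ready,
# 1 on timeout, 2 if kubectl fails.
wait_all_ready() {
  local timeout=${1:-300} waited=0 pending
  while true; do
    pending=$(not_ready_nodes) || return 2
    if [ -z "$pending" ]; then
      echo "all nodes Ready"
      return 0
    fi
    if (( waited >= timeout )); then
      echo "still not Ready:"
      echo "$pending"
      return 1
    fi
    sleep 5
    waited=$(( waited + 5 ))
  done
}
```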

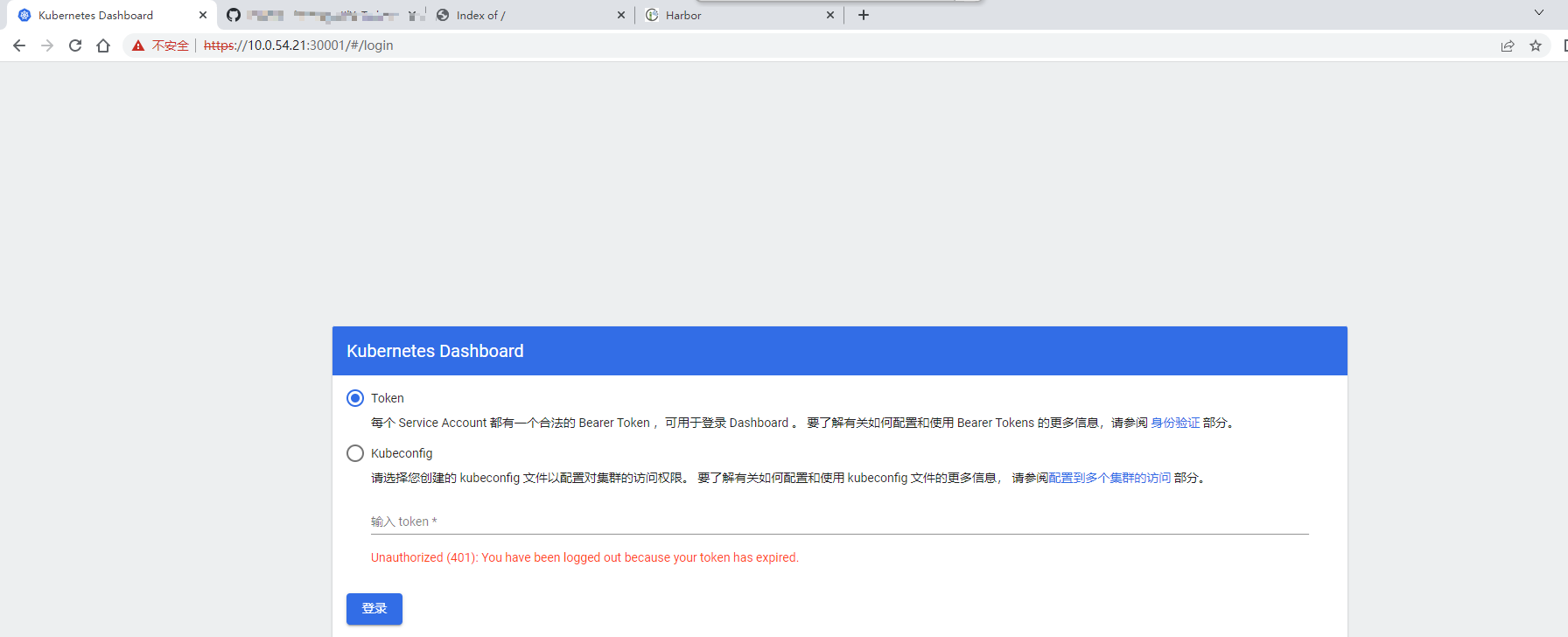

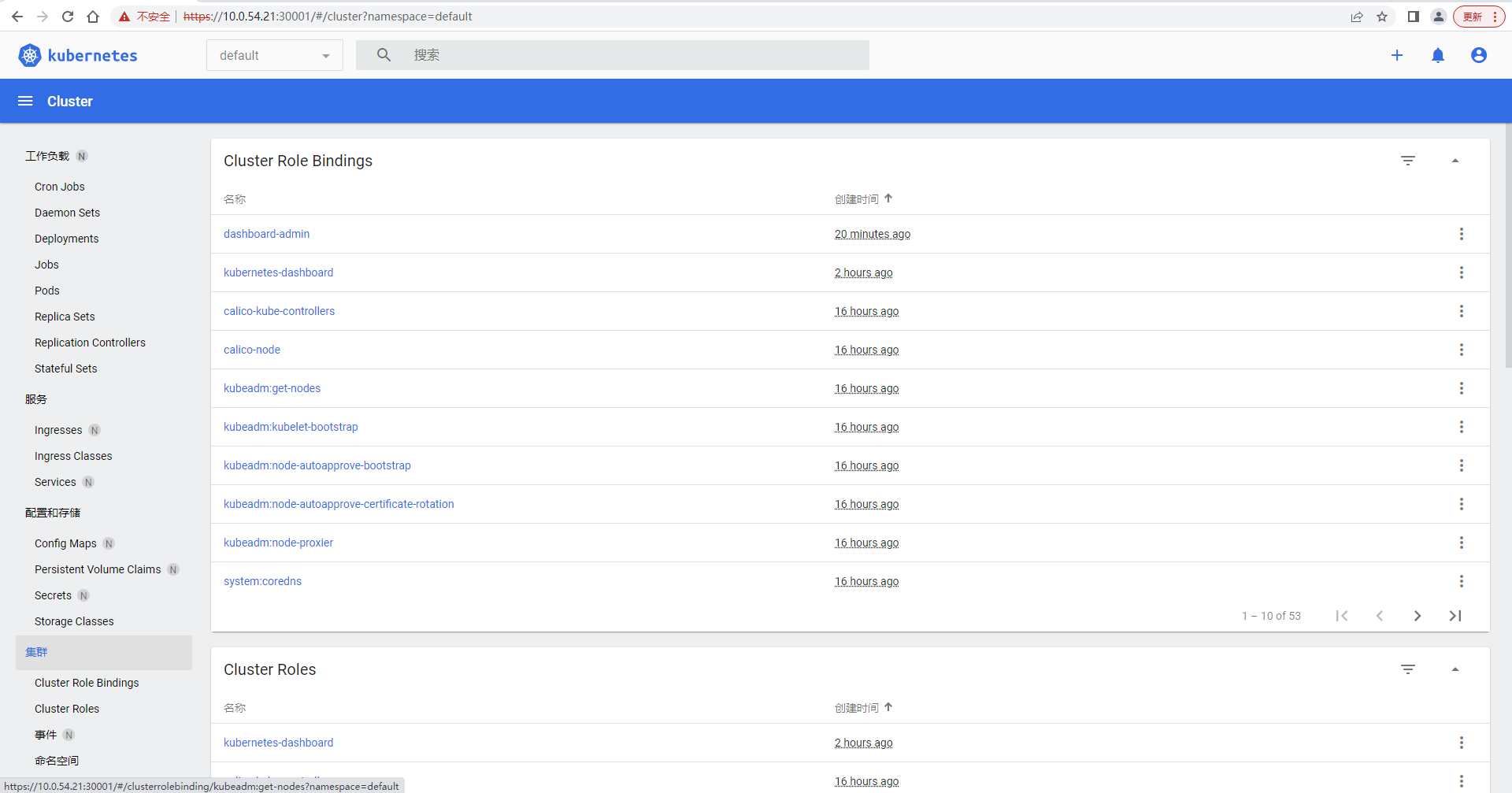

VII. Deploy the Dashboard

# Download the Dashboard manifest

[root@k8s-master0 ~]# wget https://raw.githubusercontent.com/cby-chen/Kubernetes/main/yaml/dashboard.yaml

# Deploy the Dashboard

[root@k8s-master0 ~]# kubectl apply -f dashboard.yaml

[root@k8s-master0 ~]# kubectl get pods -n kubernetes-dashboard

NAME READY STATUS RESTARTS AGE

dashboard-metrics-scraper-7bc864c59-2p8x8 1/1 Running 1 (64m ago) 114m

kubernetes-dashboard-6c7ccbcf87-x45ts 1/1 Running 1 (63m ago) 114m

Create a Dashboard admin account

Create a service account and bind it to the built-in cluster-admin ClusterRole:

# Create the service account

[root@k8s-master0 ~]# kubectl create sa dashboard-admin -n kubernetes-dashboard

# Grant it cluster-admin

[root@k8s-master0 ~]# kubectl create clusterrolebinding dashboard-admin --clusterrole=cluster-admin --serviceaccount=kubernetes-dashboard:dashboard-admin -n kubernetes-dashboard

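# Get a login token (valid for one hour by default; kubectl create token accepts --duration to request a different lifetime)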

[root@k8s-master0 ~]# kubectl create token dashboard-admin -n kubernetes-dashboard

eyJhbGciOiJSUzI1NiIsImtpZCI6ImVJZXpjWVpsZWNiTjVpZXRrM3pVQXlBejcyMkh0UGg2X2dOWkZKQ2puZXcifQ.eyJhdWQiOlsiaHR0cHM6Ly9rdWJlcm5ldGVzLmRlZmF1bHQuc3ZjLmNsdXN0ZXIubG9jYWwiXSwiZXhwIjoxNjgzODgwNTkxLCJpYXQiOjE2ODM4NzY5OTEsImlzcyI6Imh0dHBzOi8va3ViZXJuZXRlcy5kZWZhdWx0LnN2Yy5jbHVzdGVyLmxvY2FsIiwia3ViZXJuZXRlcy5pbyI6eyJuYW1lc3BhY2UiOiJrdWJlcm5ldGVzLWRhc2hib2FyZCIsInNlcnZpY2VhY2NvdW50Ijp7Im5hbWUiOiJkYXNoYm9hcmQtYWRtaW4iLCJ1aWQiOiI2Y2U1OTQzNC02Y2FhLTRkMGItODEzMy1kZTRlMzBlZTEwMmEifX0sIm5iZiI6MTY4Mzg3Njk5MSwic3ViIjoic3lzdGVtOnNlcnZpY2VhY2NvdW50Omt1YmVybmV0ZXMtZGFzaGJvYXJkOmRhc2hib2FyZC1hZG1pbiJ9.gnYgNSM1IYik_0hcvxDJfi3ScP4-6oua94FPllkB7KioFke4JQaheSEpk0Gv4eu8ljfaPgyP20mNjgWjsm5EJNoxX1XnW0wQm7WX4XM2qUwFBjNP7-Qb2aR_xyJLuitb-fMLxMNrqVt8pd1QLY1-I5PaSJ5qZjhqgbkOThGORlKTCW5J6KCQe7k-kZXWgAudwkMZ8BurLGbe4XSGAUt8t1_aiZAL-mDEsfN9Cy4RtJS0RGH5PUhSZx0LbsFpAX_wX_0EW_OdvESft8SYaEoETzbpJhDpW270692Vuc4vUJAZcdRJj_JvUw6mbImb-IqXSnRvAIrvPs4nj1FnwIEn6w
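The token is a JWT, and its middle segment holds the claims, including `iat` (issued at) and `exp` (expiry). In the sample token above, they are 3600 seconds apart. The sketch below assumes bash and GNU base64 and decodes the claims so you can check how long a token lasts. `jwt_payload` and `jwt_ttl` are hypothetical helpers. They only decode the payload and do not verify the signature:

```shell
#!/usr/bin/env bash
# Hypothetical helper: jwt_payload TOKEN
# Decodes the payload (2nd dot-separated part). JWTs use unpadded base64url,
# so translate to standard base64 and restore the "=" padding first.
jwt_payload() {
  local p
  p=$(printf '%s' "$1" | cut -d. -f2 | tr '_-' '/+')
  case $(( ${#p} % 4 )) in
    2) p="$p==" ;;
    3) p="$p=" ;;
  esac
  printf '%s' "$p" | base64 -d
}

# Hypothetical helper: jwt_ttl TOKEN -> seconds between "iat" and "exp"
jwt_ttl() {
  local json exp iat
  json=$(jwt_payload "$1") || return 1
  exp=$(grep -o '"exp":[0-9]*' <<< "$json" | cut -d: -f2)
  iat=$(grep -o '"iat":[0-9]*' <<< "$json" | cut -d: -f2)
  [ -n "$exp" ] && [ -n "$iat" ] || return 1
  echo $(( exp - iat ))
}
```

Usage: `jwt_ttl "$(kubectl create token dashboard-admin -n kubernetes-dashboard)"`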

Check the Services (the Dashboard is exposed on NodePort 30001):

[root@k8s-master0 ~]# kubectl get svc -A

NAMESPACE NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

default kubernetes ClusterIP 172.16.32.1 <none> 443/TCP 15h

kube-system kube-dns ClusterIP 172.16.32.10 <none> 53/UDP,53/TCP,9153/TCP 15h

kubernetes-dashboard dashboard-metrics-scraper ClusterIP 172.16.32.53 <none> 8000/TCP 115m

kubernetes-dashboard kubernetes-dashboard NodePort 172.16.32.171 <none> 443:30001/TCP 115m

Open https://<master-node-IP>:30001 in a browser:

https://10.0.54.21:30001

Enter the token to log in.
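Instead of hard-coding 30001 in the URL above, you can read the NodePort from the Service. The sketch below assumes bash and kubectl. `dashboard_url` is a hypothetical helper:

```shell
#!/usr/bin/env bash
# Hypothetical helper: dashboard_url NODE_IP
# Reads the nodePort of the kubernetes-dashboard Service and prints the URL.
dashboard_url() {
  local ip=$1 port
  port=$(kubectl -n kubernetes-dashboard get svc kubernetes-dashboard \
    -o jsonpath='{.spec.ports[0].nodePort}') || return 1
  [ -n "$port" ] || { echo "Service has no nodePort (is it type NodePort?)" >&2; return 1; }
  echo "https://$ip:$port"
}
```

Usage: `dashboard_url 10.0.54.21`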
