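Binary Installation of Kubernetes 1.22.1

Environment Preparation

Run every step in this section on all nodes.

Disable the firewall and SELinux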

# systemctl stop firewalld &&systemctl disable firewalld

Removed symlink /etc/systemd/system/multi-user.target.wants/firewalld.service.

Removed symlink /etc/systemd/system/dbus-org.fedoraproject.FirewallD1.service.

Disable SELinux temporarily

# setenforce 0

Disable SELinux permanently

# sed -i 's/SELINUX=enforcing/SELINUX=disabled/g' /etc/selinux/config

Set the hostname

# hostnamectl set-hostname <hostname> && bash

Disable swap

Disable it temporarily

[root@master ~]# swapoff -a

Disable it permanently by commenting out the swap entry in /etc/fstab

# vim /etc/fstab

#/dev/mapper/centos-swap swap swap defaults 0 0
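The manual edit of /etc/fstab can also be scripted. The following is a minimal sketch, assuming GNU sed (as shipped with CentOS); the function name comment_out_swap is hypothetical and not part of the original steps. It prefixes every active line that contains a swap field with #, which mirrors the manual change shown above.

```shell
# Comment out every active swap entry in an fstab-style file.
# Usage (as root, after taking a backup): comment_out_swap /etc/fstab
comment_out_swap() {
    # Skip lines that are already comments; on the rest, prefix '#'
    # when the line contains the word "swap" surrounded by whitespace.
    sed -i -E '/^[[:space:]]*#/!s/^(.*[[:space:]]swap[[:space:]].*)$/#\1/' "$1"
}
```

Running it twice is harmless, because lines that already start with # are skipped.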

Add host name resolution

# cat >> /etc/hosts << EOF

> 192.168.54.10 master

> 192.168.54.20 node

> EOF
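Because cat >> appends unconditionally, running the step above twice leaves duplicate lines in /etc/hosts. A small sketch of an idempotent alternative follows; the helper name add_host_entry is an assumption for illustration only.

```shell
# Append "IP NAME" to a hosts-style file only if that exact line is not present.
# Usage: add_host_entry 192.168.54.10 master /etc/hosts
add_host_entry() {
    ip=$1 name=$2 file=$3
    # -x: match the whole line, -F: fixed string (dots are literal), -q: quiet
    if ! grep -qxF "$ip $name" "$file" 2>/dev/null; then
        printf '%s %s\n' "$ip" "$name" >> "$file"
    fi
}
```

Calling it once for master and once for node gives the same two entries as the heredoc, no matter how often the script is rerun.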

Pass bridged IPv4 traffic to the iptables chains

# cat >> /etc/sysctl.d/k8s.conf << EOF

> net.bridge.bridge-nf-call-ip6tables = 1

> net.bridge.bridge-nf-call-iptables = 1

> EOF

Apply the settings

# sysctl --system

Time synchronization

[root@master ~]# yum install -y chrony

[root@master ~]# vim /etc/chrony.conf

#server 0.centos.pool.ntp.org iburst

#server 1.centos.pool.ntp.org iburst

#server 2.centos.pool.ntp.org iburst

#server 3.centos.pool.ntp.org iburst

server master iburst

allow 192.168.54.0/24

[root@node ~]# yum install -y chrony

[root@node ~]# vim /etc/chrony.conf

#server 0.centos.pool.ntp.org iburst

#server 1.centos.pool.ntp.org iburst

#server 2.centos.pool.ntp.org iburst

#server 3.centos.pool.ntp.org iburst

server master iburst

# chronyc

chronyc> sources

chronyc> makestep

Install common tools

# yum install open-vm-tools bash-completion lrzsz tree vim wget net-tools -y

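Deploy etcd

etcd is a distributed key-value store, and Kubernetes uses it to persist cluster data, so an etcd database has to be in place first. To avoid a single point of failure, etcd should be deployed as a cluster: three members tolerate the failure of one machine, and five members tolerate the failure of two.

Because of limits in my environment, I only set up a single-node etcd here.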
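The failure-tolerance figures come from etcd's majority quorum: a cluster of N members needs N/2 + 1 (integer division) members to agree, so it can lose the remainder. A short sketch of that arithmetic, with a hypothetical function name:

```shell
# Print quorum size and tolerated failures for an etcd cluster of N members.
etcd_fault_tolerance() {
    n=$1
    quorum=$(( n / 2 + 1 ))        # majority needed to commit a write
    tolerated=$(( n - quorum ))    # members that can fail while quorum holds
    echo "members=$n quorum=$quorum tolerated_failures=$tolerated"
}

etcd_fault_tolerance 1   # members=1 quorum=1 tolerated_failures=0
etcd_fault_tolerance 3   # members=3 quorum=2 tolerated_failures=1
etcd_fault_tolerance 5   # members=5 quorum=3 tolerated_failures=2
```

An even member count adds no tolerance (4 members still tolerate only 1 failure), which is why odd cluster sizes are the usual choice.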

CFSSL

Download the CFSSL tools

GitHub: https://github.com/cloudflare/cfssl

Official site: https://pkg.cfssl.org/

Tool downloads:

https://pkg.cfssl.org/R1.2/cfssl_linux-amd64

https://pkg.cfssl.org/R1.2/cfssljson_linux-amd64

https://pkg.cfssl.org/R1.2/cfssl-certinfo_linux-amd64

Download the three files and upload them to the server

[root@master ~]# ll

total 370272

-rw-------. 1 root root 1646 Mar 26 2022 anaconda-ks.cfg

-rw-r--r-- 1 root root 6595195 Jan 8 11:52 cfssl-certinfo_linux-amd64

-rw-r--r-- 1 root root 2277873 Jan 8 11:52 cfssljson_linux-amd64

-rw-r--r-- 1 root root 10376657 Jan 8 11:52 cfssl_linux-amd64

Install the CFSSL tools

[root@master ~]# chmod +x cfssl-certinfo_linux-amd64 cfssljson_linux-amd64 cfssl_linux-amd64

[root@master ~]# mv cfssl-certinfo_linux-amd64 /usr/local/bin/cfssl-certinfo

[root@master ~]# mv cfssljson_linux-amd64 /usr/local/bin/cfssljson

[root@master ~]# mv cfssl_linux-amd64 /usr/local/bin/cfssl

[root@master ~]# ll /usr/local/bin/

total 18808

-rwxr-xr-x 1 root root 10376657 Jan 8 11:52 cfssl

-rwxr-xr-x 1 root root 6595195 Jan 8 11:52 cfssl-certinfo

-rwxr-xr-x 1 root root 2277873 Jan 8 11:52 cfssljson

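Prepare the etcd certificates

Create directories for the certificate files

[root@master ~]# mkdir -p ~/TLS/{etcd,k8s}

Create the configuration files

[root@master ~]# cd ~/TLS/etcd/

Configure the certificate signing policy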

[root@master etcd]# vim ca-config.json

{

"signing": {

"default": {

"expiry": "87600h"

},

"profiles": {

"www": {

"expiry": "87600h",

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

]

}

}

}

}

Create the CA certificate signing request file

[root@master etcd]# vim ca-csr.json

{

"CN": "etcd CA",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "Beijing",

"ST": "Beijing"

}

]

}

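Generate the CA certificate and private key

This produces the files the CA needs, ca-key.pem (private key) and ca.pem (certificate), plus ca.csr (certificate signing request), which can be used for cross-signing or re-signing.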

[root@master etcd]# cfssl gencert -initca ca-csr.json | cfssljson -bare ca -

2023/01/08 14:50:41 [INFO] generating a new CA key and certificate from CSR

2023/01/08 14:50:41 [INFO] generate received request

2023/01/08 14:50:41 [INFO] received CSR

2023/01/08 14:50:41 [INFO] generating key: rsa-2048

2023/01/08 14:50:42 [INFO] encoded CSR

2023/01/08 14:50:42 [INFO] signed certificate with serial number 219158393956169562968568888222085851996787807866

[root@master etcd]# ls

ca-config.json ca.csr ca-csr.json ca-key.pem ca.pem

Create the server certificate signing request file

[root@master etcd]# vim server-csr.json

{

"CN": "etcd",

"hosts": [

"192.168.54.10",

"192.168.54.20",

"192.168.54.30"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing"

}

]

}

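Note: the hosts field in the file above must list the cluster-internal communication IP of every etcd node, without exception. To make later expansion easier, you can add a few reserved IPs.

Here "192.168.54.20" and "192.168.54.30" are reserved IPs, set aside for building a cluster later.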
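Since a missing IP in hosts only shows up later as a TLS error, it can help to check the CSR file before signing. The sketch below is an assumption-laden shortcut: the helper name csr_has_hosts is hypothetical, and it does a plain text search for the quoted address rather than parsing the JSON, so it only confirms that the string appears somewhere in the file.

```shell
# Succeed if every given address appears as a quoted string in the CSR JSON file.
# Usage: csr_has_hosts server-csr.json 192.168.54.10 192.168.54.20 192.168.54.30
csr_has_hosts() {
    file=$1
    shift
    for host in "$@"; do
        # -F keeps the dots in the IP literal instead of regex wildcards;
        # the surrounding quotes stop 192.168.54.1 from matching 192.168.54.10.
        if ! grep -qF "\"$host\"" "$file"; then
            echo "missing from hosts: $host"
            return 1
        fi
    done
    return 0
}
```

A non-zero exit status means at least one address still has to be added to server-csr.json before the certificate is generated.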

Generate the server certificate and private key

[root@master etcd]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=www server-csr.json | cfssljson -bare server

Install and configure etcd

Download etcd

Download the binary release from GitHub

https://github.com/etcd-io/etcd/releases/download/v3.4.9/etcd-v3.4.9-linux-amd64.tar.gz

Upload the etcd package to the server

[root@master ~]# ls

anaconda-ks.cfg etcd-v3.4.9-linux-amd64.tar.gz

Create the etcd working directory

[root@master ~]# mkdir /opt/etcd/{bin,cfg,ssl} -p

Extract the etcd archive

[root@master ~]# tar -zxf etcd-v3.4.9-linux-amd64.tar.gz

Copy the binaries to the etcd working directory

[root@master ~]# cp etcd-v3.4.9-linux-amd64/{etcd,etcdctl} /opt/etcd/bin/

Create the etcd configuration file

[root@master ~]# cd /opt/etcd/cfg/

[root@master cfg]# vim etcd.conf

#[Member]

ETCD_NAME="etcd-1"

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="https://192.168.54.10:2380"

ETCD_LISTEN_CLIENT_URLS="https://192.168.54.10:2379"

#[Clustering]

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://192.168.54.10:2380"

ETCD_ADVERTISE_CLIENT_URLS="https://192.168.54.10:2379"

ETCD_INITIAL_CLUSTER="etcd-1=https://192.168.54.10:2380"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_INITIAL_CLUSTER_STATE="new"

Manage etcd with systemd

[root@master cfg]# vim /usr/lib/systemd/system/etcd.service

[Unit]

Description=Etcd Server

After=network.target

After=network-online.target

Wants=network-online.target

[Service]

Type=notify

EnvironmentFile=/opt/etcd/cfg/etcd.conf

ExecStart=/opt/etcd/bin/etcd \

--cert-file=/opt/etcd/ssl/server.pem \

--key-file=/opt/etcd/ssl/server-key.pem \

--peer-cert-file=/opt/etcd/ssl/server.pem \

--peer-key-file=/opt/etcd/ssl/server-key.pem \

--trusted-ca-file=/opt/etcd/ssl/ca.pem \

--peer-trusted-ca-file=/opt/etcd/ssl/ca.pem \

--logger=zap

Restart=on-failure

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

Copy the newly generated certificates to the etcd working directory

[root@master cfg]# cd ~/TLS/etcd/

[root@master etcd]# cp ca*.pem server*.pem /opt/etcd/ssl/

[root@master etcd]# ls /opt/etcd/ssl/

ca-key.pem ca.pem server-key.pem server.pem

Start the etcd service

[root@master etcd]# systemctl start etcd

[root@master etcd]# systemctl enable etcd

Created symlink from /etc/systemd/system/multi-user.target.wants/etcd.service to /usr/lib/systemd/system/etcd.service.

Check that etcd started successfully

[root@master etcd]# ETCDCTL_API=3 /opt/etcd/bin/etcdctl --cacert=/opt/etcd/ssl/ca.pem --cert=/opt/etcd/ssl/server.pem --key=/opt/etcd/ssl/server-key.pem \

> --endpoints="https://192.168.54.10:2379" endpoint health

https://192.168.54.10:2379 is healthy: successfully committed proposal: took = 5.53023ms

The "is healthy: successfully committed proposal" output means etcd started correctly.

(Because of limits in my environment, I only set up a single node.)

For a multi-node cluster, copy /opt/etcd/ and /usr/lib/systemd/system/etcd.service to each additional node:

scp -r /opt/etcd/ root@192.168.54.20:/opt/

scp /usr/lib/systemd/system/etcd.service root@192.168.54.20:/usr/lib/systemd/system/

scp -r /opt/etcd/ root@192.168.54.30:/opt/

scp /usr/lib/systemd/system/etcd.service root@192.168.54.30:/usr/lib/systemd/system/

Then, in etcd.conf on each node, change the node name and the IP addresses to those of the current server.

On the master, add the 192.168.54.20 and 192.168.54.30 nodes to the etcd cluster:

[root@master etcd]# cat /opt/etcd/cfg/etcd.conf

ETCD_INITIAL_CLUSTER="etcd-1=https://192.168.54.10:2380,etcd-2=https://192.168.54.20:2380,etcd-3=https://192.168.54.30:2380"

On 192.168.54.20:

[root@k8s-node2 ~]# cat /opt/etcd/cfg/etcd.conf

#[Member]

ETCD_NAME="etcd-2"

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="https://192.168.54.20:2380"

ETCD_LISTEN_CLIENT_URLS="https://192.168.54.20:2379"

#[Clustering]

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://192.168.54.20:2380"

ETCD_ADVERTISE_CLIENT_URLS="https://192.168.54.20:2379"

ETCD_INITIAL_CLUSTER="etcd-1=https://192.168.54.10:2380,etcd-2=https://192.168.54.20:2380,etcd-3=https://192.168.54.30:2380"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_INITIAL_CLUSTER_STATE="new"

On 192.168.54.30:

[root@k8s-node2 ~]# cat /opt/etcd/cfg/etcd.conf

#[Member]

ETCD_NAME="etcd-3"

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="https://192.168.54.30:2380"

ETCD_LISTEN_CLIENT_URLS="https://192.168.54.30:2379"

#[Clustering]

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://192.168.54.30:2380"

ETCD_ADVERTISE_CLIENT_URLS="https://192.168.54.30:2379"

ETCD_INITIAL_CLUSTER="etcd-1=https://192.168.54.10:2380,etcd-2=https://192.168.54.20:2380,etcd-3=https://192.168.54.30:2380"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_INITIAL_CLUSTER_STATE="new"
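The ETCD_INITIAL_CLUSTER value has to be identical on every member, and typing it by hand on each node invites mismatches. As an illustration only, the hypothetical helper below builds the string from NAME=IP pairs, assuming the peer port 2380 and the https scheme used throughout this guide.

```shell
# Build the ETCD_INITIAL_CLUSTER value from NAME=IP pairs.
# Usage: build_initial_cluster etcd-1=192.168.54.10 etcd-2=192.168.54.20
build_initial_cluster() {
    result=""
    for pair in "$@"; do
        name=${pair%%=*}   # text before the first '='
        ip=${pair#*=}      # text after the first '='
        # Prepend a comma separator only when result is already non-empty.
        result="${result:+$result,}$name=https://$ip:2380"
    done
    printf '%s\n' "$result"
}
```

The printed value can be pasted into etcd.conf on all members, so only ETCD_NAME and the listen/advertise URLs differ per node.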

Deploy Docker

Deploy it on both the master and the node.

Configure the Aliyun Docker repository

# curl -o /etc/yum.repos.d/docker-ce.repo https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

Aliyun CentOS repository (configure as needed)

# curl -o /etc/yum.repos.d/CentOS-Base.repo https://mirrors.aliyun.com/repo/Centos-7.repo

# yum makecache

Install dependencies

# yum install -y lvm2 device-mapper-persistent-data.x86_64

Install Docker

[root@master etcd]# yum install -y docker-ce

Adjust the Docker configuration

[root@master etcd]# cat > /etc/docker/daemon.json << EOF

{

"insecure-registries": ["0.0.0.0/0"],

"registry-mirrors": ["https://e0u0eb6u.mirror.aliyuncs.com"]

}

EOF

Start Docker and enable it at boot

[root@master etcd]# systemctl start docker && systemctl enable docker

Deploy the Master

Deploy kube-apiserver

Prepare the kube-apiserver certificates

[root@master etcd]# cd ~/TLS/k8s/

Create the configuration files

Configure the certificate signing policy

[root@master k8s]# vim ca-config.json

{

"signing": {

"default": {

"expiry": "87600h"

},

"profiles": {

"kubernetes": {

"expiry": "87600h",

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

]

}

}

}

}

Create the CA certificate signing request file

[root@master k8s]# vim ca-csr.json

{

"CN": "kubernetes",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "Beijing",

"ST": "Beijing",

"O": "k8s",

"OU": "System"

}

]

}

Generate the CA certificate and private key

[root@master k8s]# cfssl gencert -initca ca-csr.json | cfssljson -bare ca -

2023/01/08 23:32:27 [INFO] generating a new CA key and certificate from CSR

2023/01/08 23:32:27 [INFO] generate received request

2023/01/08 23:32:27 [INFO] received CSR

2023/01/08 23:32:27 [INFO] generating key: rsa-2048

2023/01/08 23:32:27 [INFO] encoded CSR

2023/01/08 23:32:27 [INFO] signed certificate with serial number 554658371870611561054441169277683433963118969245

Use the self-signed CA to issue the kube-apiserver HTTPS certificate

Create the certificate signing request file

[root@master k8s]# vim server-csr.json

{

"CN": "kubernetes",

"hosts": [

"10.0.0.1",

"127.0.0.1",

"192.168.54.10",

"192.168.54.20",

"192.168.54.30",

"192.168.54.41",

"192.168.54.42",

"kubernetes",

"kubernetes.default",

"kubernetes.default.svc",

"kubernetes.default.svc.cluster",

"kubernetes.default.svc.cluster.local"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing",

"O": "k8s",

"OU": "System"

}

]

}

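Note: the hosts field in the file above must list every Master, load balancer (LB), and VIP address, without exception. To make later expansion easier, you can add a few reserved IPs.

Generate the certificate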

[root@master k8s]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes server-csr.json | cfssljson -bare server

2023/01/08 23:39:41 [INFO] generate received request

2023/01/08 23:39:41 [INFO] received CSR

2023/01/08 23:39:41 [INFO] generating key: rsa-2048

2023/01/08 23:39:41 [INFO] encoded CSR

2023/01/08 23:39:41 [INFO] signed certificate with serial number 147291973083138082236348739397891869821010420303

2023/01/08 23:39:41 [WARNING] This certificate lacks a "hosts" field. This makes it unsuitable for

websites. For more information see the Baseline Requirements for the Issuance and Management

of Publicly-Trusted Certificates, v.1.1.6, from the CA/Browser Forum (https://cabforum.org);

specifically, section 10.2.3 ("Information Requirements").

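Download the Kubernetes server binaries

https://dl.k8s.io/v1.22.1/kubernetes-server-linux-amd64.tar.gz

Create the working directory

[root@master ~]# mkdir /opt/kubernetes/{bin,cfg,ssl,logs} -p

Extract the archive and copy the binaries to the working directory

[root@master ~]# tar -zxf kubernetes-server-linux-amd64.tar.gz

[root@master ~]# cd kubernetes/server/bin

[root@master bin]# cp kube-apiserver kube-scheduler kube-controller-manager /opt/kubernetes/bin/

[root@master bin]# cp kubectl /usr/local/bin/

Create the configuration file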

[root@master bin]# vim /opt/kubernetes/cfg/kube-apiserver.conf

KUBE_APISERVER_OPTS="--logtostderr=false \

--v=2 \

--log-dir=/opt/kubernetes/logs \

--etcd-servers=https://192.168.54.10:2379 \

--bind-address=192.168.54.10 \

--secure-port=6443 \

--advertise-address=192.168.54.10 \

--allow-privileged=true \

--service-cluster-ip-range=10.0.0.0/24 \

--enable-admission-plugins=NodeRestriction \

--authorization-mode=RBAC,Node \

--enable-bootstrap-token-auth=true \

--token-auth-file=/opt/kubernetes/cfg/token.csv \

--service-node-port-range=30000-32767 \

--kubelet-client-certificate=/opt/kubernetes/ssl/server.pem \

--kubelet-client-key=/opt/kubernetes/ssl/server-key.pem \

--tls-cert-file=/opt/kubernetes/ssl/server.pem \

--tls-private-key-file=/opt/kubernetes/ssl/server-key.pem \

--client-ca-file=/opt/kubernetes/ssl/ca.pem \

--service-account-key-file=/opt/kubernetes/ssl/ca-key.pem \

--service-account-issuer=api \

--service-account-signing-key-file=/opt/kubernetes/ssl/ca-key.pem \

--etcd-cafile=/opt/etcd/ssl/ca.pem \

--etcd-certfile=/opt/etcd/ssl/server.pem \

--etcd-keyfile=/opt/etcd/ssl/server-key.pem \

--requestheader-client-ca-file=/opt/kubernetes/ssl/ca.pem \

--proxy-client-cert-file=/opt/kubernetes/ssl/server.pem \

--proxy-client-key-file=/opt/kubernetes/ssl/server-key.pem \

--requestheader-allowed-names=kubernetes \

--requestheader-extra-headers-prefix=X-Remote-Extra- \

--requestheader-group-headers=X-Remote-Group \

--requestheader-username-headers=X-Remote-User \

--enable-aggregator-routing=true \

--audit-log-maxage=30 \

--audit-log-maxbackup=3 \

--audit-log-maxsize=100 \

--audit-log-path=/opt/kubernetes/logs/k8s-audit.log"

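• --logtostderr: set to false so that logs go to files instead of stderr

• --v: log verbosity level

• --log-dir: log directory

• --etcd-servers: etcd cluster endpoints

• --bind-address: listen address

• --secure-port: HTTPS secure port

• --advertise-address: address advertised to the rest of the cluster

• --allow-privileged: allow privileged containers

• --service-cluster-ip-range: virtual IP range for Services

• --enable-admission-plugins: admission control plugins

• --authorization-mode: authorization modes; enables RBAC authorization and Node authorization (node self-management)

• --enable-bootstrap-token-auth: enable the TLS bootstrap mechanism

• --token-auth-file: bootstrap token file

• --service-node-port-range: default port range allocated to NodePort Services

• --kubelet-client-xxx: client certificate the apiserver uses to access the kubelet

• --tls-xxx-file: apiserver HTTPS certificate

• Flags required since version 1.20: --service-account-issuer, --service-account-signing-key-file

• --etcd-xxxfile: certificates for connecting to the etcd cluster

• --audit-log-xxx: audit log settings

• Flags for enabling the aggregation layer: --requestheader-client-ca-file, --proxy-client-cert-file, --proxy-client-key-file, --requestheader-allowed-names, --requestheader-extra-headers-prefix, --requestheader-group-headers, --requestheader-username-headers, --enable-aggregator-routing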
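Many of these flags point at files that are created in later steps, and a missing file is a common reason for kube-apiserver failing to start. The sketch below lists every absolute path used as a flag value in an options file and reports the ones that do not exist yet. The function names are hypothetical, and the pattern assumes the --flag=/path layout used in this guide.

```shell
# Print every absolute path that appears as a flag value in an options file.
list_flag_paths() {
    grep -oE -- '--[a-z-]+=/[^[:space:]\\"]+' "$1" | cut -d= -f2
}

# Report flag paths that do not exist; return non-zero if any are missing.
# Usage: check_flag_paths /opt/kubernetes/cfg/kube-apiserver.conf
check_flag_paths() {
    missing=0
    for p in $(list_flag_paths "$1"); do
        if [ ! -e "$p" ]; then
            echo "missing: $p"
            missing=1
        fi
    done
    return "$missing"
}
```

Some paths are expected to be reported before the first start, for example the audit log file, which the apiserver writes itself, and token.csv, which is created in the TLS bootstrapping step.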

Copy the newly generated certificates to the working directory

[root@master bin]# cd ~/TLS/k8s/

[root@master k8s]# cp ca*.pem server*.pem /opt/kubernetes/ssl/

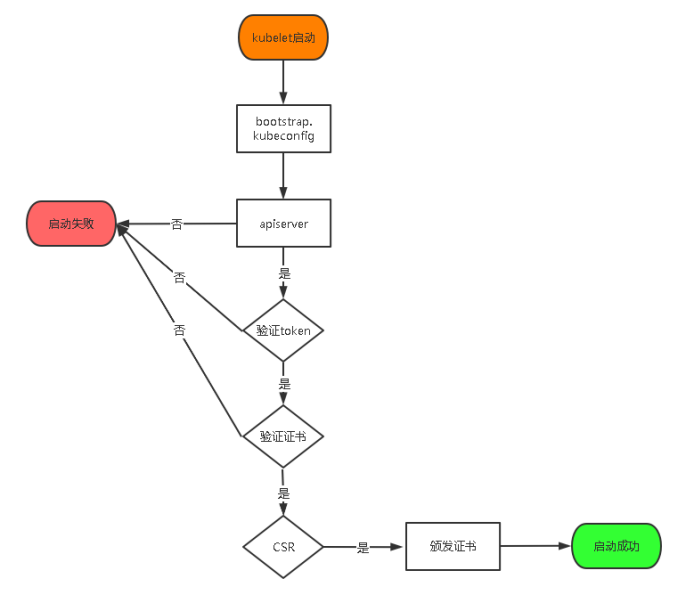

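Enable the TLS Bootstrapping mechanism

TLS bootstrapping: once the master's apiserver has TLS authentication enabled, the kubelet and kube-proxy on each node must present a valid CA-signed certificate to communicate with kube-apiserver. With many nodes, issuing these client certificates by hand is a lot of work and makes scaling the cluster more complex. To simplify the process, Kubernetes introduced TLS bootstrapping to issue client certificates automatically: the kubelet requests a certificate from the apiserver as a low-privilege user, and the kubelet certificate is signed dynamically by the control plane. This approach is strongly recommended on nodes. It is currently used mainly for the kubelet; kube-proxy still gets a certificate that we issue ourselves.

Generate a token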

[root@master ~]# head -c 16 /dev/urandom | od -An -t x | tr -d ' '

0a1dfb3a3df8bd803ed28c6dcf3a7354

Create the token file referenced by --token-auth-file in the configuration (/opt/kubernetes/cfg/token.csv)

[root@master ~]# vim /opt/kubernetes/cfg/token.csv

# Format: token,user name,UID,user group

0a1dfb3a3df8bd803ed28c6dcf3a7354,kubelet-bootstrap,10001,"system:node-bootstrapper"
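Generating the token and writing the CSV record can be combined so the value is never copied by hand. This is a minimal sketch using the same head/od/tr pipeline as above; the function names are hypothetical, and the user, UID, and group are the ones shown in this guide.

```shell
# Generate a random 32-hex-character token (16 random bytes).
gen_bootstrap_token() {
    head -c 16 /dev/urandom | od -An -t x | tr -d ' \n'
}

# Print one token.csv record in the format: token,user name,UID,"user group"
token_csv_line() {
    printf '%s,kubelet-bootstrap,10001,"system:node-bootstrapper"\n' "$1"
}
```

For example, token_csv_line "$(gen_bootstrap_token)" > /opt/kubernetes/cfg/token.csv writes a fresh record. The same token must later be used wherever the kubelet bootstrap credentials are configured.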

Manage kube-apiserver with systemd

[root@master ~]# vim /usr/lib/systemd/system/kube-apiserver.service

[Unit]

Description=Kubernetes API Server

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=/opt/kubernetes/cfg/kube-apiserver.conf

ExecStart=/opt/kubernetes/bin/kube-apiserver $KUBE_APISERVER_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target

Start kube-apiserver and enable it at boot

[root@master ~]# systemctl daemon-reload

[root@master ~]# systemctl start kube-apiserver

[root@master ~]# systemctl enable kube-apiserver

[root@master ~]# systemctl status kube-apiserver

● kube-apiserver.service - Kubernetes API Server

Loaded: loaded (/usr/lib/systemd/system/kube-apiserver.service; enabled; vendor preset: disabled)

Active: active (running) since Mon 2023-01-09 11:38:46 CST; 3s ago

Docs: https://github.com/kubernetes/kubernetes

Main PID: 54528 (kube-apiserver)

Tasks: 8

Memory: 235.5M

CGroup: /system.slice/kube-apiserver.service

└─54528 /opt/kubernetes/bin/kube-apiserver --logtostderr=false --v=2 --log-dir=/opt/kubernetes/logs --etcd-servers=https://192.168.54.10:2379 --bind-a...

Jan 09 11:38:46 master systemd[1]: Started Kubernetes API Server.

Jan 09 11:38:47 master kube-apiserver[54528]: E0109 11:38:47.017862 54528 instance.go:380] Could not construct pre-rendered responses for ServiceAccou... got: api

Hint: Some lines were ellipsized, use -l to show in full.

Deploy kube-controller-manager

Create the configuration file

[root@master ~]# vim /opt/kubernetes/cfg/kube-controller-manager.conf

KUBE_CONTROLLER_MANAGER_OPTS="--logtostderr=false \

--v=2 \

--log-dir=/opt/kubernetes/logs \

--leader-elect=true \

--kubeconfig=/opt/kubernetes/cfg/kube-controller-manager.kubeconfig \

--bind-address=127.0.0.1 \

--allocate-node-cidrs=true \

--cluster-cidr=10.244.0.0/16 \

--service-cluster-ip-range=10.0.0.0/24 \

--cluster-signing-cert-file=/opt/kubernetes/ssl/ca.pem \

--cluster-signing-key-file=/opt/kubernetes/ssl/ca-key.pem \

--root-ca-file=/opt/kubernetes/ssl/ca.pem \

--service-account-private-key-file=/opt/kubernetes/ssl/ca-key.pem \

--cluster-signing-duration=87600h0m0s"

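• --kubeconfig: kubeconfig file used to connect to the apiserver

• --leader-elect: automatic leader election when several instances of this component run (HA)

• --cluster-signing-cert-file/--cluster-signing-key-file: the CA that automatically issues kubelet certificates; it must be the same CA the apiserver uses

Generate the kubeconfig file

Create the kube-controller-manager certificate signing request file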

[root@master k8s]# pwd

/root/TLS/k8s

[root@master k8s]# vim kube-controller-manager-csr.json

{

"CN": "kube-controller-manager",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing",

"O": "system:masters",

"OU": "System"

}

]

}

Generate the certificate and private key

[root@master k8s]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-controller-manager-csr.json | cfssljson -bare kube-controller-manager

2023/01/09 15:15:32 [INFO] generate received request

2023/01/09 15:15:32 [INFO] received CSR

2023/01/09 15:15:32 [INFO] generating key: rsa-2048

2023/01/09 15:15:32 [INFO] encoded CSR

2023/01/09 15:15:32 [INFO] signed certificate with serial number 31411374513799409930916518126925814006743794261

2023/01/09 15:15:32 [WARNING] This certificate lacks a "hosts" field. This makes it unsuitable for

websites. For more information see the Baseline Requirements for the Issuance and Management

of Publicly-Trusted Certificates, v.1.1.6, from the CA/Browser Forum (https://cabforum.org);

specifically, section 10.2.3 ("Information Requirements").

[root@master k8s]# ll

total 52

-rw-r--r-- 1 root root 1033 Jan 9 15:15 kube-controller-manager.csr

-rw-r--r-- 1 root root 305 Jan 9 15:08 kube-controller-manager-csr.json

-rw------- 1 root root 1675 Jan 9 15:15 kube-controller-manager-key.pem

-rw-r--r-- 1 root root 1424 Jan 9 15:15 kube-controller-manager.pem

Generate the kubeconfig file

[root@master k8s]# KUBE_CONFIG="/opt/kubernetes/cfg/kube-controller-manager.kubeconfig"

[root@master k8s]# KUBE_APISERVER="https://192.168.54.10:6443"

[root@master k8s]# kubectl config set-cluster kubernetes \

--certificate-authority=/opt/kubernetes/ssl/ca.pem \

--embed-certs=true \

--server=${KUBE_APISERVER} \

--kubeconfig=${KUBE_CONFIG}

Cluster "kubernetes" set.

[root@master k8s]# kubectl config set-credentials kube-controller-manager \

--client-certificate=./kube-controller-manager.pem \

--client-key=./kube-controller-manager-key.pem \

--embed-certs=true \

--kubeconfig=${KUBE_CONFIG}

User "kube-controller-manager" set.

[root@master k8s]# kubectl config set-context default \

--cluster=kubernetes \

--user=kube-controller-manager \

--kubeconfig=${KUBE_CONFIG}

Context "default" created.

[root@master k8s]# kubectl config use-context default --kubeconfig=${KUBE_CONFIG}

Switched to context "default".
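The same four kubectl config steps are repeated for each control-plane component, with only the user name, certificate files, and output path changing. As a sketch, they can be wrapped in one function; the name make_kubeconfig and its argument order are assumptions, while the kubectl subcommands and flags are exactly the ones used above.

```shell
# Write a kubeconfig for one component using the four kubectl config steps.
# Usage: make_kubeconfig USER KUBECONFIG_PATH APISERVER_URL CA_CERT CLIENT_CERT CLIENT_KEY
make_kubeconfig() {
    user=$1 kube_config=$2 apiserver=$3 ca=$4 cert=$5 key=$6

    # Each step runs only if the previous one succeeded.
    kubectl config set-cluster kubernetes \
        --certificate-authority="$ca" \
        --embed-certs=true \
        --server="$apiserver" \
        --kubeconfig="$kube_config" &&
    kubectl config set-credentials "$user" \
        --client-certificate="$cert" \
        --client-key="$key" \
        --embed-certs=true \
        --kubeconfig="$kube_config" &&
    kubectl config set-context default \
        --cluster=kubernetes \
        --user="$user" \
        --kubeconfig="$kube_config" &&
    kubectl config use-context default --kubeconfig="$kube_config"
}
```

For the controller manager this would be called from ~/TLS/k8s as make_kubeconfig kube-controller-manager /opt/kubernetes/cfg/kube-controller-manager.kubeconfig https://192.168.54.10:6443 /opt/kubernetes/ssl/ca.pem ./kube-controller-manager.pem ./kube-controller-manager-key.pem.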

View the generated kubeconfig file

[root@master k8s]# cat /opt/kubernetes/cfg/kube-controller-manager.kubeconfig

apiVersion: v1

clusters:

- cluster:

certificate-authority-data: LS0tLS1CRUdJTiBDRVJUSUZJQ0FURS0tLS0tCk1JSUR2akNDQXFhZ0F3SUJBZ0lVWVNlOTJPenNLN0hGaFhxSjJvNWczU2I0d1owd0RRWUpLb1pJaHZjTkFRRUwKQlFBd1pURUxNQWtHQTFVRUJoTUNRMDR4RURBT0JnTlZCQWdUQjBKbGFXcHBibWN4RURBT0JnTlZCQWNUQjBKbAphV3BwYm1jeEREQUtCZ05WQkFvVEEyczRjekVQTUEwR0ExVUVDeE1HVTNsemRHVnRNUk13RVFZRFZRUURFd3ByCmRXSmxjbTVsZEdWek1CNFhEVEl6TURFd09ERTFNamN3TUZvWERUSTRNREV3TnpFMU1qY3dNRm93WlRFTE1Ba0cKQTFVRUJoTUNRMDR4RURBT0JnTlZCQWdUQjBKbGFXcHBibWN4RURBT0JnTlZCQWNUQjBKbGFXcHBibWN4RERBSwpCZ05WQkFvVEEyczRjekVQTUEwR0ExVUVDeE1HVTNsemRHVnRNUk13RVFZRFZRUURFd3ByZFdKbGNtNWxkR1Z6Ck1JSUJJakFOQmdrcWhraUc5dzBCQVFFRkFBT0NBUThBTUlJQkNnS0NBUUVBeFNrWXJaS0NxbkxFN1RlMlFqQkUKRGt4Tk9COHJrQTFUOHdQZGc0TTVxc0lsb090bENaQlpMd1RpV0hoeklHaDhuWExBcWdwYW1samg4SGhCa0ozWAp0eENSdGxJTDNPTkRHM3plVFZ2WVNUclN6aVowc1pzQTlSaVNqSW5MLzNvRHd4TUxnSUdxQmFZUDBkTzUvb2UyCmJmWm1iM1Vka08vWE5EeFNBTWxCWHhEWlZiaTJtMXZadEpYeUliQmcvRjNqYmpsMjRHSDY2aTlwckRNM1RUV2UKK0d1MmxaaUJDcHNtUHJuOFVJNTM1N2tQTlRnVldlT0U2ak1KVDhPQUdzZmdmN01ET1FzZWR0WlVhOERha0gyYwpsWDZrL3FZYWt2enk2bXk4MjRFbU1qd01hK2lxaWNOVTkvNS9CaFlsTmE1MkR2SUU1M3lnR25TSWp5YThCeDFICnZ3SURBUUFCbzJZd1pEQU9CZ05WSFE4QkFmOEVCQU1DQVFZd0VnWURWUjBUQVFIL0JBZ3dCZ0VCL3dJQkFqQWQKQmdOVkhRNEVGZ1FVK3NMYS9ST2V6ZDQ5OXprd09xOVREN2srN2E4d0h3WURWUjBqQkJnd0ZvQVUrc0xhL1JPZQp6ZDQ5OXprd09xOVREN2srN2E4d0RRWUpLb1pJaHZjTkFRRUxCUUFEZ2dFQkFISnFIRkExelNQcnYxVG5HN3pxCkRGdGJRLzRycXZOU3lFYUNsWXRRcmtiZjhCdTBhN21vN0Q4WmlnUE1FcncrWE9HUnJETGZUQ0kvSTNOVG83bWwKQ1JmQXIwU1I0cnZYcyt4bmhYWGgxQnBCSlVMYWM4ZDhFTEtsN1pROFIyWGhtNnV6b2pWYjNLOStlL3NmZ2w2awpWLzJqdFB4aHBZZ0h6N0xDclMyd2xvYU1RWWQyZjVwRDM4TEJQVm5sTXlIYk1qbllBUHZFNGFOWndGdlR2N2R0CndTZ3pQZ1QzS0p3SXJpeDlYQmp4Qk81RkRzdFBQcFJydnY1YUs5TzR0bFVVN01pK2Q1aFJpdThzY1ptTDNLZ1AKdnU3bkJETjM0cHpFT3FuNFBScVZzNHNTMkV4VGNzbFk4NEpRRS8yTkxpT0J2eDJ1QklYU3NIdS9YWnBhaVZVKwpBeFk9Ci0tLS0tRU5EIENFUlRJRklDQVRFLS0tLS0K

server: https://192.168.54.10:6443

name: kubernetes

contexts:

- context:

cluster: kubernetes

user: kube-controller-manager

name: default

current-context: default

kind: Config

preferences: {}

users:

- name: kube-controller-manager

user:

client-certificate-data: LS0tLS1CRUdJTiBDRVJUSUZJQ0FURS0tLS0tCk1JSUQ3ekNDQXRlZ0F3SUJBZ0lVQllDSTVUczQxV0dTditxaWo5T2F5UmNEZ2xVd0RRWUpLb1pJaHZjTkFRRUwKQlFBd1pURUxNQWtHQTFVRUJoTUNRMDR4RURBT0JnTlZCQWdUQjBKbGFXcHBibWN4RURBT0JnTlZCQWNUQjBKbAphV3BwYm1jeEREQUtCZ05WQkFvVEEyczRjekVQTUEwR0ExVUVDeE1HVTNsemRHVnRNUk13RVFZRFZRUURFd3ByCmRXSmxjbTVsZEdWek1CNFhEVEl6TURFd09UQTNNVEV3TUZvWERUTXpNREV3TmpBM01URXdNRm93ZlRFTE1Ba0cKQTFVRUJoTUNRMDR4RURBT0JnTlZCQWdUQjBKbGFVcHBibWN4RURBT0JnTlZCQWNUQjBKbGFVcHBibWN4RnpBVgpCZ05WQkFvVERuTjVjM1JsYlRwdFlYTjBaWEp6TVE4d0RRWURWUVFMRXdaVGVYTjBaVzB4SURBZUJnTlZCQU1UCkYydDFZbVV0WTI5dWRISnZiR3hsY2kxdFlXNWhaMlZ5TUlJQklqQU5CZ2txaGtpRzl3MEJBUUVGQUFPQ0FROEEKTUlJQkNnS0NBUUVBNVdnWTgrVEs0L3FnZnJUVjZzdUZyaHFIaFB3ajBFNmh5c3p0OEcwUnkxSThaTFh1ZDZ4bApLTS9wRit6N1N0UmxsYUI1WEhqbFF1Yi9majNSc2FyQ3ZtaWJJdWdqb0hjTUJPRnEvNFpISUFIY2lyU2M2bmRzCnNEU1hsTW1ZQmJ5VGZJb2lXeS9Ta3MvY2JJL1ViV0Q1bVRGd21UNFRpUDg4TUtPNGpHTEFlazczUndZdUdTWnIKY2JsRllnaGM1ODB3ZWZHcXUwVkRGV3BoVDQyM3pma3RwL1gwbUtqS1NsVzE1VjFTWHI3QSszY2dNbDFEbEdRUApTV3B6TXI3a1EvUDdJUUFsWi9NUWhWSzJwcHhLRkVTNEJiR0pQTGFGZ0ZoYy9rN0hsOElJZ21aZlpGMStMeXJQClVZNDF4QkM4RVpRR3oxQVV3VllHMFdzVDNjRTQ1NUZDSHdJREFRQUJvMzh3ZlRBT0JnTlZIUThCQWY4RUJBTUMKQmFBd0hRWURWUjBsQkJZd0ZBWUlLd1lCQlFVSEF3RUdDQ3NHQVFVRkJ3TUNNQXdHQTFVZEV3RUIvd1FDTUFBdwpIUVlEVlIwT0JCWUVGUGVuSDdZMWwvWkNNMmxsN2dXZWJVMnJuNUdqTUI4R0ExVWRJd1FZTUJhQUZQckMydjBUCm5zM2VQZmM1TURxdlV3KzVQdTJ2TUEwR0NTcUdTSWIzRFFFQkN3VUFBNElCQVFCNm8wYlJUUldDWWlleEVwTzUKNlI3Q3VpQ3EvK05Hamp0VjZ4SFhpWVVXQlF3TGlmWEtmcHNzWHhONXUrSU9uZGQ0d1JvU1BsRHJwWW5BV3FwOApkeHFKVEZtaHNIR2s3dnV1WklPeFkwc0NDcGdYNEpoclV0dkpYSXcyamc1ZTIzUzhtd2JwQ3hvWmxTc0NINURNClZ4UUVIVVRBVWRYTDRoQzRtNk1MSEc5NDFEenZZbDFaSjJBakYrUXZuWm9nM05ITDN5dXJaUVdodUtlaGdoOVMKRDM1T3lpdk53emVKRkpMUjVnSTB6K1hyMmFTejM3ODdKdm41b2xjek5kUS9Ecm9Fcms0WDJIUXY2eFRLUEZZcwpRVVlPbXJhbHhzOW43RFcwekJXTVRVZE55QUxHT21HZUJsZWgyRXNHak0rd3RwNnk1eTZVbk1FNFRLdHBxV1R3CldaOXEKLS0tLS1FTkQgQ0VSVElGSUNBVEUtLS0tLQo=

client-key-data: LS0tLS1CRUdJTiBSU0EgUFJJVkFURSBLRVktLS0tLQpNSUlFb3dJQkFBS0NBUUVBNVdnWTgrVEs0L3FnZnJUVjZzdUZyaHFIaFB3ajBFNmh5c3p0OEcwUnkxSThaTFh1CmQ2eGxLTS9wRit6N1N0UmxsYUI1WEhqbFF1Yi9majNSc2FyQ3ZtaWJJdWdqb0hjTUJPRnEvNFpISUFIY2lyU2MKNm5kc3NEU1hsTW1ZQmJ5VGZJb2lXeS9Ta3MvY2JJL1ViV0Q1bVRGd21UNFRpUDg4TUtPNGpHTEFlazczUndZdQpHU1pyY2JsRllnaGM1ODB3ZWZHcXUwVkRGV3BoVDQyM3pma3RwL1gwbUtqS1NsVzE1VjFTWHI3QSszY2dNbDFECmxHUVBTV3B6TXI3a1EvUDdJUUFsWi9NUWhWSzJwcHhLRkVTNEJiR0pQTGFGZ0ZoYy9rN0hsOElJZ21aZlpGMSsKTHlyUFVZNDF4QkM4RVpRR3oxQVV3VllHMFdzVDNjRTQ1NUZDSHdJREFRQUJBb0lCQVFEVGlYbjQ3REJxcU9EMQo5YXFNSjcvTkc0bDdoMFUvQUVNUXpvZFovRGs4VTBoOVZZWGZ0SWhUYWVSMnUzKzlNTDI3aTQ1ZFJ0MmhJNERVCjJBeFUyREZiZ3ZvSzVpUjBBMUtCN1pyTXBQVlEvbVp2UUx5eE9BNXhMUTNabFVzcGZ3cEEvTjlSVm5mR0NRWW8KMVRmODVEOUVrK0pRYkgxM0JtUnFOWTRuWmFnM0hucTRKdC9UcnJSWk1QeHIzdjNhUDM5ekRvMnhRbkFwMTYwdgpJaWVhQzd0Tnd3VVQrNzZycUVadnhYZGVzczlyL0g2cjFUbGg1SFZnQzhuS24wRzUySHphOHpwUVIrYzFIeTVzCnZlalJpSWkyNVJubWU3VVZzWFJKTTlWaUJlczROb3g3UjR3VkdJRU5OdmxiY3d1RWZXYVREeERBd3V6NkhrcUUKWkZVOVQxQ0JBb0dCQU95cXNxbklWY0REbUs5RlZ2d2Y1M3hQWXV6Zll3TzNnODNmeFZjZ21FbkVOUlRGV1B4SQpXN2ZQbTkyWldRVEFOWGlITm1WNXdxRzdFcXdOUUt2TmlzNUxuUjBqUk5XdGVxa2xWWHBqRGQ2NzVKVy8wcEpaCjlia29yUlh5dGpnVG1aUzdONXl4RXFnRmZsMGNFZGJvM01YcEo1bzJlU3Zvd1dGYVhwMHJvS21sQW9HQkFQZ2wKa2o4QVRONzR5L21PZ3o5VHF3aStRWGlGcXpTSnVHT09Xb3cxRHFicW15MW0vM25MbHJBYS9mUEZTVmdQU1JEQwpDMlVvSUs5OFR3K0J4TzNlRWFUOWxFajdad2ZSZzNNd3RBd0VnaXpjbmxEbjBrZEI0REVLUXVDb2hkUFVaSWMyCmxjQ1EvRFBoTlcrRmNaMXExSHl0aWY3N2tDT2wybWtZK2tHVTRVbHpBb0dBRHpUc28vSW1hR3RvL1NJVWM4RE4KQy9UQjQzeDdEVHNXY2YwRjNoSlBGclpQdnRUcllkSjRhamdoeUx4WXR2QnV2eDdaQk80czdsMXAxcnBIUklMQgpmMzNtUzMvL3BVY3ZVWHovb0F5TFVKdDhGWThzeFpDWU5GeUR1cHhNencrYlY2NHI1WnFQRzFLM0N0NkoydWc5CmYwMzY2SExGbUdldFBVY2tPeThaZEswQ2dZQXM5NEg4OEt6OWF0QnJ0S3VMK2psd0tDbnRFU3ZwSlZ2SWpxOVIKNFB0NnUrREs1WE0rT3VwZmwwU1Z2QmFDWXFLMjZyTHQ3Y3VlZ1VSQ1p4MnNqU1ZkWktaT1kyQlVSbDh2ckkrego3YzA1Ry9HRWI3M25NOFRRbmk5b1RxR1J0VmRTT1U0QnkwUW9rcE1BVm9vMElIdkk3Qm1wbnlTTGtTNTNCUk8wCmRxb3NpUUtCZ0dxNUJvSmx0MkYrRmtHcnV
sR1F2ZTVkc0YyYlZrOW1KQlpLa2tOR0JsUDV2dVZ6ZTBlN1M4N1kKU1VqNTEyMXBvVndSb3BESVpSVGRVUmtsRDRkbmo0bUVLdSs5bDFmWGVvRWhna0tNMjlkdW16SW9oeVBUbGRoMgpXc29MZkowL0EwYUNtWS9RaFZycjJqTnQ0aDdvV3o2OXhCZ0daZ3FEclBwZnR1OGdqNkljCi0tLS0tRU5EIFJTQSBQUklWQVRFIEtFWS0tLS0tCg==

Manage kube-controller-manager with systemd

[root@master k8s]# vim /usr/lib/systemd/system/kube-controller-manager.service

[Unit]

Description=Kubernetes Controller Manager

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=/opt/kubernetes/cfg/kube-controller-manager.conf

ExecStart=/opt/kubernetes/bin/kube-controller-manager $KUBE_CONTROLLER_MANAGER_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target

Start kube-controller-manager and enable it at boot

[root@master k8s]# systemctl start kube-controller-manager

[root@master k8s]# systemctl enable kube-controller-manager

[root@master k8s]# systemctl status kube-controller-manager

● kube-controller-manager.service - Kubernetes Controller Manager

Loaded: loaded (/usr/lib/systemd/system/kube-controller-manager.service; enabled; vendor preset: disabled)

Active: active (running) since Mon 2023-01-09 15:38:05 CST; 1min 0s ago

Docs: https://github.com/kubernetes/kubernetes

Main PID: 6024 (kube-controller)

Tasks: 5

Memory: 34.7M

CGroup: /system.slice/kube-controller-manager.service

└─6024 /opt/kubernetes/bin/kube-controller-manager --logtostderr=false --v=2 --log-dir=/opt/kubernetes/logs --leader-elect=true --kubeconfig=/opt/kube...

Jan 09 15:38:05 master systemd[1]: Started Kubernetes Controller Manager.

Jan 09 15:38:06 master kube-controller-manager[6024]: E0109 15:38:06.281840 6024 core.go:231] failed to start cloud node lifecycle controller: no clou...provided

Jan 09 15:38:16 master kube-controller-manager[6024]: E0109 15:38:16.318733 6024 core.go:91] Failed to start service controller: WARNING: no cloud pro...ill fail

Hint: Some lines were ellipsized, use -l to show in full.

Deploy kube-scheduler

Create the configuration file

[root@master k8s]# vim /opt/kubernetes/cfg/kube-scheduler.conf

KUBE_SCHEDULER_OPTS="--logtostderr=false \

--v=2 \

--log-dir=/opt/kubernetes/logs \

--leader-elect \

--kubeconfig=/opt/kubernetes/cfg/kube-scheduler.kubeconfig \

--bind-address=127.0.0.1"

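• --kubeconfig: kubeconfig file used to connect to the apiserver

• --leader-elect: automatic leader election when several instances of this component run (HA)

Generate the kubeconfig file

Create the kube-scheduler certificate signing request file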

[root@master k8s]# pwd

/root/TLS/k8s

[root@master k8s]# vim kube-scheduler-csr.json

{

"CN": "kube-scheduler",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing",

"O": "system:masters",

"OU": "System"

}

]

}
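The client CSR files for kube-controller-manager and kube-scheduler differ only in the CN field. Kubernetes reads the certificate's CN as the user name and O as the group, so these two values decide the identity the component presents to the apiserver. A small sketch that prints such a file from two arguments follows; the function name client_csr_json is hypothetical, and the remaining fields are copied from the files in this guide.

```shell
# Print a cfssl CSR JSON for a client certificate with the given CN and O.
# Usage: client_csr_json kube-scheduler system:masters > kube-scheduler-csr.json
client_csr_json() {
    cat <<EOF
{
  "CN": "$1",
  "hosts": [],
  "key": {
    "algo": "rsa",
    "size": 2048
  },
  "names": [
    {
      "C": "CN",
      "L": "BeiJing",
      "ST": "BeiJing",
      "O": "$2",
      "OU": "System"
    }
  ]
}
EOF
}
```

The output can be passed to the same cfssl gencert command used in this guide, with -profile=kubernetes.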

Generate the certificate and private key

[root@master k8s]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-scheduler-csr.json | cfssljson -bare kube-scheduler

2023/01/09 15:50:35 [INFO] generate received request

2023/01/09 15:50:35 [INFO] received CSR

2023/01/09 15:50:35 [INFO] generating key: rsa-2048

2023/01/09 15:50:36 [INFO] encoded CSR

2023/01/09 15:50:36 [INFO] signed certificate with serial number 273853252319033525506387231063438124448184112200

2023/01/09 15:50:36 [WARNING] This certificate lacks a "hosts" field. This makes it unsuitable for

websites. For more information see the Baseline Requirements for the Issuance and Management

of Publicly-Trusted Certificates, v.1.1.6, from the CA/Browser Forum (https://cabforum.org);

specifically, section 10.2.3 ("Information Requirements").

[root@master k8s]# ll

-rw-r--r-- 1 root root 1021 Jan 9 15:50 kube-scheduler.csr

-rw-r--r-- 1 root root 296 Jan 9 15:50 kube-scheduler-csr.json

-rw------- 1 root root 1679 Jan 9 15:50 kube-scheduler-key.pem

-rw-r--r-- 1 root root 1411 Jan 9 15:50 kube-scheduler.pem

Generate the kubeconfig file

[root@master k8s]# KUBE_CONFIG="/opt/kubernetes/cfg/kube-scheduler.kubeconfig"

[root@master k8s]# KUBE_APISERVER="https://192.168.54.10:6443"

[root@master k8s]# kubectl config set-cluster kubernetes \

--certificate-authority=/opt/kubernetes/ssl/ca.pem \

--embed-certs=true \

--server=${KUBE_APISERVER} \

--kubeconfig=${KUBE_CONFIG}

Cluster "kubernetes" set.

[root@master k8s]# kubectl config set-credentials kube-scheduler \

--client-certificate=./kube-scheduler.pem \

--client-key=./kube-scheduler-key.pem \

--embed-certs=true \

--kubeconfig=${KUBE_CONFIG}

User "kube-scheduler" set.

[root@master k8s]# kubectl config set-context default \

--cluster=kubernetes \

--user=kube-scheduler \

--kubeconfig=${KUBE_CONFIG}

Context "default" created.

[root@master k8s]# kubectl config use-context default --kubeconfig=${KUBE_CONFIG}

Switched to context "default".

View the generated kubeconfig file

[root@master k8s]# cat ${KUBE_CONFIG}

apiVersion: v1

clusters:

- cluster:

certificate-authority-data: LS0tLS1CRUdJTiBDRVJUSUZJQ0FURS0tLS0tCk1JSUR2akNDQXFhZ0F3SUJBZ0lVWVNlOTJPenNLN0hGaFhxSjJvNWczU2I0d1owd0RRWUpLb1pJaHZjTkFRRUwKQlFBd1pURUxNQWtHQTFVRUJoTUNRMDR4RURBT0JnTlZCQWdUQjBKbGFXcHBibWN4RURBT0JnTlZCQWNUQjBKbAphV3BwYm1jeEREQUtCZ05WQkFvVEEyczRjekVQTUEwR0ExVUVDeE1HVTNsemRHVnRNUk13RVFZRFZRUURFd3ByCmRXSmxjbTVsZEdWek1CNFhEVEl6TURFd09ERTFNamN3TUZvWERUSTRNREV3TnpFMU1qY3dNRm93WlRFTE1Ba0cKQTFVRUJoTUNRMDR4RURBT0JnTlZCQWdUQjBKbGFXcHBibWN4RURBT0JnTlZCQWNUQjBKbGFXcHBibWN4RERBSwpCZ05WQkFvVEEyczRjekVQTUEwR0ExVUVDeE1HVTNsemRHVnRNUk13RVFZRFZRUURFd3ByZFdKbGNtNWxkR1Z6Ck1JSUJJakFOQmdrcWhraUc5dzBCQVFFRkFBT0NBUThBTUlJQkNnS0NBUUVBeFNrWXJaS0NxbkxFN1RlMlFqQkUKRGt4Tk9COHJrQTFUOHdQZGc0TTVxc0lsb090bENaQlpMd1RpV0hoeklHaDhuWExBcWdwYW1samg4SGhCa0ozWAp0eENSdGxJTDNPTkRHM3plVFZ2WVNUclN6aVowc1pzQTlSaVNqSW5MLzNvRHd4TUxnSUdxQmFZUDBkTzUvb2UyCmJmWm1iM1Vka08vWE5EeFNBTWxCWHhEWlZiaTJtMXZadEpYeUliQmcvRjNqYmpsMjRHSDY2aTlwckRNM1RUV2UKK0d1MmxaaUJDcHNtUHJuOFVJNTM1N2tQTlRnVldlT0U2ak1KVDhPQUdzZmdmN01ET1FzZWR0WlVhOERha0gyYwpsWDZrL3FZYWt2enk2bXk4MjRFbU1qd01hK2lxaWNOVTkvNS9CaFlsTmE1MkR2SUU1M3lnR25TSWp5YThCeDFICnZ3SURBUUFCbzJZd1pEQU9CZ05WSFE4QkFmOEVCQU1DQVFZd0VnWURWUjBUQVFIL0JBZ3dCZ0VCL3dJQkFqQWQKQmdOVkhRNEVGZ1FVK3NMYS9ST2V6ZDQ5OXprd09xOVREN2srN2E4d0h3WURWUjBqQkJnd0ZvQVUrc0xhL1JPZQp6ZDQ5OXprd09xOVREN2srN2E4d0RRWUpLb1pJaHZjTkFRRUxCUUFEZ2dFQkFISnFIRkExelNQcnYxVG5HN3pxCkRGdGJRLzRycXZOU3lFYUNsWXRRcmtiZjhCdTBhN21vN0Q4WmlnUE1FcncrWE9HUnJETGZUQ0kvSTNOVG83bWwKQ1JmQXIwU1I0cnZYcyt4bmhYWGgxQnBCSlVMYWM4ZDhFTEtsN1pROFIyWGhtNnV6b2pWYjNLOStlL3NmZ2w2awpWLzJqdFB4aHBZZ0h6N0xDclMyd2xvYU1RWWQyZjVwRDM4TEJQVm5sTXlIYk1qbllBUHZFNGFOWndGdlR2N2R0CndTZ3pQZ1QzS0p3SXJpeDlYQmp4Qk81RkRzdFBQcFJydnY1YUs5TzR0bFVVN01pK2Q1aFJpdThzY1ptTDNLZ1AKdnU3bkJETjM0cHpFT3FuNFBScVZzNHNTMkV4VGNzbFk4NEpRRS8yTkxpT0J2eDJ1QklYU3NIdS9YWnBhaVZVKwpBeFk9Ci0tLS0tRU5EIENFUlRJRklDQVRFLS0tLS0K

server: https://192.168.54.10:6443

name: kubernetes

contexts:

- context:

cluster: kubernetes

user: kube-scheduler

name: default

current-context: default

kind: Config

preferences: {}

users:

- name: kube-scheduler

user:

client-certificate-data: LS0tLS1CRUdJTiBDRVJUSUZJQ0FURS0tLS0tCk1JSUQ1akNDQXM2Z0F3SUJBZ0lVTC9nQkthTnAvWlhvUzFJVzI4RGoyNHhwbEVnd0RRWUpLb1pJaHZjTkFRRUwKQlFBd1pURUxNQWtHQTFVRUJoTUNRMDR4RURBT0JnTlZCQWdUQjBKbGFXcHBibWN4RURBT0JnTlZCQWNUQjBKbAphV3BwYm1jeEREQUtCZ05WQkFvVEEyczRjekVQTUEwR0ExVUVDeE1HVTNsemRHVnRNUk13RVFZRFZRUURFd3ByCmRXSmxjbTVsZEdWek1CNFhEVEl6TURFd09UQTNORFl3TUZvWERUTXpNREV3TmpBM05EWXdNRm93ZERFTE1Ba0cKQTFVRUJoTUNRMDR4RURBT0JnTlZCQWdUQjBKbGFVcHBibWN4RURBT0JnTlZCQWNUQjBKbGFVcHBibWN4RnpBVgpCZ05WQkFvVERuTjVjM1JsYlRwdFlYTjBaWEp6TVE4d0RRWURWUVFMRXdaVGVYTjBaVzB4RnpBVkJnTlZCQU1UCkRtdDFZbVV0YzJOb1pXUjFiR1Z5TUlJQklqQU5CZ2txaGtpRzl3MEJBUUVGQUFPQ0FROEFNSUlCQ2dLQ0FRRUEKeU9aVlFEN1RHcGdCTWxVTUpkMllOREYrekQ0MytWRW9ackxTL0xkcnorUkljZnRNa0RUb1h3YUFhVlF4c3lPKwpVc2FPNTdVMXdOcmtGTXpNU2hkaGlpV0prQUhDdUxqMm81S2tFRFBUSFJZY0lFLzhlVWZMYzlJUkxRVkY4enJVCkVmWEpVcHlNeVkyUDUzUkd1K3ZsZFQwTlA4NS9DdTMzekxvSjg2c3dTeGxJUnJETXJENEloK0RKcVBwRGpzOVcKaHdMUkJ3Qlc3WGxHOFg4RGw1Ymd1TEt5cURJcWNNa08wTUNOeTNTaEdGMVJwS2c4bDhDTWdOZWdkSHlWMTZiRgowNjFncmUwd3hyeXFSVTJIaGZsK2l4QjYxVnN4ZnFoN3QvNExZeVh6VXpDMzN1MXpVMUM4R3VQclVIdzJybEdqCm13b2JrS2NlTFgrVGtmQWdnTkxBY1FJREFRQUJvMzh3ZlRBT0JnTlZIUThCQWY4RUJBTUNCYUF3SFFZRFZSMGwKQkJZd0ZBWUlLd1lCQlFVSEF3RUdDQ3NHQVFVRkJ3TUNNQXdHQTFVZEV3RUIvd1FDTUFBd0hRWURWUjBPQkJZRQpGQzZpNTFTbnZVQVZMRGJERHUyZlFTTEdFWDhsTUI4R0ExVWRJd1FZTUJhQUZQckMydjBUbnMzZVBmYzVNRHF2ClV3KzVQdTJ2TUEwR0NTcUdTSWIzRFFFQkN3VUFBNElCQVFCWXRoeHYvWWcvR25NR1ZJNk1HMjBHMjFQZnAvUmIKSUZpZ2NHWmNnNUJCdGduL2llUFVITlg3d0IwME9DSkg2OUUyUDBZRmFIS2NSa2pWUFZFTExNbFVISUlnRjJSLwplSGVwTzYvYTZYYkVVSlQvY1Q0YlVRaXZoSG9JYzFhcEdCMW82cVFSSkFJMzNqK00rRzg5dUJ5b2NHbStqR0kwCkJsR3pvdHVNRHUzZG1KcmdHallnS3BzYTRMSzJmS1A1NTR1VEh4OHppZGg2QWg0RHA3T3ptNXFHNGowNy9xTC8KTW0ySG10UEp5SEVrdjdoUENTeExKQnNnbGNsRXlWdlZCdFBKeW14TURLWmQrb3R3Y2ZQQ3VHbFpvZjBIdkJLYgpoZS9GWVgrMWFESUswQWlnenMrSG4vblJCdi9vc0xHd0grRTgvU0krTFBBcEZjOTkyRWZOM2kzZQotLS0tLUVORCBDRVJUSUZJQ0FURS0tLS0tCg==

client-key-data: LS0tLS1CRUdJTiBSU0EgUFJJVkFURSBLRVktLS0tLQpNSUlFcEFJQkFBS0NBUUVBeU9aVlFEN1RHcGdCTWxVTUpkMllOREYrekQ0MytWRW9ackxTL0xkcnorUkljZnRNCmtEVG9Yd2FBYVZReHN5TytVc2FPNTdVMXdOcmtGTXpNU2hkaGlpV0prQUhDdUxqMm81S2tFRFBUSFJZY0lFLzgKZVVmTGM5SVJMUVZGOHpyVUVmWEpVcHlNeVkyUDUzUkd1K3ZsZFQwTlA4NS9DdTMzekxvSjg2c3dTeGxJUnJETQpyRDRJaCtESnFQcERqczlXaHdMUkJ3Qlc3WGxHOFg4RGw1Ymd1TEt5cURJcWNNa08wTUNOeTNTaEdGMVJwS2c4Cmw4Q01nTmVnZEh5VjE2YkYwNjFncmUwd3hyeXFSVTJIaGZsK2l4QjYxVnN4ZnFoN3QvNExZeVh6VXpDMzN1MXoKVTFDOEd1UHJVSHcycmxHam13b2JrS2NlTFgrVGtmQWdnTkxBY1FJREFRQUJBb0lCQUF0ekRSSy9RZHEzSlFKUQpWSVBuOEIreFhtK1hjQ3MyVTk0ZWZPWElNazNEemRrcElFRHJzdjZQYVV3WGIwbXRWTkIwM25vWUdyc2wvbSt0CkNFdUVyNXRtN2tNVnhwb3VlR2YwR0lPUDRJMDgwRmVMRjNGMkJRTlJ5b2JOVVNJK2pRMkUrM2RJMHNFOTN5Q3EKd01rKzlYSE1DL0JCL1gySytGOWpqdU9qTXZwa21aTW5ZM25LRVQrYjJDbnZ5SEZIS3VmVmlwVUI5ajJnMjRXRQp0aTJPcUQrR2w4OEU4MkFGaDBkWDN3VEhwS3dIMy9hcG1pTFBNM1pZRnM0Sm1LaVJzMEIwakY3OXlmU2pPTmQwClUyY2x3NTZPbVdLNWp0ckRnUUhjc3JaeG5xS3R2a3FFbWsyVGk1aGtNUit4dWFid1VGSXU4cWl0dDQrNDV1ckQKM0luRE0yMENnWUVBOFFtSDljQ1k4ZUlZcmZBRHpNbDlVcDc2dnROdkZGTlpCbFNsWW92bnF2SGM4NkJOYkt0SgpOVEFuNXc3UmJEcDdJUlJIcXU1OEhEYk9HZ3lad1ZPVDBWZWJrblNkRWpWQnZYY3h0cGNkUmVQb3dkaTJ1N1ZYCkJFWkxXRWs2VnpxV1B6cXpzM0QwU2tuVlRHdkphK0habTF3NC9oR2tMeFZuc2dNczJxZCtoNHNDZ1lFQTFWN3oKNGdNTWNnamlwakNqZ09rZ0d0NFAva2wybEt4cmJuRFV0d2RnclBUbnYrRkF3N3lHOVRDRmtua0N5ajFTelh4VApGSXZGalFuN2RzVVM5M1hjaTc1Y3U0czFVdFh3dHIraWs4TWVwMEYrZjgwM1Rhci9wUCtxa29qZGZDSzRzeWhQCm9GZlp1Ymhsb2RreG96U0VKV2poNnlUc1RJMi9BaU1yT244M04zTUNnWUVBN28wTlJ6Wlc2RVZwUVhRaWZxSUgKYXlhMmFQZmVucElpc0haRHZEVlVrY1dQZEhwNVJneDdocTFqUUhWVTVMVTRPVFBWL2lETEtpMC9hMTUvS1d1cgpCdXVhcDZiTDhVSk9EdEtSbS9FUTRxTytMMk5vN250NVpGeWhvdjNPUkpoU0xML1BLOCtscG9ST0dyVXVncHZpCmZyVVdIcldjOVpCTXNVd2RMMFhIbnlNQ2dZRUFtNkNUTCtGYlhYMS9td25VNS95aHp4YnpBVjBoNFpUVkV3dTMKQ3Z5VmxmRlhhNHZuU2gwakxvbENrN0F4eWNMcXR6Z2IvTnRwcnRKK0dJWHJySlRKMVI5MjBjL2FoOTNGb2ZXcQpwaTNtR01aYmR1bitrV2JNNmRNVTNhWjRMY2ZCZ2VOQUdNcWE0cXhOYkx4WFNSdlAydDFpRXJtdXBMT3FndXVWCjV5Zk01V01DZ1lBcnFGd1dldFZDRFBHRXl
teC9NNWFYajkxS2NzUnJkVmZ1d3FGYm4xS1J0eXlvMm1rOW5qNzYKRGZOcEJJdjljWlNRSUZKRmtZTy9JMk5hNXFLTkVDb3NKVzlSeXkxNFV0U1M5R3kxcUpUMFRwUEdtQ2hRMEJmRQpqSkwwSE9XcFJJUjIrcVJ4RUdHTzEyNGFpaGRSbmxsai83NTVyT1FKeWZwNU4rSUQrc1Z2M3c9PQotLS0tLUVORCBSU0EgUFJJVkFURSBLRVktLS0tLQo=
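The certificate-authority-data, client-certificate-data and client-key-data fields hold base64-encoded PEM. As a minimal sketch (the function name kubeconfig_field is hypothetical, and GNU awk/base64 are assumed), an embedded value can be decoded for inspection like this:

```shell
# Print the base64-decoded value of KEY (for example client-certificate-data)
# from a kubeconfig FILE. Only the first matching key is used.
kubeconfig_field() {
  local file="$1" key="$2"
  awk -v k="${key}:" '$1 == k { print $2; exit }' "$file" | base64 -d
}
```

For example, kubeconfig_field "${KUBE_CONFIG}" client-certificate-data | openssl x509 -noout -subject -enddate prints the subject and expiry date of the embedded client certificate.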

Manage kube-scheduler with systemd

[root@master k8s]# vim /usr/lib/systemd/system/kube-scheduler.service

[Unit]

Description=Kubernetes Scheduler

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=/opt/kubernetes/cfg/kube-scheduler.conf

ExecStart=/opt/kubernetes/bin/kube-scheduler $KUBE_SCHEDULER_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target

Start the service and enable it at boot

[root@master k8s]# systemctl daemon-reload

[root@master k8s]# systemctl start kube-scheduler

[root@master k8s]# systemctl enable kube-scheduler

Created symlink from /etc/systemd/system/multi-user.target.wants/kube-scheduler.service to /usr/lib/systemd/system/kube-scheduler.service.

[root@master k8s]# systemctl status kube-scheduler

● kube-scheduler.service - Kubernetes Scheduler

Loaded: loaded (/usr/lib/systemd/system/kube-scheduler.service; enabled; vendor preset: disabled)

Active: active (running) since Mon 2023-01-09 15:57:39 CST; 11min ago

Docs: https://github.com/kubernetes/kubernetes

Main PID: 13149 (kube-scheduler)

CGroup: /system.slice/kube-scheduler.service

└─13149 /opt/kubernetes/bin/kube-scheduler --logtostderr=false --v=2 --log-dir=/opt/kubernetes/logs --leader-elect --kubeconfig=/opt/kubernetes/cfg/ku...

Jan 09 15:57:39 master systemd[1]: Started Kubernetes Scheduler.
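Instead of reading the status output by eye, a script can poll the unit until it is active. This is only a sketch; the helper name wait_for_unit and the 30 second default are assumptions, not part of the original steps:

```shell
# Wait until a systemd unit reports "active", polling once per second.
# Usage: wait_for_unit kube-scheduler 30
wait_for_unit() {
  local unit="$1" timeout="${2:-30}" waited=0
  until systemctl is-active --quiet "$unit"; do
    if [ "$waited" -ge "$timeout" ]; then
      echo "$unit is not active after ${timeout}s" >&2
      return 1
    fi
    sleep 1
    waited=$((waited + 1))
  done
  echo "$unit is active"
}
```

The same helper can be reused later for kubelet and kube-proxy.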

Check the cluster status

Configure the certificate kubectl uses to connect to the cluster

[root@master k8s]# vim admin-csr.json

{

  "CN": "admin",

  "hosts": [],

  "key": {

    "algo": "rsa",

    "size": 2048

  },

  "names": [

    {

      "C": "CN",

      "L": "BeiJing",

      "ST": "BeiJing",

      "O": "system:masters",

      "OU": "System"

    }

  ]

}

Generate the certificate and private key

[root@master k8s]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes admin-csr.json | cfssljson -bare admin

2023/01/09 16:17:55 [INFO] generate received request

2023/01/09 16:17:55 [INFO] received CSR

2023/01/09 16:17:55 [INFO] generating key: rsa-2048

2023/01/09 16:17:55 [INFO] encoded CSR

2023/01/09 16:17:55 [INFO] signed certificate with serial number 378984454797438508993474395356486252311422627389

2023/01/09 16:17:55 [WARNING] This certificate lacks a "hosts" field. This makes it unsuitable for

websites. For more information see the Baseline Requirements for the Issuance and Management

of Publicly-Trusted Certificates, v.1.1.6, from the CA/Browser Forum (https://cabforum.org);

specifically, section 10.2.3 ("Information Requirements").

[root@master k8s]# ll

total 84

-rw-r--r-- 1 root root 1009 Jan 9 16:17 admin.csr

-rw-r--r-- 1 root root 287 Jan 9 16:15 admin-csr.json

-rw------- 1 root root 1675 Jan 9 16:17 admin-key.pem

-rw-r--r-- 1 root root 1399 Jan 9 16:17 admin.pem

Generate the kubeconfig file

[root@master k8s]# mkdir /root/.kube

[root@master k8s]# KUBE_CONFIG="/root/.kube/config"

[root@master k8s]# KUBE_APISERVER="https://192.168.54.10:6443"

[root@master k8s]# kubectl config set-cluster kubernetes \

--certificate-authority=/opt/kubernetes/ssl/ca.pem \

--embed-certs=true \

--server=${KUBE_APISERVER} \

--kubeconfig=${KUBE_CONFIG}

Cluster "kubernetes" set.

[root@master k8s]# kubectl config set-credentials cluster-admin \

--client-certificate=./admin.pem \

--client-key=./admin-key.pem \

--embed-certs=true \

--kubeconfig=${KUBE_CONFIG}

User "cluster-admin" set.

[root@master k8s]# kubectl config set-context default \

--cluster=kubernetes \

--user=cluster-admin \

--kubeconfig=${KUBE_CONFIG}

Context "default" created.

[root@master k8s]# kubectl config use-context default --kubeconfig=${KUBE_CONFIG}

Switched to context "default".

All components are now running. Use kubectl to check the current status of the cluster components.

[root@master k8s]# kubectl get cs

Warning: v1 ComponentStatus is deprecated in v1.19+

NAME STATUS MESSAGE ERROR

scheduler Healthy ok

controller-manager Healthy ok

etcd-0 Healthy {"health":"true"}
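Because ComponentStatus is deprecated, it can help to reduce this output to a simple pass/fail signal in scripts. A small sketch (the function name unhealthy_components is hypothetical) that reads the table on standard input and prints any component that is not Healthy:

```shell
# Read `kubectl get cs`-style output on stdin and print the name of every
# component whose STATUS column is not "Healthy". The header row is skipped.
unhealthy_components() {
  awk 'NR > 1 && $2 != "Healthy" { print $1 }'
}
```

Typical use is kubectl get cs 2>/dev/null | unhealthy_components, where empty output means all listed components are healthy. kubectl get --raw='/readyz?verbose' is a non-deprecated way to check the apiserver itself.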

Authorize the kubelet-bootstrap user to request certificates

[root@master k8s]# kubectl create clusterrolebinding kubelet-bootstrap \

--clusterrole=system:node-bootstrapper \

--user=kubelet-bootstrap

clusterrolebinding.rbac.authorization.k8s.io/kubelet-bootstrap created

Deploy the Worker Node

The following steps are still performed on the Master Node, which also serves as a Worker Node.

Copy the binaries to the working directory

[root@master ~]# cd kubernetes/server/bin/

[root@master bin]# cp kubelet kube-proxy /opt/kubernetes/bin/

Deploy kubelet

Create the configuration file

[root@master k8s]# vim /opt/kubernetes/cfg/kubelet.conf

KUBELET_OPTS="--logtostderr=false \

--v=2 \

--log-dir=/opt/kubernetes/logs/ \

--hostname-override=k8s-master1 \

--network-plugin=cni \

--kubeconfig=/opt/kubernetes/cfg/kubelet.kubeconfig \

--bootstrap-kubeconfig=/opt/kubernetes/cfg/bootstrap.kubeconfig \

--config=/opt/kubernetes/cfg/kubelet-config.yml \

--cert-dir=/opt/kubernetes/ssl \

--pod-infra-container-image=10.0.54.200/library/pause:3.2"

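• --hostname-override: the node's display name; must be unique within the cluster

• --network-plugin: enables CNI

• --kubeconfig: a path that does not exist yet; the file is generated automatically and later used to connect to the apiserver

• --bootstrap-kubeconfig: used on first start to request a certificate from the apiserver

• --config: the parameter file

• --cert-dir: directory where the kubelet certificates are generated

• --pod-infra-container-image: image of the infrastructure (pause) container that manages the Pod network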

Create the parameter file

[root@master k8s]# vim /opt/kubernetes/cfg/kubelet-config.yml

kind: KubeletConfiguration

apiVersion: kubelet.config.k8s.io/v1beta1

address: 0.0.0.0

port: 10250

readOnlyPort: 10255

cgroupDriver: cgroupfs

clusterDNS:

- 10.0.0.2

clusterDomain: cluster.local

failSwapOn: false

authentication:

  anonymous:

    enabled: false

  webhook:

    cacheTTL: 2m0s

    enabled: true

  x509:

    clientCAFile: /opt/kubernetes/ssl/ca.pem

authorization:

  mode: Webhook

  webhook:

    cacheAuthorizedTTL: 5m0s

    cacheUnauthorizedTTL: 30s

evictionHard:

  imagefs.available: 15%

  memory.available: 100Mi

  nodefs.available: 10%

  nodefs.inodesFree: 5%

maxOpenFiles: 1000000

maxPods: 110
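The cgroupDriver value has to agree with the container runtime, otherwise kubelet fails to start. The sketch below assumes Docker is the runtime (the kubelet unit in this guide starts after docker.service); both function names are hypothetical:

```shell
# Print the cgroupDriver value from a kubelet config file.
kubelet_cgroup_driver() {
  awk '$1 == "cgroupDriver:" { print $2; exit }' "$1"
}

# Succeed only if kubelet and Docker agree on the cgroup driver.
cgroup_drivers_match() {
  local kubelet_driver docker_driver
  kubelet_driver="$(kubelet_cgroup_driver "$1")"
  docker_driver="$(docker info --format '{{.CgroupDriver}}')"
  if [ "$kubelet_driver" = "$docker_driver" ]; then
    echo "cgroup driver: $kubelet_driver"
  else
    echo "mismatch: kubelet=$kubelet_driver docker=$docker_driver" >&2
    return 1
  fi
}
```

Run it as cgroup_drivers_match /opt/kubernetes/cfg/kubelet-config.yml before starting kubelet.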

Generate the bootstrap kubeconfig file

[root@master k8s]# KUBE_CONFIG="/opt/kubernetes/cfg/bootstrap.kubeconfig"

[root@master k8s]# KUBE_APISERVER="https://192.168.54.10:6443"

[root@master k8s]# TOKEN="0a1dfb3a3df8bd803ed28c6dcf3a7354" # must match the token in token.csv

[root@master k8s]# kubectl config set-cluster kubernetes \

--certificate-authority=/opt/kubernetes/ssl/ca.pem \

--embed-certs=true \

--server=${KUBE_APISERVER} \

--kubeconfig=${KUBE_CONFIG}

Cluster "kubernetes" set.

[root@master k8s]# kubectl config set-credentials "kubelet-bootstrap" \

--token=${TOKEN} \

--kubeconfig=${KUBE_CONFIG}

User "kubelet-bootstrap" set.

[root@master k8s]# kubectl config set-context default \

--cluster=kubernetes \

--user="kubelet-bootstrap" \

--kubeconfig=${KUBE_CONFIG}

Context "default" created.

[root@master k8s]# kubectl config use-context default --kubeconfig=${KUBE_CONFIG}

Switched to context "default".
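Since the token has to match token.csv exactly, reading it from the file avoids copy-and-paste mistakes. A minimal sketch, assuming the token is the first comma-separated field on the first line and that the file lives at /opt/kubernetes/cfg/token.csv (the path and the function name are assumptions):

```shell
# Print the bootstrap token, i.e. the first comma-separated field
# on the first line of a token.csv file.
bootstrap_token_from_csv() {
  head -n 1 "$1" | cut -d, -f1
}
```

It could be used as TOKEN="$(bootstrap_token_from_csv /opt/kubernetes/cfg/token.csv)" in place of the hard-coded value.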

Manage kubelet with systemd

[root@master k8s]# vim /usr/lib/systemd/system/kubelet.service

[Unit]

Description=Kubernetes Kubelet

After=docker.service

[Service]

EnvironmentFile=/opt/kubernetes/cfg/kubelet.conf

ExecStart=/opt/kubernetes/bin/kubelet $KUBELET_OPTS

Restart=on-failure

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

Start the service and enable it at boot

[root@master k8s]# systemctl daemon-reload

[root@master k8s]# systemctl start kubelet

[root@master k8s]# systemctl enable kubelet

[root@master bin]# systemctl status kubelet

● kubelet.service - Kubernetes Kubelet

Loaded: loaded (/usr/lib/systemd/system/kubelet.service; enabled; vendor preset: disabled)

Active: active (running) since Mon 2023-01-09 17:47:15 CST; 4s ago

Main PID: 48653 (kubelet)

Tasks: 7

Memory: 45.9M

CGroup: /system.slice/kubelet.service

└─48653 /opt/kubernetes/bin/kubelet --logtostderr=false --v=2 --log-dir=/opt/kubernetes/logs/ --hostname-override=k8s-master1 --network-plugin=cni --kube...

Jan 09 17:47:15 master systemd[1]: Started Kubernetes Kubelet.

Jan 09 17:47:15 master kubelet[48653]: Flag --network-plugin has been deprecated, will be removed along with dockershim.

Jan 09 17:47:15 master kubelet[48653]: Flag --network-plugin has been deprecated, will be removed along with dockershim.

Approve the kubelet certificate request and join the node to the cluster

[root@master bin]# kubectl get csr

NAME AGE SIGNERNAME REQUESTOR REQUESTEDDURATION CONDITION

node-csr-0W0Nd0Tmyj-X4GfWBoruKt4Nxum1guiXS6vgdJ_UKjQ 2m15s kubernetes.io/kube-apiserver-client-kubelet kubelet-bootstrap <none> Pending

[root@master bin]# kubectl certificate approve node-csr-0W0Nd0Tmyj-X4GfWBoruKt4Nxum1guiXS6vgdJ_UKjQ

certificatesigningrequest.certificates.k8s.io/node-csr-0W0Nd0Tmyj-X4GfWBoruKt4Nxum1guiXS6vgdJ_UKjQ approved

[root@master bin]# kubectl get node

NAME STATUS ROLES AGE VERSION

k8s-master1 NotReady <none> 4m19s v1.22.1

Deploy kube-proxy

Create the configuration file

[root@master ~]# vim /opt/kubernetes/cfg/kube-proxy.conf

KUBE_PROXY_OPTS="--logtostderr=false \

--v=2 \

--log-dir=/opt/kubernetes/logs \

--config=/opt/kubernetes/cfg/kube-proxy-config.yml"

Create the parameter file

[root@master ~]# vim /opt/kubernetes/cfg/kube-proxy-config.yml

kind: KubeProxyConfiguration

apiVersion: kubeproxy.config.k8s.io/v1alpha1

bindAddress: 0.0.0.0

metricsBindAddress: 0.0.0.0:10249

clientConnection:

  kubeconfig: /opt/kubernetes/cfg/kube-proxy.kubeconfig

hostnameOverride: k8s-master1

clusterCIDR: 10.244.0.0/16

Generate the kube-proxy.kubeconfig file

Create the kube-proxy certificate signing request file

[root@master ~]# cd ~/TLS/k8s/

[root@master k8s]# vim kube-proxy-csr.json

{

  "CN": "system:kube-proxy",

  "hosts": [],

  "key": {

    "algo": "rsa",

    "size": 2048

  },

  "names": [

    {

      "C": "CN",

      "L": "BeiJing",

      "ST": "BeiJing",

      "O": "k8s",

      "OU": "System"

    }

  ]

}

Generate the certificate and private key

[root@master k8s]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-proxy-csr.json | cfssljson -bare kube-proxy

[root@master k8s]# ll

-rw-r--r-- 1 root root 1009 Jan 9 21:47 kube-proxy.csr

-rw-r--r-- 1 root root 230 Jan 9 21:45 kube-proxy-csr.json

-rw------- 1 root root 1675 Jan 9 21:47 kube-proxy-key.pem

-rw-r--r-- 1 root root 1403 Jan 9 21:47 kube-proxy.pem

Generate the kubeconfig file

[root@master k8s]# KUBE_CONFIG="/opt/kubernetes/cfg/kube-proxy.kubeconfig"

[root@master k8s]# KUBE_APISERVER="https://192.168.54.10:6443"

[root@master k8s]# kubectl config set-cluster kubernetes \

> --certificate-authority=/opt/kubernetes/ssl/ca.pem \

> --embed-certs=true \

> --server=${KUBE_APISERVER} \

> --kubeconfig=${KUBE_CONFIG}

Cluster "kubernetes" set.

[root@master k8s]# kubectl config set-credentials kube-proxy \

> --client-certificate=./kube-proxy.pem \

> --client-key=./kube-proxy-key.pem \

> --embed-certs=true \

> --kubeconfig=${KUBE_CONFIG}

User "kube-proxy" set.

[root@master k8s]# kubectl config set-context default \

> --cluster=kubernetes \

> --user=kube-proxy \

> --kubeconfig=${KUBE_CONFIG}

Context "default" created.

[root@master k8s]# kubectl config use-context default --kubeconfig=${KUBE_CONFIG}
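The same four kubectl config steps are used for every certificate-based kubeconfig in this guide (kube-scheduler, the admin user, kube-proxy). As a sketch, they can be wrapped in one function; the name make_cert_kubeconfig and its argument order are assumptions made for illustration:

```shell
# Build a certificate-based kubeconfig in four steps, mirroring the
# set-cluster / set-credentials / set-context / use-context sequence.
# Arguments: <output file> <apiserver URL> <CA cert> <user> <client cert> <client key>
make_cert_kubeconfig() {
  local out="$1" server="$2" ca="$3" user="$4" cert="$5" key="$6"
  kubectl config set-cluster kubernetes \
    --certificate-authority="$ca" --embed-certs=true \
    --server="$server" --kubeconfig="$out" &&
  kubectl config set-credentials "$user" \
    --client-certificate="$cert" --client-key="$key" \
    --embed-certs=true --kubeconfig="$out" &&
  kubectl config set-context default \
    --cluster=kubernetes --user="$user" --kubeconfig="$out" &&
  kubectl config use-context default --kubeconfig="$out"
}
```

For kube-proxy the call would be make_cert_kubeconfig /opt/kubernetes/cfg/kube-proxy.kubeconfig https://192.168.54.10:6443 /opt/kubernetes/ssl/ca.pem kube-proxy ./kube-proxy.pem ./kube-proxy-key.pem. The chain stops at the first step that fails.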

Manage kube-proxy with systemd

[root@master k8s]# vim /usr/lib/systemd/system/kube-proxy.service

[Unit]

Description=Kubernetes Proxy

After=network.target

[Service]

EnvironmentFile=/opt/kubernetes/cfg/kube-proxy.conf

ExecStart=/opt/kubernetes/bin/kube-proxy $KUBE_PROXY_OPTS

Restart=on-failure

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

Start the service and enable it at boot

[root@master k8s]# systemctl daemon-reload

[root@master k8s]# systemctl start kube-proxy

[root@master k8s]# systemctl enable kube-proxy

Created symlink from /etc/systemd/system/multi-user.target.wants/kube-proxy.service to /usr/lib/systemd/system/kube-proxy.service.

If the following error appears at startup:

unable to load in-cluster configuration, KUBERNETES_SERVICE_HOST and KUBERNETES_SERVICE_PORT must be

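This message means kube-proxy did not load a kubeconfig and fell back to in-cluster configuration. To fix it, check that the parameter file is correctly formatted, then check the kubeconfig file for errors.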

[root@master k8s]# systemctl status kube-proxy

● kube-proxy.service - Kubernetes Proxy

Loaded: loaded (/usr/lib/systemd/system/kube-proxy.service; enabled; vendor preset: disabled)

Active: active (running) since Mon 2023-01-09 22:02:25 CST; 3s ago

Main PID: 17414 (kube-proxy)

Tasks: 6

Memory: 8.6M

CGroup: /system.slice/kube-proxy.service

└─17414 /opt/kubernetes/bin/kube-proxy --logtostderr=false --v=2 --log-dir=/opt/kubernetes/logs --config=/opt/kubernetes/cfg/kube-proxy-config.yml

Jan 09 22:02:25 master systemd[1]: Started Kubernetes Proxy.

Jan 09 22:02:25 master kube-proxy[17414]: E0109 22:02:25.407562 17414 event_broadcaster.go:253] Server rejected event '&v1.Event{TypeMeta:v1.TypeMeta{Kind:"", APIVersion...ation:0, Cre

Hint: Some lines were ellipsized, use -l to show in full.

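Deploy the network component

Calico is a pure Layer 3 data center networking solution and one of the mainstream network options for Kubernetes.

Component manifest:

https://docs.projectcalico.org/manifests/calico.yaml

Create the resources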

[root@master ~]# mkdir /opt/kubernetes/yaml/calico -p

[root@master ~]# cd /opt/kubernetes/yaml/calico

[root@master calico]# curl -L -o calico.yaml https://docs.projectcalico.org/manifests/calico.yaml

Set CALICO_IPV4POOL_CIDR to match the --cluster-cidr configured for kube-controller-manager

[root@master calico]# vim /opt/kubernetes/yaml/calico/calico.yaml

Change

# - name: CALICO_IPV4POOL_CIDR

#   value: "192.168.0.0/16"

to

- name: CALICO_IPV4POOL_CIDR

  value: "10.244.0.0/16"
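The same edit can be scripted with sed. This is a sketch that assumes the downloaded manifest still contains the two commented lines exactly as shown above (a "# - name:" line followed by a "#   value:" line) and that GNU sed is available; the function name set_calico_pool_cidr is hypothetical:

```shell
# Uncomment CALICO_IPV4POOL_CIDR in a Calico manifest and set its value.
# Leading indentation is preserved because only the "# " marker is removed.
set_calico_pool_cidr() {
  local file="$1" cidr="$2"
  sed -i \
    -e 's|# - name: CALICO_IPV4POOL_CIDR|- name: CALICO_IPV4POOL_CIDR|' \
    -e "s|#   value: \"192.168.0.0/16\"|  value: \"${cidr}\"|" \
    "$file"
}
```

For this cluster: set_calico_pool_cidr calico.yaml 10.244.0.0/16. Check the result with grep -A1 CALICO_IPV4POOL_CIDR calico.yaml before creating the resources.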

[root@master calico]# kubectl create -f calico.yaml

poddisruptionbudget.policy/calico-kube-controllers created

serviceaccount/calico-kube-controllers created

serviceaccount/calico-node created

configmap/calico-config created

customresourcedefinition.apiextensions.k8s.io/bgpconfigurations.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/bgppeers.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/blockaffinities.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/caliconodestatuses.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/clusterinformations.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/felixconfigurations.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/globalnetworkpolicies.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/globalnetworksets.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/hostendpoints.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/ipamblocks.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/ipamconfigs.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/ipamhandles.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/ippools.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/ipreservations.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/kubecontrollersconfigurations.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/networkpolicies.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/networksets.crd.projectcalico.org created

clusterrole.rbac.authorization.k8s.io/calico-kube-controllers created

clusterrole.rbac.authorization.k8s.io/calico-node created

clusterrolebinding.rbac.authorization.k8s.io/calico-kube-controllers created

clusterrolebinding.rbac.authorization.k8s.io/calico-node created

daemonset.apps/calico-node created

deployment.apps/calico-kube-controllers created

Check the resource status

[root@master calico]# kubectl get pods -n kube-system

NAME READY STATUS RESTARTS AGE

calico-kube-controllers-846d7f49d8-chvkn 0/1 Pending 0 18s

calico-node-vhfs2 0/1 Init:0/3 0 18s

Authorize the apiserver to access kubelet

Use case: commands such as kubectl logs

Create the resource manifest

[root@master ~]# cd /opt/kubernetes/yaml/

[root@master yaml]# vi apiserver-to-kubelet-rbac.yaml

# Create the ClusterRole

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRole

metadata:

  annotations:

    rbac.authorization.kubernetes.io/autoupdate: "true"

  labels:

    kubernetes.io/bootstrapping: rbac-defaults

  name: system:kube-apiserver-to-kubelet

rules:

  - apiGroups:

      - ""

    resources:

      - nodes/proxy

      - nodes/stats

      - nodes/log

      - nodes/spec

      - nodes/metrics

      - pods/log

    verbs:

      - "*"

---

# Bind the user kubernetes to the system:kube-apiserver-to-kubelet ClusterRole

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:

  name: system:kube-apiserver

  namespace: ""

roleRef:

  apiGroup: rbac.authorization.k8s.io

  kind: ClusterRole

  name: system:kube-apiserver-to-kubelet

subjects:

  - apiGroup: rbac.authorization.k8s.io

    kind: User

    name: kubernetes

Create the resources

[root@master yaml]# kubectl create -f apiserver-to-kubelet-rbac.yaml

clusterrole.rbac.authorization.k8s.io/system:kube-apiserver-to-kubelet created

clusterrolebinding.rbac.authorization.k8s.io/system:kube-apiserver created

Add a new Worker Node

Copy files to the new node

On the Master node, copy the files the Worker Node needs to the new node

[root@master ~]# scp -r /opt/kubernetes root@192.168.54.20:/opt/

[root@master ~]# scp /usr/lib/systemd/system/{kubelet,kube-proxy}.service root@192.168.54.20:/usr/lib/systemd/system/

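Delete the kubelet certificates and kubeconfig file

Both are generated automatically, so the copies from the Master would conflict if left in place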

[root@node ~]# rm /opt/kubernetes/cfg/kubelet.kubeconfig

[root@node ~]# rm -f /opt/kubernetes/ssl/kubelet*

Edit the configuration files on the node

Change the node name

[root@node ~]# vim /opt/kubernetes/cfg/kubelet.conf

--hostname-override=k8s-node1

[root@node ~]# vim /opt/kubernetes/cfg/kube-proxy-config.yml

hostnameOverride: k8s-node1
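When several nodes are added, the two edits above can be applied with sed instead of vim. A sketch assuming GNU sed and the file layout used in this guide; the function name set_node_name is hypothetical:

```shell
# Set the node name in both the kubelet options file and the
# kube-proxy parameter file.
# Arguments: <node name> <kubelet.conf> <kube-proxy-config.yml>
set_node_name() {
  local name="$1" kubelet_conf="$2" proxy_conf="$3"
  sed -i "s|--hostname-override=[^ ]*|--hostname-override=${name}|" "$kubelet_conf"
  sed -i "s|^hostnameOverride:.*|hostnameOverride: ${name}|" "$proxy_conf"
}
```

On the new node this would be set_node_name k8s-node1 /opt/kubernetes/cfg/kubelet.conf /opt/kubernetes/cfg/kube-proxy-config.yml.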

Start kubelet and kube-proxy and enable them at boot

[root@node ~]# systemctl start kubelet kube-proxy

[root@node ~]# systemctl enable kubelet kube-proxy

Created symlink from /etc/systemd/system/multi-user.target.wants/kubelet.service to /usr/lib/systemd/system/kubelet.service.

Created symlink from /etc/systemd/system/multi-user.target.wants/kube-proxy.service to /usr/lib/systemd/system/kube-proxy.service.

[root@node ~]# systemctl status kubelet kube-proxy

● kubelet.service - Kubernetes Kubelet

Loaded: loaded (/usr/lib/systemd/system/kubelet.service; enabled; vendor preset: disabled)

Active: active (running) since Mon 2023-01-09 23:06:19 CST; 12s ago

Main PID: 28497 (kubelet)

CGroup: /system.slice/kubelet.service

└─28497 /opt/kubernetes/bin/kubelet --logtostderr=false --v=2 --log-dir=/opt/kubernetes/logs/ --hostname-override=k8s-node1 --network-plugin=cni --kubeco...

Jan 09 23:06:19 node systemd[1]: Started Kubernetes Kubelet.

Jan 09 23:06:19 node kubelet[28497]: Flag --network-plugin has been deprecated, will be removed along with dockershim.

Jan 09 23:06:19 node kubelet[28497]: Flag --network-plugin has been deprecated, will be removed along with dockershim.

● kube-proxy.service - Kubernetes Proxy

Loaded: loaded (/usr/lib/systemd/system/kube-proxy.service; enabled; vendor preset: disabled)

Active: active (running) since Mon 2023-01-09 23:06:19 CST; 12s ago

Main PID: 28498 (kube-proxy)

CGroup: /system.slice/kube-proxy.service

└─28498 /opt/kubernetes/bin/kube-proxy --logtostderr=false --v=2 --log-dir=/opt/kubernetes/logs --config=/opt/kubernetes/cfg/kube-proxy-config.yml

Jan 09 23:06:19 node systemd[1]: Started Kubernetes Proxy.

On the Master, approve the new node's kubelet certificate request

[root@master ~]# kubectl get csr

NAME AGE SIGNERNAME REQUESTOR REQUESTEDDURATION CONDITION

node-csr-Tb30lfKLEpUMFsJI4PbxppziPUHaTa3fbZ4fV9P6oJU 75s kubernetes.io/kube-apiserver-client-kubelet kubelet-bootstrap <none> Pending

[root@master ~]# kubectl certificate approve node-csr-Tb30lfKLEpUMFsJI4PbxppziPUHaTa3fbZ4fV9P6oJU

certificatesigningrequest.certificates.k8s.io/node-csr-Tb30lfKLEpUMFsJI4PbxppziPUHaTa3fbZ4fV9P6oJU approved
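With more than one new node, copying each CSR name by hand gets tedious. The sketch below approves every request that is still Pending; it relies on CONDITION being the last column of kubectl get csr, and the function names are hypothetical. Approving everything blindly is only reasonable in a lab cluster like this one:

```shell
# List the names of CSRs whose CONDITION column (the last one) is Pending.
pending_csrs() {
  kubectl get csr --no-headers | awk '$NF == "Pending" { print $1 }'
}

# Approve every pending CSR; does nothing when there are none.
approve_pending_csrs() {
  local name
  for name in $(pending_csrs); do
    kubectl certificate approve "$name"
  done
}
```

After running approve_pending_csrs, kubectl get node should list the new node once its kubelet has registered.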

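Deploy Dashboard

Dashboard is a web-based Kubernetes user interface. You can use Dashboard to deploy containerized applications to a Kubernetes cluster, troubleshoot them, and manage cluster resources. It gives an overview of the applications running on the cluster and lets you create or modify individual Kubernetes resources (such as Deployments, Jobs, and DaemonSets). For example, you can scale a Deployment, initiate a rolling update, restart a Pod, or deploy a new application using the deploy wizard.

GitHub: https://github.com/kubernetes/dashboard

Official documentation: https://kubernetes.io/docs/tasks/access-application-cluster/web-ui-dashboard/

Note: each Dashboard release supports specific Kubernetes versions; a mismatched version will produce errors.

Download the Dashboard manifest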

[root@master ~]# cd /opt/kubernetes/yaml/

[root@master yaml]# mkdir dashboard

[root@master yaml]# cd dashboard

[root@master dashboard]# curl -L -o recommended.yaml https://raw.githubusercontent.com/kubernetes/dashboard/v2.2.0/aio/deploy/recommended.yaml

Create the resources

[root@master dashboard]# kubectl create -f recommended.yaml

namespace/kubernetes-dashboard created

serviceaccount/kubernetes-dashboard created

service/kubernetes-dashboard created

secret/kubernetes-dashboard-certs created

secret/kubernetes-dashboard-csrf created

secret/kubernetes-dashboard-key-holder created

configmap/kubernetes-dashboard-settings created

role.rbac.authorization.k8s.io/kubernetes-dashboard created

clusterrole.rbac.authorization.k8s.io/kubernetes-dashboard created

rolebinding.rbac.authorization.k8s.io/kubernetes-dashboard created

clusterrolebinding.rbac.authorization.k8s.io/kubernetes-dashboard created

deployment.apps/kubernetes-dashboard created

service/dashboard-metrics-scraper created

deployment.apps/dashboard-metrics-scraper created

Check the deployment

The deployment succeeded if both pods are Running

[root@master dashboard]# kubectl get pod -n kubernetes-dashboard

NAME READY STATUS RESTARTS AGE

dashboard-metrics-scraper-7c857855d9-wtnnb 1/1 Running 0 2m23s

kubernetes-dashboard-658b66597c-cg5p4 1/1 Running 0 2m23s

Create the manifest for the Dashboard user

[root@master dashboard]# vim dashboard-admin.yaml

---

apiVersion: v1

kind: ServiceAccount

metadata:

  labels:

    k8s-app: kubernetes-dashboard

  name: dashboard-admin

  namespace: kubernetes-dashboard

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:

  name: dashboard-admin-bind-cluster-role

  labels:

    k8s-app: kubernetes-dashboard

roleRef:

  apiGroup: rbac.authorization.k8s.io

  kind: ClusterRole

  name: cluster-admin

subjects:

- kind: ServiceAccount

  name: dashboard-admin

  namespace: kubernetes-dashboard

Create the user

[root@master dashboard]# kubectl apply -f dashboard-admin.yaml

serviceaccount/dashboard-admin created

clusterrolebinding.rbac.authorization.k8s.io/dashboard-admin-bind-cluster-role created

Get the token for logging in to the Dashboard

[root@master dashboard]# kubectl get secrets -n kubernetes-dashboard

NAME TYPE DATA AGE

dashboard-admin-token-gfd2g kubernetes.io/service-account-token 3 9s

default-token-88v4j kubernetes.io/service-account-token 3 10m

kubernetes-dashboard-certs Opaque 0 10m

kubernetes-dashboard-csrf Opaque 1 10m

kubernetes-dashboard-key-holder Opaque 2 10m

kubernetes-dashboard-token-mcm2z kubernetes.io/service-account-token 3 10m

[root@master dashboard]# kubectl describe secrets -n kubernetes-dashboard dashboard-admin-token-gfd2g

Name: dashboard-admin-token-gfd2g

Namespace: kubernetes-dashboard

Labels: <none>

Annotations: kubernetes.io/service-account.name: dashboard-admin

kubernetes.io/service-account.uid: 06d95498-9f06-426c-b45c-f8aa36a88d30

Type: kubernetes.io/service-account-token

Data

====

token: eyJhbGciOiJSUzI1NiIsImtpZCI6IkVRMnZESFdrbGZwOFBhQ19DUEx2aWJpbjd4ZlU2NXY2ckFCWWc4RGZhZmMifQ.eyJpc3MiOiJrdWJlcm5ldGVzL3NlcnZpY2VhY2NvdW50Iiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9uYW1lc3BhY2UiOiJrdWJlcm5ldGVzLWRhc2hib2FyZCIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VjcmV0Lm5hbWUiOiJkYXNoYm9hcmQtYWRtaW4tdG9rZW4tZ2ZkMmciLCJrdWJlcm5ldGVzLmlvL3NlcnZpY2VhY2NvdW50L3NlcnZpY2UtYWNjb3VudC5uYW1lIjoiZGFzaGJvYXJkLWFkbWluIiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9zZXJ2aWNlLWFjY291bnQudWlkIjoiMDZkOTU0OTgtOWYwNi00MjZjLWI0NWMtZjhhYTM2YTg4ZDMwIiwic3ViIjoic3lzdGVtOnNlcnZpY2VhY2NvdW50Omt1YmVybmV0ZXMtZGFzaGJvYXJkOmRhc2hib2FyZC1hZG1pbiJ9.RqEIUKg735hD05MEPPr3R7NBtpZ3M2g68gPrF3uFG6SWYdKzcngpJHxMCu5Z7_85eliRMXTLlztGd30aotKaJk6JdEuBm5-k_Q_PUmJk5vJ7ygeO5Lchz45x7JmJFRqXxYQzw2gwmGu47CRLu5iRgvUOmEeyF_BprD8Gt4nxk01oYZ7b19rMZQ9z5R7dw4x4-b-DTBIhAO8a40rVf1L1nbQkB1GvTakYmKrD0qwPf5CABaIcChsu7WoDAO5n392QvWlP_9qzlB3Awyl2Y6Sk3SGJlLL8VDGMkrxN_vNoALhMpHMfh-4J_Ra-DXehSYJ8f0f5_la40n4zM5mz2OldFg

ca.crt: 1359 bytes

namespace: 20 bytes
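The token can also be fetched in one step without looking up the generated secret name. This sketch assumes the ServiceAccount still gets an auto-created token secret, as the listing above shows for this v1.22 cluster; the function name dashboard_token is hypothetical:

```shell
# Print the login token of a ServiceAccount in the kubernetes-dashboard
# namespace (default: dashboard-admin) by following its first token secret.
dashboard_token() {
  local ns="kubernetes-dashboard" sa="${1:-dashboard-admin}" secret
  secret="$(kubectl -n "$ns" get serviceaccount "$sa" -o jsonpath='{.secrets[0].name}')" || return 1
  kubectl -n "$ns" get secret "$secret" -o jsonpath='{.data.token}' | base64 -d
}
```

The value in .data.token is base64-encoded, which is why it is decoded here, while kubectl describe prints it already decoded.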

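Change the Service type to NodePort

This makes the Dashboard reachable from outside the cluster. The Dashboard Service is named kubernetes-dashboard and lives in the kubernetes-dashboard namespace, so each command needs -n kubernetes-dashboard. The kubernetes Service in the default namespace is the API server and should stay ClusterIP.

[root@master dashboard]# kubectl get svc -n kubernetes-dashboard

[root@master dashboard]# kubectl edit svc kubernetes-dashboard -n kubernetes-dashboard

type: NodePort

Check the exposed port

[root@master dashboard]# kubectl get svc kubernetes-dashboard -n kubernetes-dashboard

The node port is the number after 443: in the PORT(S) column.

Access the Dashboard

https://192.168.54.10:<NodePort>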
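The Service created by recommended.yaml is kubernetes-dashboard in the kubernetes-dashboard namespace. As a non-interactive alternative to kubectl edit, the type can be changed with kubectl patch and the allocated port read back with jsonpath. A sketch, with a hypothetical function name:

```shell
# Switch the Dashboard Service to NodePort without opening an editor,
# then print the node port that was allocated for it.
expose_dashboard() {
  local ns="kubernetes-dashboard" svc="kubernetes-dashboard"
  kubectl -n "$ns" patch svc "$svc" -p '{"spec":{"type":"NodePort"}}' >/dev/null || return 1
  kubectl -n "$ns" get svc "$svc" -o jsonpath='{.spec.ports[0].nodePort}'
}
```

The Dashboard is then reachable at https://<node IP>:<printed port>, for example on 192.168.54.10.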
