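Docker Cross-Server Communication: An Overlay Solution with a Single Consul Instance

Task: containers running on different servers need to be able to call each other.

Approach: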

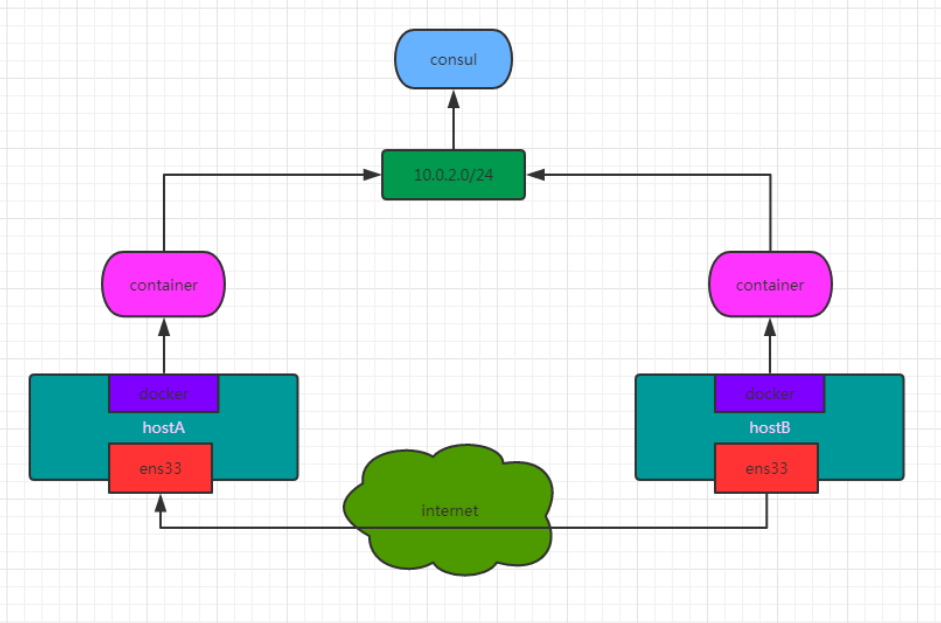

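To build an overlay network, a key-value store is needed to hold the network state, including Network, Endpoint, IP and so on. Consul, Etcd, and ZooKeeper are all key-value stores that Docker supports.

We use Consul here: compared with the other key-value stores, it ships a web UI that makes management easier.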

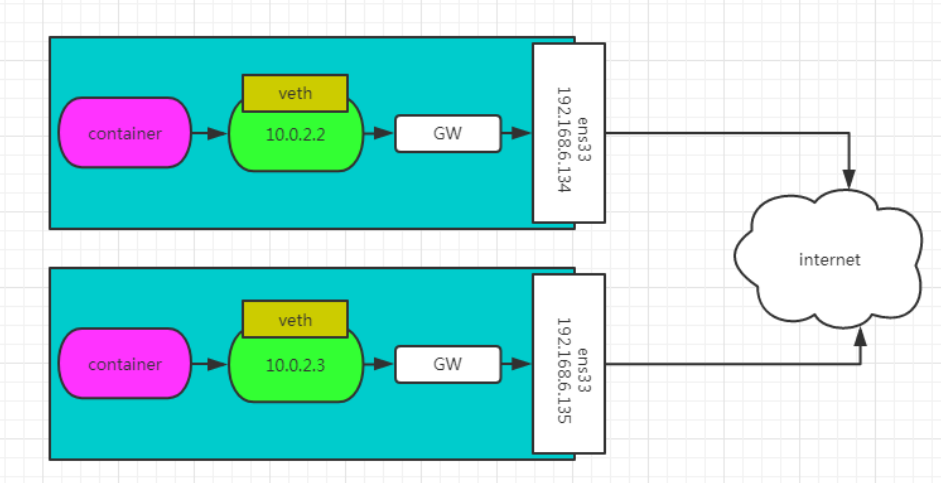

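Each server's Docker daemon registers its own IP in Consul, which lets the hosts share a Docker internal network. The shared network uses the overlay driver, and only containers that run on a registered Docker host and are attached to the same overlay network can talk to each other.

Once the setup is done, containers on different servers that are not attached to the overlay network still cannot ping each other.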

| Server OS | Host IP | Docker version | NIC name |
| --------- | ---------- | ---------- | ------ |
| centos7.9 | 10.0.54.21 | 23.0.3 | ens33 |
| centos7.9 | 10.0.54.22 | 18.09.7 | ens33 |
| centos7.9 | 10.0.54.23 | 18.09.7 | ens33 |

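Notes:

Newer Docker versions do not support cluster-store and cluster-advertise. Downgrade to a suitable version for this experiment; here the two Docker hosts that join the overlay network, 10.0.54.22 and 10.0.54.23, run 18.09.7.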
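Before editing daemon.json it can help to check whether a host's Docker version is old enough. The sketch below is only an illustration: the function name `supports_cluster_store` is made up for this post, and the cutoff at major version 23 is an assumption based on my understanding that these options were deprecated in 20.10 and removed in 23.0.

```shell
#!/bin/sh
# Return 0 if the given Docker version is expected to accept the legacy
# cluster-store / cluster-advertise daemon options, 1 otherwise.
# Assumption: the options are unavailable from major version 23 onwards.
supports_cluster_store() {
    major=${1%%.*}            # "18.09.7" -> "18"
    [ "$major" -lt 23 ]
}

# Usage on a real host (requires a running Docker daemon):
#   v=$(docker version --format '{{.Server.Version}}')
#   supports_cluster_store "$v" && echo "cluster-store usable" || echo "Docker too new"
```

With the versions from the table, 18.09.7 passes the check and 23.0.3 does not.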

# Run on each of the 3 hosts

1. Every host running Docker must have a distinct hostname. Set one per host, for example:

hostnamectl set-hostname docker-zh54-1

hostnamectl set-hostname docker-zh54-2

hostnamectl set-hostname docker-zh54-3

2. Disable SELinux (setenforce 0 switches it to permissive mode until the next reboot)

setenforce 0

3. Disable the firewall

systemctl disable firewalld && systemctl stop firewalld

Pull the Consul image

[root@docker-zh54-1 ~]# docker pull consul:1.5.2

1.5.2: Pulling from library/consul

e7c96db7181b: Pull complete

2967157a1cec: Pull complete

89eac26c7594: Pull complete

fed432a284a5: Pull complete

eff914b7f5d7: Pull complete

0c1d0a78f0c3: Pull complete

Digest: sha256:b31edc821d5e3deae8ce9f9a2070dc3fbaa72f5e1746a85a91ebe551ed8fb17f

Status: Downloaded newer image for consul:1.5.2

docker.io/library/consul:1.5.2

Start the Consul server

[root@docker-zh54-1 ~]# docker run -d --network host -h consul --name consule --restart always -e CONSUL_BIND_INTERFACE=ens33 consul:1.5.2

1b293c1d4f86f16da502487d815584b3e91d4ad994e2d93201f8d2bf0e22ea64

Test: open the following address in a browser; the Consul web UI should load.

10.0.54.21:8500
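The same check can be done from a terminal. This is a minimal sketch that queries Consul's HTTP status endpoint `/v1/status/leader`; the helper names `consul_leader` and `consul_is_ready` are hypothetical, and the default address is the one used in this setup.

```shell
#!/bin/sh
# Print the Raft leader reported by Consul. The endpoint returns a JSON
# string such as "10.0.54.21:8300", or "" when no leader is elected.
# $1 = host:port of the Consul HTTP API (defaults to this post's address).
consul_leader() {
    curl -fsS "http://${1:-10.0.54.21:8500}/v1/status/leader"
}

# Return 0 only if Consul answered and reported a non-empty leader.
consul_is_ready() {
    leader=$(consul_leader "$1") || return 1
    [ -n "$leader" ] && [ "$leader" != '""' ]
}

# Usage:
#   consul_is_ready 10.0.54.21:8500 && echo "Consul is up"
```

A non-zero exit means either the port is unreachable or the single Consul instance has not finished starting.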

Configure the cluster store address on the other two test machines:

[root@docker-zh54-2 ~]# cat /etc/docker/daemon.json

{
  "cluster-store": "consul://10.0.54.21:8500",
  "cluster-advertise": "ens33:2375"
}
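If the file has to be produced on several hosts, it can be generated instead of typed by hand. The following is a sketch only: `write_daemon_json` is a hypothetical helper, it overwrites the target file, and it writes nothing but the two keys shown above.

```shell
#!/bin/sh
# Write a daemon.json containing only the two cluster options.
# $1 = target file, $2 = Consul host:port, $3 = NIC name to advertise
# Caution: any existing content of the target file is replaced.
write_daemon_json() {
    cat > "$1" <<EOF
{
  "cluster-store": "consul://$2",
  "cluster-advertise": "$3:2375"
}
EOF
}

# Usage: generate into a scratch file first, review it, then copy it
# to /etc/docker/daemon.json.
#   write_daemon_json /tmp/daemon.json 10.0.54.21:8500 ens33
```

Writing to a scratch path first avoids clobbering other settings that may already live in /etc/docker/daemon.json.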

[root@docker-zh54-2 ~]# scp /etc/docker/daemon.json root@10.0.54.23:/etc/docker/

[root@docker-zh54-2 ~]# systemctl restart docker

[root@docker-zh54-3 ~]# systemctl restart docker
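After the restart, it is worth confirming that the daemon actually picked up the setting. The sketch below assumes a Docker version that still exposes the `ClusterStore` field in `docker info` (as 18.09 does, to my knowledge); the helper names are hypothetical.

```shell
#!/bin/sh
# Print the cluster store URL the local daemon reports (empty if unset).
cluster_store() {
    docker info --format '{{.ClusterStore}}'
}

# Return 0 if the daemon reports exactly the expected store URL.
# $1 = expected URL, e.g. consul://10.0.54.21:8500
cluster_store_matches() {
    [ "$(cluster_store)" = "$1" ]
}

# Usage on docker-zh54-2 and docker-zh54-3:
#   cluster_store_matches consul://10.0.54.21:8500 || echo "daemon.json not applied"
```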

Test:

1. Create an overlay network

[root@docker-zh54-2 ~]# docker network create --driver overlay zh54

313b6f3af43631a704cfb38847977fcf00603814b33ae0ebd822493b61daad35

[root@docker-zh54-2 ~]# docker network list

NETWORK ID NAME DRIVER SCOPE

6ff8ce0771b7 bridge bridge local

accfd32f1830 host host local

46b450ace1d9 none null local

313b6f3af436 zh54 overlay global

# The zh54 network created on docker-zh54-2 has been synced to docker-zh54-3

[root@docker-zh54-3 ~]# docker network list

NETWORK ID NAME DRIVER SCOPE

477b71be1af9 bridge bridge local

accfd32f1830 host host local

46b450ace1d9 none null local

313b6f3af436 zh54 overlay global
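Comparing the two listings by eye works for one network; a small helper makes the same comparison scriptable. This is a sketch under the assumption that the network is named zh54 as above; `overlay_net_id` is a hypothetical name.

```shell
#!/bin/sh
# Print the ID of a global-scope overlay network with the given name,
# or nothing if the local daemon does not know such a network.
# $1 = network name
overlay_net_id() {
    docker network ls --filter driver=overlay \
        --format '{{.Name}} {{.ID}} {{.Scope}}' |
        awk -v n="$1" '$1 == n && $3 == "global" { print $2 }'
}

# Usage: run on both hosts and compare the printed IDs.
#   overlay_net_id zh54
```

If both hosts print the same ID, they are reading the network definition from the same Consul store.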

2. Start a busybox container on each of the two servers, both attached to the zh54 overlay network created above

[root@docker-zh54-2 ~]# docker run -it --name zh1 --network zh54 busybox

[root@docker-zh54-3 ~]# docker run -it --name zh2 --network zh54 busybox

Test connectivity

[root@docker-zh54-2 ~]# docker exec -it zh1 sh

/ # ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

7: eth0@if8: <BROADCAST,MULTICAST,UP,LOWER_UP,M-DOWN> mtu 1450 qdisc noqueue

link/ether 02:42:0a:00:01:02 brd ff:ff:ff:ff:ff:ff

inet 10.0.1.2/24 brd 10.0.1.255 scope global eth0

valid_lft forever preferred_lft forever

10: eth1@if11: <BROADCAST,MULTICAST,UP,LOWER_UP,M-DOWN> mtu 1500 qdisc noqueue

link/ether 02:42:ac:12:00:02 brd ff:ff:ff:ff:ff:ff

inet 172.18.0.2/16 brd 172.18.255.255 scope global eth1

valid_lft forever preferred_lft forever

/ # ping 10.0.1.3

PING 10.0.1.3 (10.0.1.3): 56 data bytes

64 bytes from 10.0.1.3: seq=0 ttl=64 time=0.409 ms

64 bytes from 10.0.1.3: seq=1 ttl=64 time=1.379 ms

^C

--- 10.0.1.3 ping statistics ---

2 packets transmitted, 2 packets received, 0% packet loss

round-trip min/avg/max = 0.409/0.894/1.379 ms

/ # ping zh2

PING zh2 (10.0.1.3): 56 data bytes

64 bytes from 10.0.1.3: seq=0 ttl=64 time=0.546 ms

64 bytes from 10.0.1.3: seq=1 ttl=64 time=1.956 ms

^C

--- zh2 ping statistics ---

2 packets transmitted, 2 packets received, 0% packet loss

round-trip min/avg/max = 0.546/1.251/1.956 ms

[root@docker-zh54-3 ~]# docker exec -it zh2 sh

/ # ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

7: eth0@if8: <BROADCAST,MULTICAST,UP,LOWER_UP,M-DOWN> mtu 1450 qdisc noqueue

link/ether 02:42:0a:00:01:03 brd ff:ff:ff:ff:ff:ff

inet 10.0.1.3/24 brd 10.0.1.255 scope global eth0

valid_lft forever preferred_lft forever

10: eth1@if11: <BROADCAST,MULTICAST,UP,LOWER_UP,M-DOWN> mtu 1500 qdisc noqueue

link/ether 02:42:ac:12:00:02 brd ff:ff:ff:ff:ff:ff

inet 172.18.0.2/16 brd 172.18.255.255 scope global eth1

valid_lft forever preferred_lft forever

/ # ping 10.0.1.2

PING 10.0.1.2 (10.0.1.2): 56 data bytes

64 bytes from 10.0.1.2: seq=0 ttl=64 time=0.397 ms

^C

--- 10.0.1.2 ping statistics ---

1 packets transmitted, 1 packets received, 0% packet loss

round-trip min/avg/max = 0.397/0.397/0.397 ms

/ # ping zh1

PING zh1 (10.0.1.2): 56 data bytes

64 bytes from 10.0.1.2: seq=0 ttl=64 time=0.378 ms

64 bytes from 10.0.1.2: seq=1 ttl=64 time=2.291 ms

^C

--- zh1 ping statistics ---

2 packets transmitted, 2 packets received, 0% packet loss

round-trip min/avg/max = 0.378/1.334/2.291 ms
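The interactive session above can also be reduced to a single non-interactive check. A minimal sketch, assuming the containers zh1 and zh2 are still running; `can_reach` is a hypothetical helper and relies on the `-c` and `-W` options of the busybox ping.

```shell
#!/bin/sh
# Return 0 if the source container can ping the target.
# $1 = source container name, $2 = target container name or IP
can_reach() {
    docker exec "$1" ping -c 2 -W 2 "$2" >/dev/null 2>&1
}

# Usage:
#   on docker-zh54-2:  can_reach zh1 zh2 && echo "zh1 -> zh2 ok"
#   on docker-zh54-3:  can_reach zh2 zh1 && echo "zh2 -> zh1 ok"
```

Pinging by container name exercises both the overlay data path and name resolution on the zh54 network.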
