基础大全与工具安装

Kubernetes基础概念

1、是什么

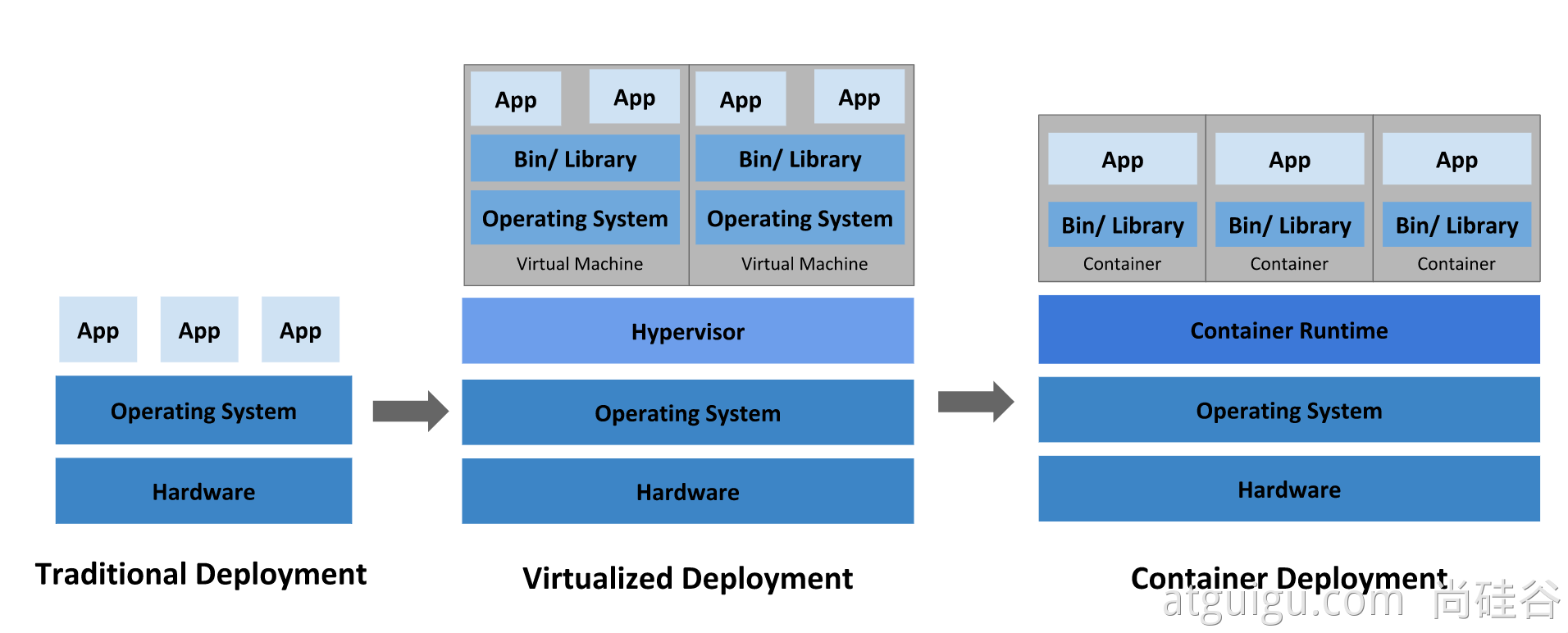

我们急需一个大规模容器编排系统

kubernetes具有以下特性:

- 服务发现和负载均衡

Kubernetes 可以使用 DNS 名称或自己的 IP 地址公开容器,如果进入容器的流量很大, Kubernetes 可以负载均衡并分配网络流量,从而使部署稳定。 - 存储编排

Kubernetes 允许你自动挂载你选择的存储系统,例如本地存储、公共云提供商等。 - 自动部署和回滚

你可以使用 Kubernetes 描述已部署容器的所需状态,它可以以受控的速率将实际状态 更改为期望状态。例如,你可以自动化 Kubernetes 来为你的部署创建新容器, 删除现有容器并将它们的所有资源用于新容器。 - 自动完成装箱计算

Kubernetes 允许你指定每个容器所需 CPU 和内存(RAM)。 当容器指定了资源请求时,Kubernetes 可以做出更好的决策来管理容器的资源。 - 自我修复

Kubernetes 重新启动失败的容器、替换容器、杀死不响应用户定义的 运行状况检查的容器,并且在准备好服务之前不将其通告给客户端。 - 密钥与配置管理

Kubernetes 允许你存储和管理敏感信息,例如密码、OAuth 令牌和 ssh 密钥。 你可以在不重建容器镜像的情况下部署和更新密钥和应用程序配置,也无需在堆栈配置中暴露密钥。

Kubernetes 为你提供了一个可弹性运行分布式系统的框架。 Kubernetes 会满足你的扩展要求、故障转移、部署模式等。 例如,Kubernetes 可以轻松管理系统的 Canary 部署。

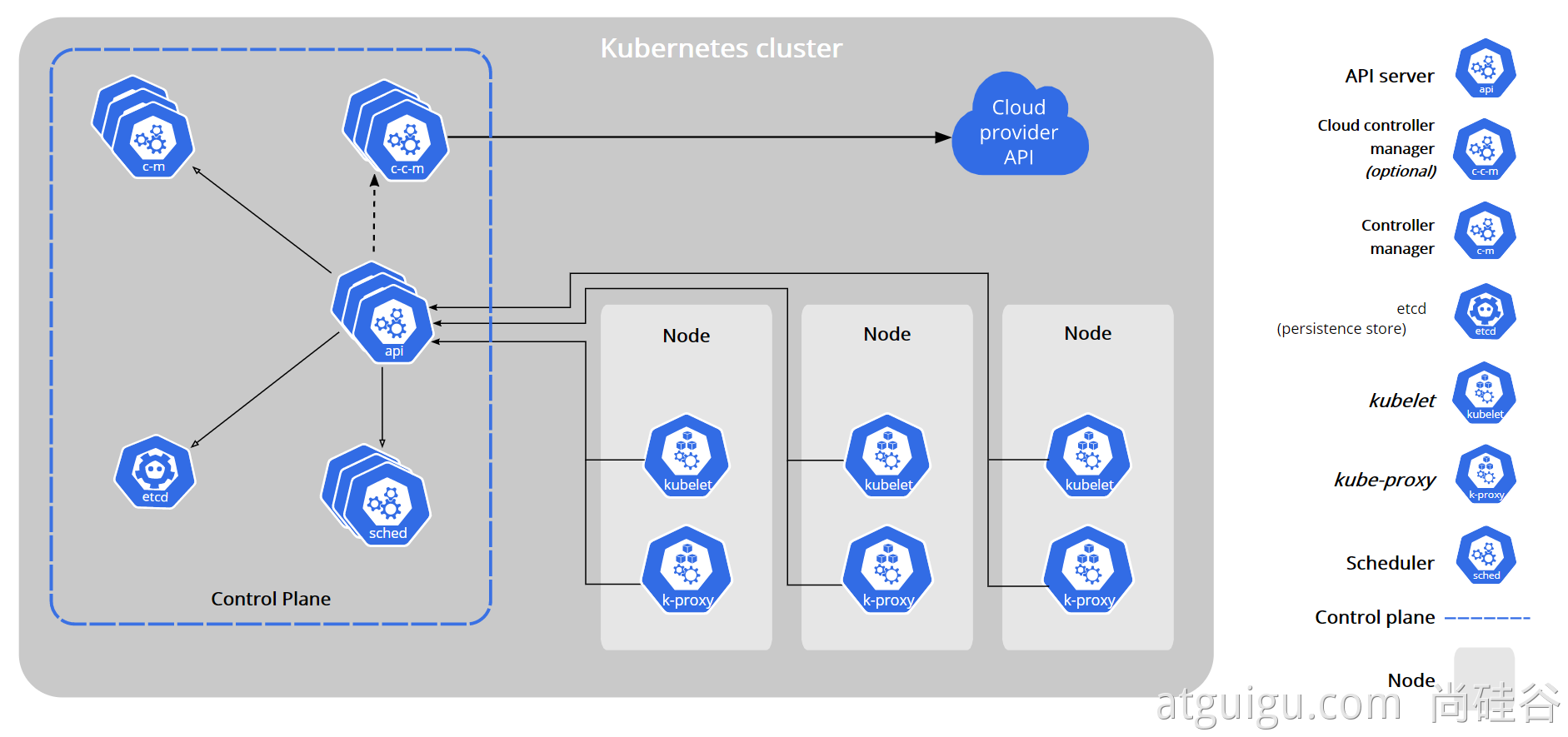

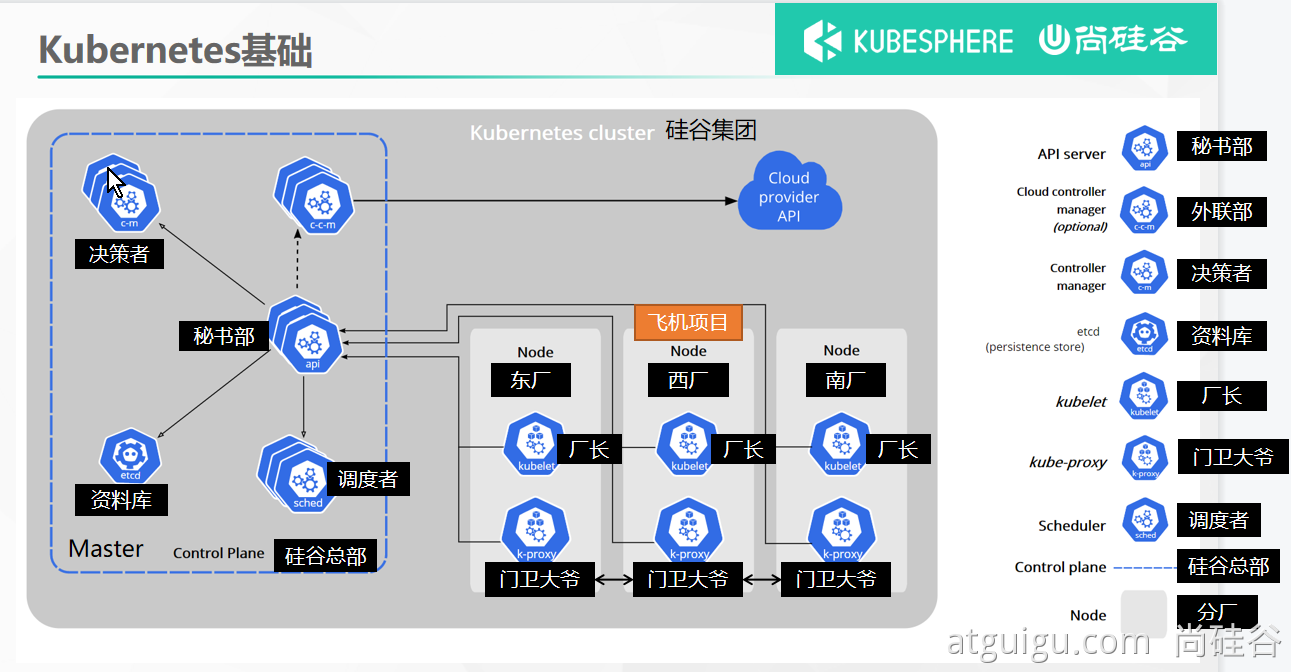

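2. Architecture

1. How It Works

Kubernetes Cluster = N master nodes + N worker nodes, where N >= 1

2. Component Architecture

1. Control Plane Components

The control plane's components make global decisions about the cluster (for example, scheduling), and detect and respond to cluster events (for example, starting a new Pod when a Deployment's replicas field is not satisfied).

Control plane components can run on any machine in the cluster. For simplicity, however, setup scripts typically start all control plane components on the same machine and do not run user containers on it. See "Creating Highly Available Clusters with kubeadm" in the official documentation for an example multi-VM control plane setup.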

kube-apiserver

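The API server is the component of the Kubernetes control plane that exposes the Kubernetes API. It is the front end of the control plane.

The main implementation of the Kubernetes API server is kube-apiserver. It is designed to scale horizontally, that is, by deploying more instances. You can run several instances of kube-apiserver and balance traffic between them.

etcd

etcd is a consistent and highly available key-value store used as the backing store for all Kubernetes cluster data.

The etcd database of your Kubernetes cluster normally needs a backup plan.

For in-depth information about etcd, see the etcd documentation.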

kube-scheduler

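Control plane component that watches for newly created Pods with no assigned node, and selects a node for them to run on.

Factors taken into account for scheduling decisions include individual and collective resource requirements, hardware/software/policy constraints, affinity and anti-affinity specifications, data locality, inter-workload interference, and deadlines.

kube-controller-manager

Control plane component that runs controller processes.

Logically, each controller is a separate process, but to reduce complexity they are all compiled into a single binary and run in a single process.

These controllers include:

- Node controller: responsible for noticing and responding when nodes go down

- Job controller: watches for Job objects that represent one-off tasks, then creates Pods to run those tasks to completion

- Endpoints controller: populates the Endpoints object (that is, joins Services and Pods)

- Service account and token controllers: create default accounts and API access tokens for new namespaces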

cloud-controller-manager

云控制器管理器是指嵌入特定云的控制逻辑的 控制平面组件。 云控制器管理器允许您链接集群到云提供商的应用编程接口中, 并把和该云平台交互的组件与只和您的集群交互的组件分离开。

cloud-controller-manager 仅运行特定于云平台的控制回路。 如果你在自己的环境中运行 Kubernetes,或者在本地计算机中运行学习环境, 所部署的环境中不需要云控制器管理器。

与 kube-controller-manager 类似,cloud-controller-manager 将若干逻辑上独立的 控制回路组合到同一个可执行文件中,供你以同一进程的方式运行。 你可以对其执行水平扩容(运行不止一个副本)以提升性能或者增强容错能力。

下面的控制器都包含对云平台驱动的依赖:

- 节点控制器(Node Controller): 用于在节点终止响应后检查云提供商以确定节点是否已被删除

- 路由控制器(Route Controller): 用于在底层云基础架构中设置路由

- 服务控制器(Service Controller): 用于创建、更新和删除云提供商负载均衡器

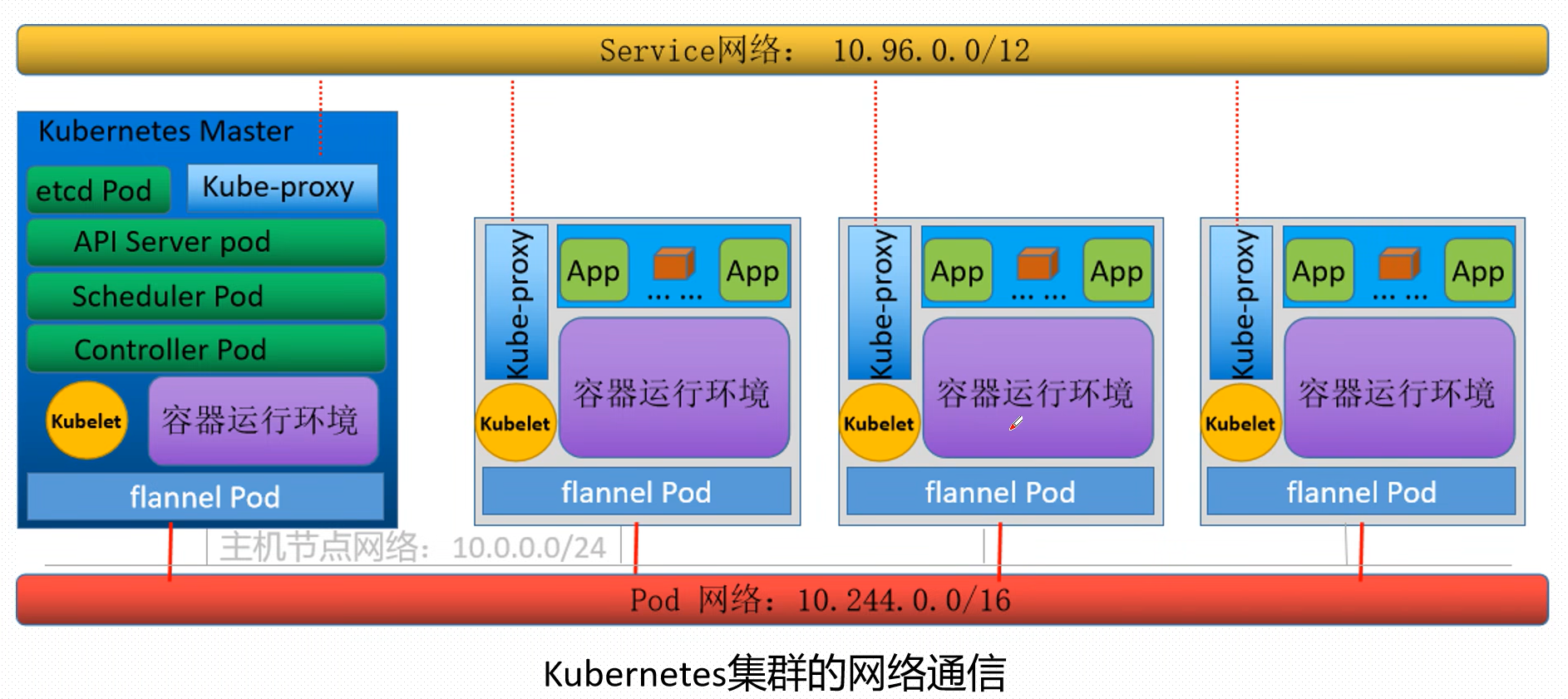

kubernetes集群的网络通讯

2、Node 组件

节点组件在每个节点上运行,维护运行的 Pod 并提供 Kubernetes 运行环境。

kubelet

一个在集群中每个节点(node)上运行的代理。 它保证容器(containers)都 运行在 Pod 中。

kubelet 接收一组通过各类机制提供给它的 PodSpecs,确保这些 PodSpecs 中描述的容器处于运行状态且健康。 kubelet 不会管理不是由 Kubernetes 创建的容器。

kube-proxy 是集群中每个节点上运行的网络代理, 实现 Kubernetes 服务(Service) 概念的一部分。

kube-proxy 维护节点上的网络规则。这些网络规则允许从集群内部或外部的网络会话与 Pod 进行网络通信。

如果操作系统提供了数据包过滤层并可用的话,kube-proxy 会通过它来实现网络规则。否则, kube-proxy 仅转发流量本身。

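3. Kubernetes 1.24.1 Environment Deployment

I. containerd

(Install on all nodes)

Install containerd first

Prerequisite: the Docker yum repository is already configured

# yum install -y containerd.io.x86_64

Back up the original configuration file

# mv /etc/containerd/config.toml{,.bak}

Generate the default configuration file

# containerd config default > /etc/containerd/config.toml

Edit the configuration file

Configure a registry mirror

# vim /etc/containerd/config.toml

Set config_path = "/etc/containerd/registry" as shown below; this is the only line to change, and the path can be customized.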

[plugins."io.containerd.grpc.v1.cri".registry]

config_path = "/etc/containerd/registry"

[plugins."io.containerd.grpc.v1.cri".registry.auths]

[plugins."io.containerd.grpc.v1.cri".registry.configs]

[plugins."io.containerd.grpc.v1.cri".registry.headers]

[plugins."io.containerd.grpc.v1.cri".registry.mirrors]

[plugins."io.containerd.grpc.v1.cri".x509_key_pair_streaming]

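Create the directory

Under the config_path directory, create a docker.io directory, which represents the host namespace being configured, then add a hosts.toml file inside it to configure that host namespace.

# mkdir /etc/containerd/registry/docker.io -p

Create the hosts.toml file

# vim /etc/containerd/registry/docker.io/hosts.toml

Replace https://e0u0eb6u.mirror.aliyuncs.com with your own mirror address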

server = "https://docker.io"

[host."https://e0u0eb6u.mirror.aliyuncs.com"]

capabilities = ["pull", "resolve"]

Add the crictl runtime configuration

# vim /etc/crictl.yaml

runtime-endpoint: unix:///run/containerd/containerd.sock

image-endpoint: unix:///run/containerd/containerd.sock

timeout: 10

debug: false

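Copy the configuration files to the other hosts to avoid repeating the configuration.

Replace 10.0.54.20 with the IP address of each of the other hosts.

# scp /etc/containerd/config.toml root@10.0.54.20:/etc/containerd/config.toml

# scp -r /etc/containerd/registry root@10.0.54.20:/etc/containerd/

# scp /etc/crictl.yaml root@10.0.54.20:/etc/crictl.yaml

Restart containerd

# systemctl restart containerd

Install kubelet, kubeadm and kubectl

# Install on all nodes

# yum install -y kubeadm-1.24.1 kubelet-1.24.1 kubectl-1.24.1

# Start kubelet and enable it at boot

# systemctl start kubelet

# systemctl enable kubelet

Initialize the control-plane node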

[root@master ~]# kubeadm init --apiserver-advertise-address=172.18.54.10 --image-repository=registry.cn-hangzhou.aliyuncs.com/google_containers --kubernetes-version=1.24.1 --pod-network-cidr=10.244.0.0/16 --service-cidr=10.96.0.0/12 --cri-socket=unix:///run/containerd/containerd.sock

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

Join the worker nodes

[root@node1 ~]# kubeadm join 172.18.54.10:6443 --token vejfj1.jw8an4yxpbyp9rux --discovery-token-ca-cert-hash sha256:43a03ff3dda5fda334aed2e9faa827f5947a9e5cbc8f0ceb0bbe71b117045d44 --cri-socket=unix:///run/containerd/containerd.sock
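
If the join command printed by kubeadm init has been lost, the value after `sha256:` can be recomputed from the cluster CA certificate. The sketch below wraps the usual openssl pipeline in a helper; the function name `ca_cert_hash` is made up for this note, and it assumes the default kubeadm CA path and an RSA CA key.

```shell
#!/bin/sh
# ca_cert_hash CERT: print the SHA-256 digest of the certificate's public key
# (DER-encoded SubjectPublicKeyInfo), the value kubeadm expects after "sha256:".
# Hypothetical helper name; assumes the CA uses an RSA key.
ca_cert_hash() {
  openssl x509 -pubkey -noout -in "$1" \
    | openssl rsa -pubin -outform der 2>/dev/null \
    | openssl dgst -sha256 -hex \
    | sed 's/^.* //'
}

# Default kubeadm CA location; only present on a control-plane node.
ca=/etc/kubernetes/pki/ca.crt
if [ -r "$ca" ]; then
  echo "--discovery-token-ca-cert-hash sha256:$(ca_cert_hash "$ca")"
fi
```

On a machine without the CA file the script prints nothing; on the master it prints the flag to paste into kubeadm join.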

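II. Docker

On Kubernetes releases before 1.24, Docker is the default runtime.

III. CRI-O

4. Creating a Cluster with kubeadm

Refer to the earlier Docker installation notes and install Docker on all machines beforehand. Note that Kubernetes 1.24 removed the built-in dockershim, so from 1.24.0 onward Docker Engine is no longer supported directly as a runtime (it needs the external cri-dockerd adapter). The steps below install v1.20.9, which still supports Docker.

1. Install kubeadm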

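- A compatible Linux host. The Kubernetes project provides generic instructions for Linux distributions based on Debian and Red Hat, and for distributions without a package manager.

- 2 GB or more of RAM per machine (any less leaves little room for your applications).

- 2 CPUs or more.

- Full network connectivity between all machines in the cluster (a public or private network is fine).

  - Configure firewall rules to allow the traffic.

- A unique hostname, MAC address and product_uuid for every node. See the kubeadm installation documentation for details.

  - Set a different hostname on each machine.

- Certain ports open on the machines. See the kubeadm installation documentation for details.

  - Make sure the machines can reach each other on the internal network.

- Swap disabled. You must disable swap for the kubelet to work properly.

  - Disable it permanently.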

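1. Base environment

Before installing, one of containerd, CRI-O, or Docker must already be deployed as the container runtime.

Run the following on all machines.

# Set each machine's own hostname

hostnamectl set-hostname xxxx

# Set SELinux to permissive mode (effectively disabling it)

sudo setenforce 0

sudo sed -i 's/^SELINUX=enforcing$/SELINUX=permissive/' /etc/selinux/config

# Disable swap now and keep it disabled after reboot

swapoff -a

sed -ri 's/.*swap.*/#&/' /etc/fstab

# Let iptables see bridged traffic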

cat <<EOF | sudo tee /etc/modules-load.d/k8s.conf

br_netfilter

EOF

cat <<EOF | sudo tee /etc/sysctl.d/k8s.conf

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

EOF

sudo sysctl --system

2. Install kubelet, kubeadm and kubectl

cat <<EOF | sudo tee /etc/yum.repos.d/kubernetes.repo

[kubernetes]

name=Kubernetes

baseurl=http://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64

enabled=1

gpgcheck=0

repo_gpgcheck=0

gpgkey=http://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg

       http://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

exclude=kubelet kubeadm kubectl

EOF

sudo yum install -y kubelet-1.20.9 kubeadm-1.20.9 kubectl-1.20.9 --disableexcludes=kubernetes

sudo systemctl enable --now kubelet

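The kubelet now restarts every few seconds, because it sits in a crash loop waiting for kubeadm to tell it what to do.

2. Bootstrap the cluster with kubeadm

1. Download the images each machine needs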

sudo tee ./images.sh <<-'EOF'

#!/bin/bash

images=(

kube-apiserver:v1.20.9

kube-proxy:v1.20.9

kube-controller-manager:v1.20.9

kube-scheduler:v1.20.9

coredns:1.7.0

etcd:3.4.13-0

pause:3.2

)

for imageName in ${images[@]} ; do

docker pull registry.cn-hangzhou.aliyuncs.com/lfy_k8s_images/$imageName

done

EOF

chmod +x ./images.sh && ./images.sh

# Check that the images have been downloaded

[root@master yum.repos.d]# docker images

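2. Initialize the control-plane node

# On every machine, add hostname mappings; change the IPs below to your own.

# The name passed to --control-plane-endpoint (cluster-endpoint here) must also resolve to the master's IP.

echo "172.18.54.10 master cluster-endpoint" >> /etc/hosts

echo "172.18.54.21 node1" >> /etc/hosts

echo "172.18.54.22 node2" >> /etc/hosts

# Initialize the control-plane node (run on the master only); --apiserver-advertise-address must be the master's own IP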

kubeadm init \

--apiserver-advertise-address=173.54.54.101 \

--control-plane-endpoint=cluster-endpoint \

--image-repository registry.cn-hangzhou.aliyuncs.com/lfy_k8s_images \

--kubernetes-version v1.20.9 \

--service-cidr=10.96.0.0/16 \

--pod-network-cidr=192.168.0.0/16

# None of the network ranges (node network, service CIDR, pod CIDR) may overlap
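
The non-overlap rule can be checked before running kubeadm init. The following is a minimal sketch for IPv4 only; the helper names are invented for this note, and 172.18.54.0/24 is only an assumed node network based on the host IPs used above.

```shell
#!/bin/sh
# Convert a dotted-quad IPv4 address to a 32-bit integer.
ip_to_int() {
  ( IFS=.; set -- $1; echo $(( ($1 << 24) | ($2 << 16) | ($3 << 8) | $4 )) )
}

# Print "start end" (as integers) of an IPv4 CIDR such as 10.96.0.0/16.
cidr_range() {
  ip=${1%/*}; bits=${1#*/}
  mask=$(( (4294967295 << (32 - $bits)) & 4294967295 ))
  start=$(( $(ip_to_int "$ip") & $mask ))
  echo "$start $(( $start | (4294967295 - $mask) ))"
}

# Succeeds (exit status 0) when the two CIDR blocks share at least one address.
cidr_overlap() {
  set -- $(cidr_range "$1") $(cidr_range "$2")
  [ "$1" -le "$4" ] && [ "$3" -le "$2" ]
}

# Service CIDR, pod CIDR and the assumed node network, compared pairwise.
for pair in "10.96.0.0/16 192.168.0.0/16" \
            "172.18.54.0/24 10.96.0.0/16" \
            "172.18.54.0/24 192.168.0.0/16"; do
  set -- $pair
  if cidr_overlap "$1" "$2"; then
    echo "OVERLAP: $1 and $2"
  else
    echo "ok: $1 and $2"
  fi
done
```

Any line starting with OVERLAP means one of the ranges passed to kubeadm init should be changed.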

If the following error is reported:

[init] Using Kubernetes version: v1.20.9

[preflight] Running pre-flight checks

[WARNING SystemVerification]: this Docker version is not on the list of validated versions: 20.10.7. Latest validated version: 19.03

error execution phase preflight: [preflight] Some fatal errors occurred:

[ERROR FileContent--proc-sys-net-bridge-bridge-nf-call-iptables]: /proc/sys/net/bridge/bridge-nf-call-iptables does not exist

[ERROR FileContent--proc-sys-net-ipv4-ip_forward]: /proc/sys/net/ipv4/ip_forward contents are not set to 1

[preflight] If you know what you are doing, you can make a check non-fatal with `--ignore-preflight-errors=...`

To see the stack trace of this error execute with --v=5 or higher

Fix

[root@master ~]# modprobe br_netfilter

[root@master ~]# echo 1 > /proc/sys/net/bridge/bridge-nf-call-iptables

[root@master ~]# echo 1 > /proc/sys/net/ipv4/ip_forward
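
Writing to /proc only lasts until the next reboot. One way to make the settings permanent is to record them in a sysctl.d file; the sketch below does that idempotently. The function name `set_sysctl_conf` is hypothetical, and the key is matched as a regular expression, so the dots in it are matched loosely.

```shell
#!/bin/sh
# set_sysctl_conf FILE KEY VALUE: make FILE contain exactly one "KEY = VALUE" line.
# Hypothetical helper for these notes; KEY is used as a regex, so "." matches any character.
set_sysctl_conf() {
  file=$1; key=$2; value=$3
  touch "$file"
  # Drop any existing line for this key, then append the wanted setting.
  grep -v "^[[:space:]]*$key[[:space:]]*=" "$file" > "$file.tmp" || true
  echo "$key = $value" >> "$file.tmp"
  mv "$file.tmp" "$file"
}

# Usage (as root), followed by a reload of all sysctl files:
#   set_sysctl_conf /etc/sysctl.d/k8s.conf net.ipv4.ip_forward 1
#   set_sysctl_conf /etc/sysctl.d/k8s.conf net.bridge.bridge-nf-call-iptables 1
#   sysctl --system
```

The br_netfilter module is already loaded at boot by the /etc/modules-load.d/k8s.conf file created in the base environment step.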

Kubernetes commands learned so far

# List all nodes in the cluster

[root@master ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

master NotReady control-plane,master 15m v1.20.9

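# Create resources in the cluster from a configuration file

kubectl apply -f xxxx.yaml

# Which applications are deployed in the cluster?

# `docker ps` in Docker corresponds to `kubectl get pods -A` in Kubernetes

# A running application is called a container in Docker and a Pod in Kubernetes

kubectl get pods -A

# Delete the resources (including Pods) defined in a YAML file

kubectl delete -f xxxx.yaml

# Show detailed information about a Pod

[root@master ~]# kubectl describe po -n kubernetes-dashboard kubernetes-dashboard-7b5d774449-m4gr5

# Show a Pod's logs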

[root@master ~]# kubectl logs -f -n kubernetes-dashboard kubernetes-dashboard-7b5d774449-m4gr5

3. Continue as prompted

When the master initializes successfully, it prints the following:

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

Alternatively, if you are the root user, you can run:

export KUBECONFIG=/etc/kubernetes/admin.conf

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

You can now join any number of control-plane nodes by copying certificate authorities

and service account keys on each node and then running the following as root:

kubeadm join cluster-endpoint:6443 --token 501e2b.hxqz026pl6dg9pvg \

--discovery-token-ca-cert-hash sha256:d2bdd6718a10615986d6bc3f82b44ee7e1a18a58e480964d82f7336995011b30 \

--control-plane

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join cluster-endpoint:6443 --token 501e2b.hxqz026pl6dg9pvg \

--discovery-token-ca-cert-hash sha256:d2bdd6718a10615986d6bc3f82b44ee7e1a18a58e480964d82f7336995011b30

1. Set up .kube/config

Without it, kubectl reports the following error:

The connection to the server localhost:8080 was refused - did you specify the right host or port?

[root@master ~]# mkdir -p $HOME/.kube

[root@master ~]# sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

[root@master ~]# sudo chown $(id -u):$(id -g) $HOME/.kube/config

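2. Install the network add-on

error: unable to recognize "calico.yaml": no matches for kind "PodDisruptionBudget" in version "policy/v1"

If applying the manifest fails with the error above, the manifest does not match the cluster version: the policy/v1 PodDisruptionBudget API is only served from Kubernetes 1.21 onward, so a v1.20.9 cluster needs a Calico manifest from a release that supports it, for example: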

[root@master ~]# curl https://docs.projectcalico.org/v3.10/manifests/calico.yaml -O

[root@master ~]# kubectl apply -f calico.yaml

4. Join the worker nodes

kubeadm join cluster-endpoint:6443 --token 501e2b.hxqz026pl6dg9pvg --discovery-token-ca-cert-hash sha256:d2bdd6718a10615986d6bc3f82b44ee7e1a18a58e480964d82f7336995011b30

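Generate a new token (the token printed by kubeadm init expires after 24 hours by default)

kubeadm token create --print-join-command

For a highly available deployment, this is also the step at which additional control-plane nodes are added, using the control-plane join command shown above.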

5. Verify the cluster

- Check the status of the cluster nodes

  - kubectl get nodes
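
Right after kubeadm init the master shows NotReady until the network add-on is running, as in the output listed earlier. A small filter over the `kubectl get nodes` output can make that check scriptable; the function name below is invented for this note, and a node in a state such as `Ready,SchedulingDisabled` is deliberately reported as well.

```shell
#!/bin/sh
# Reads `kubectl get nodes` output on stdin and prints the name of every node
# whose STATUS column is not exactly "Ready". Hypothetical helper name.
not_ready_nodes() {
  awk 'NR > 1 && $2 != "Ready" { print $1 }'
}

# Only query the cluster when kubectl is installed on this machine.
if command -v kubectl >/dev/null 2>&1; then
  kubectl get nodes 2>/dev/null | not_ready_nodes
fi
```

An empty result means every node reports Ready.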

6. Deploy the dashboard

1. Deploy

The web UI officially provided by the Kubernetes project

https://github.com/kubernetes/dashboard

[root@master ~]# curl https://raw.githubusercontent.com/kubernetes/dashboard/v2.5.1/aio/deploy/recommended.yaml -O

[root@master ~]# kubectl apply -f recommended.yaml

# Copyright 2017 The Kubernetes Authors.

#

# Licensed under the Apache License, Version 2.0 (the "License");

# you may not use this file except in compliance with the License.

# You may obtain a copy of the License at

#

# http://www.apache.org/licenses/LICENSE-2.0

#

# Unless required by applicable law or agreed to in writing, software

# distributed under the License is distributed on an "AS IS" BASIS,

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

# See the License for the specific language governing permissions and

# limitations under the License.

apiVersion: v1

kind: Namespace

metadata:

name: kubernetes-dashboard

---

apiVersion: v1

kind: ServiceAccount

metadata:

labels:

k8s-app: kubernetes-dashboard

name: kubernetes-dashboard

namespace: kubernetes-dashboard

---

kind: Service

apiVersion: v1

metadata:

labels:

k8s-app: kubernetes-dashboard

name: kubernetes-dashboard

namespace: kubernetes-dashboard

spec:

ports:

- port: 443

targetPort: 8443

selector:

k8s-app: kubernetes-dashboard

---

apiVersion: v1

kind: Secret

metadata:

labels:

k8s-app: kubernetes-dashboard

name: kubernetes-dashboard-certs

namespace: kubernetes-dashboard

type: Opaque

---

apiVersion: v1

kind: Secret

metadata:

labels:

k8s-app: kubernetes-dashboard

name: kubernetes-dashboard-csrf

namespace: kubernetes-dashboard

type: Opaque

data:

csrf: ""

---

apiVersion: v1

kind: Secret

metadata:

labels:

k8s-app: kubernetes-dashboard

name: kubernetes-dashboard-key-holder

namespace: kubernetes-dashboard

type: Opaque

---

kind: ConfigMap

apiVersion: v1

metadata:

labels:

k8s-app: kubernetes-dashboard

name: kubernetes-dashboard-settings

namespace: kubernetes-dashboard

---

kind: Role

apiVersion: rbac.authorization.k8s.io/v1

metadata:

labels:

k8s-app: kubernetes-dashboard

name: kubernetes-dashboard

namespace: kubernetes-dashboard

rules:

# Allow Dashboard to get, update and delete Dashboard exclusive secrets.

- apiGroups: [""]

resources: ["secrets"]

resourceNames: ["kubernetes-dashboard-key-holder", "kubernetes-dashboard-certs", "kubernetes-dashboard-csrf"]

verbs: ["get", "update", "delete"]

# Allow Dashboard to get and update 'kubernetes-dashboard-settings' config map.

- apiGroups: [""]

resources: ["configmaps"]

resourceNames: ["kubernetes-dashboard-settings"]

verbs: ["get", "update"]

# Allow Dashboard to get metrics.

- apiGroups: [""]

resources: ["services"]

resourceNames: ["heapster", "dashboard-metrics-scraper"]

verbs: ["proxy"]

- apiGroups: [""]

resources: ["services/proxy"]

resourceNames: ["heapster", "http:heapster:", "https:heapster:", "dashboard-metrics-scraper", "http:dashboard-metrics-scraper"]

verbs: ["get"]

---

kind: ClusterRole

apiVersion: rbac.authorization.k8s.io/v1

metadata:

labels:

k8s-app: kubernetes-dashboard

name: kubernetes-dashboard

rules:

# Allow Metrics Scraper to get metrics from the Metrics server

- apiGroups: ["metrics.k8s.io"]

resources: ["pods", "nodes"]

verbs: ["get", "list", "watch"]

---

apiVersion: rbac.authorization.k8s.io/v1

kind: RoleBinding

metadata:

labels:

k8s-app: kubernetes-dashboard

name: kubernetes-dashboard

namespace: kubernetes-dashboard

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: Role

name: kubernetes-dashboard

subjects:

- kind: ServiceAccount

name: kubernetes-dashboard

namespace: kubernetes-dashboard

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:

name: kubernetes-dashboard

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: ClusterRole

name: kubernetes-dashboard

subjects:

- kind: ServiceAccount

name: kubernetes-dashboard

namespace: kubernetes-dashboard

---

kind: Deployment

apiVersion: apps/v1

metadata:

labels:

k8s-app: kubernetes-dashboard

name: kubernetes-dashboard

namespace: kubernetes-dashboard

spec:

replicas: 1

revisionHistoryLimit: 10

selector:

matchLabels:

k8s-app: kubernetes-dashboard

template:

metadata:

labels:

k8s-app: kubernetes-dashboard

spec:

containers:

- name: kubernetes-dashboard

image: kubernetesui/dashboard:v2.3.1

imagePullPolicy: Always

ports:

- containerPort: 8443

protocol: TCP

args:

- --auto-generate-certificates

- --namespace=kubernetes-dashboard

# Uncomment the following line to manually specify Kubernetes API server Host

# If not specified, Dashboard will attempt to auto discover the API server and connect

# to it. Uncomment only if the default does not work.

# - --apiserver-host=http://my-address:port

volumeMounts:

- name: kubernetes-dashboard-certs

mountPath: /certs

# Create on-disk volume to store exec logs

- mountPath: /tmp

name: tmp-volume

livenessProbe:

httpGet:

scheme: HTTPS

path: /

port: 8443

initialDelaySeconds: 30

timeoutSeconds: 30

securityContext:

allowPrivilegeEscalation: false

readOnlyRootFilesystem: true

runAsUser: 1001

runAsGroup: 2001

volumes:

- name: kubernetes-dashboard-certs

secret:

secretName: kubernetes-dashboard-certs

- name: tmp-volume

emptyDir: {}

serviceAccountName: kubernetes-dashboard

nodeSelector:

"kubernetes.io/os": linux

# Comment the following tolerations if Dashboard must not be deployed on master

tolerations:

- key: node-role.kubernetes.io/master

effect: NoSchedule

---

kind: Service

apiVersion: v1

metadata:

labels:

k8s-app: dashboard-metrics-scraper

name: dashboard-metrics-scraper

namespace: kubernetes-dashboard

spec:

ports:

- port: 8000

targetPort: 8000

selector:

k8s-app: dashboard-metrics-scraper

---

kind: Deployment

apiVersion: apps/v1

metadata:

labels:

k8s-app: dashboard-metrics-scraper

name: dashboard-metrics-scraper

namespace: kubernetes-dashboard

spec:

replicas: 1

revisionHistoryLimit: 10

selector:

matchLabels:

k8s-app: dashboard-metrics-scraper

template:

metadata:

labels:

k8s-app: dashboard-metrics-scraper

annotations:

seccomp.security.alpha.kubernetes.io/pod: 'runtime/default'

spec:

containers:

- name: dashboard-metrics-scraper

image: kubernetesui/metrics-scraper:v1.0.6

ports:

- containerPort: 8000

protocol: TCP

livenessProbe:

httpGet:

scheme: HTTP

path: /

port: 8000

initialDelaySeconds: 30

timeoutSeconds: 30

volumeMounts:

- mountPath: /tmp

name: tmp-volume

securityContext:

allowPrivilegeEscalation: false

readOnlyRootFilesystem: true

runAsUser: 1001

runAsGroup: 2001

serviceAccountName: kubernetes-dashboard

nodeSelector:

"kubernetes.io/os": linux

# Comment the following tolerations if Dashboard must not be deployed on master

tolerations:

- key: node-role.kubernetes.io/master

effect: NoSchedule

volumes:

- name: tmp-volume

emptyDir: {}

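2. Set the access port

kubectl edit svc kubernetes-dashboard -n kubernetes-dashboard

Change type: ClusterIP to type: NodePort

kubectl get svc -A |grep kubernetes-dashboard

## Find the NodePort and allow it in the security group

Access: https://<any node IP>:<port>, for example https://173.54.54.201:31067

3. Create an access account

# Create an access account; prepare a YAML file: vi dash.yaml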

apiVersion: v1

kind: ServiceAccount

metadata:

name: admin-user

namespace: kubernetes-dashboard

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:

name: admin-user

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: ClusterRole

name: cluster-admin

subjects:

- kind: ServiceAccount

name: admin-user

namespace: kubernetes-dashboard

kubectl apply -f dash.yaml

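4. Log in with a token

# Get the access token (on Kubernetes 1.24 and later no token Secret is created automatically for a ServiceAccount; run `kubectl -n kubernetes-dashboard create token admin-user` there instead)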

kubectl -n kubernetes-dashboard get secret $(kubectl -n kubernetes-dashboard get sa/admin-user -o jsonpath="{.secrets[0].name}") -o go-template="{{.data.token | base64decode}}"

eyJhbGciOiJSUzI1NiIsImtpZCI6Im5yenRKa1ZUOGhaMGZfWngtSXl0bDhiZzRiRWR2TjVDXy1mbHI4OFN4ZlUifQ.eyJpc3MiOiJrdWJlcm5ldGVzL3NlcnZpY2VhY2NvdW50Iiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9uYW1lc3BhY2UiOiJrdWJlcm5ldGVzLWRhc2hib2FyZCIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VjcmV0Lm5hbWUiOiJhZG1pbi11c2VyLXRva2VuLXBmbGNtIiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9zZXJ2aWNlLWFjY291bnQubmFtZSI6ImFkbWluLXVzZXIiLCJrdWJlcm5ldGVzLmlvL3NlcnZpY2VhY2NvdW50L3NlcnZpY2UtYWNjb3VudC51aWQiOiI3OTE1ZjAwOS1jYzE3LTQ1YzYtODkwMC0yMDA5MDkzNGMxMzciLCJzdWIiOiJzeXN0ZW06c2VydmljZWFjY291bnQ6a3ViZXJuZXRlcy1kYXNoYm9hcmQ6YWRtaW4tdXNlciJ9.kF3-KkKDhTI3laaaU3xUj4WL-GfIc0w9HGzlW0GC-kK95HvnXMcB-yf9__otcP6DAZqjQ2vlo4Ibhn3QBLOZS2YuAp2pxlCVxOqTre02ZIiOsVf1d7F7aLrBFdt3MHtws6M1zrSDG4RhXR_7ipv9fprb4C7AqBXOWuPWmdo3ubGpzOS3OFZjmSCIeFW_Wv5NW9wwdwwgI-zIWf3vXW4Oku9m_cBRstnp4zsXmmgXDhm2B-LlTQOi160XbmRaJ4XtrA0aScRzh3pfTfjI5520aGXoNYh-7t2MpRnbd7atr-9nvKLBMoRYjIR7E8amsJ0cnlsMchYyQ-fbIbpzwShDJg

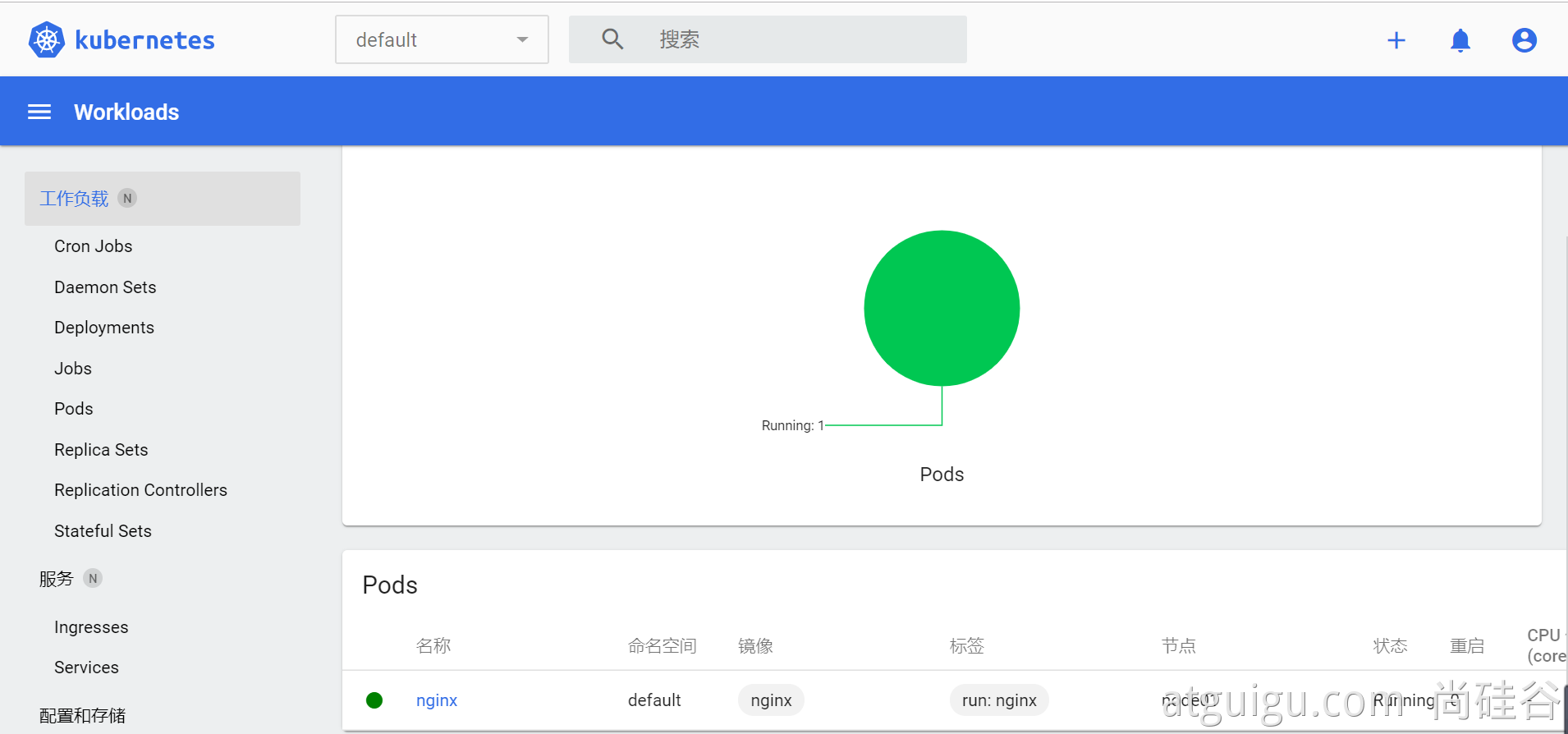

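5. UI

ctr and crictl

Configuring and using crictl

crictl is a command-line client for CRI-compatible container runtimes. You can use it to inspect and debug container runtimes and applications on a Kubernetes node. Because it was built for Kubernetes talking to containerd through the CRI (it is mainly a debugging tool), containers that were not created by Kubernetes cannot be seen or debugged with crictl.

crictl is already present on every node after the Kubernetes installation. Run: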

crictl images

IMAGE TAG IMAGE ID SIZE

docker.io/calico/cni v3.25.1 a0138614e6094 89.9MB

docker.io/calico/kube-controllers v3.25.1 212faac284a2e 31.9MB

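The images used by crictl and the kubelet live in the containerd namespace k8s.io.

crictl only sees that one namespace, so k8s.io is also where it pulls images by default.

If an image is pulled with ctr without placing it in the k8s.io namespace, crictl cannot see the local copy.

Configuring and using ctr

ctr is the command-line tool bundled with containerd. Three namespaces are commonly seen: default, k8s.io and moby. ctr uses default unless told otherwise.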

# ctr ns ls

NAME LABELS

k8s.io

Specify the namespace when pulling an image

ctr -n k8s.io images pull docker.io/library/mysql:latest

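When the k8s.io namespace is specified, Kubernetes and crictl can also see the image once the pull completes.

Command auto-completion for kubectl: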

yum install -y bash-completion

source /usr/share/bash-completion/bash_completion

source <(kubectl completion bash)

echo "source <(kubectl completion bash)" >> ~/.bashrc

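Summary:

crictl works on the Kubernetes side only and has no effect on other containers; it is mainly used for debugging Kubernetes.

ctr is the tool bundled with containerd; it does not use the registry mirror configuration set up for containerd.

nerdctl is a containerd sub-project client; it does use the mirror configuration, so image pulls are faster.

Kubernetes Core Hands-On

1. Ways to create resources

- Command line

- YAML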

apiVersion: v1

kind: Service

metadata:

name: zabbix-web

labels:

app: zabbix-web

spec:

type: NodePort

ports:

- port: 8080

targetPort: 80

selector:

app: zabbix-web

---

apiVersion: apps/v1

kind: Deployment

metadata:

name: zabbix-web

labels:

app: zabbix-web

spec:

selector:

matchLabels:

app: zabbix-web

replicas: 1

template:

metadata:

name: zabbix-web-pod

labels:

app: zabbix-web

spec:

containers:

- name: zabbix-web-pod

image: zabbix/zabbix-web-nginx-mysql:latest

ports:

- containerPort: 80

env:

- name: MYSQL_DATABASE

value: 'zabbix'

- name: DB_SERVER_HOST

value: '10.244.2.45'

- name: MYSQL_USER

value: 'zabbix'

- name: MYSQL_PASSWORD

value: 'zabbix'

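2. Namespace

Introduction

Namespaces isolate names only; networking is not isolated, so Pods in different namespaces can still reach each other by IP.

Isolation of resource objects: Service, Deployment, Pod

Isolation of resource quotas: CPU, memory

Namespaces are used to isolate resources

kubectl create ns hello # create a namespace

kubectl delete ns hello # delete a namespace

Create a namespace from a YAML file

[root@master ~]# vi hello.yaml

# Namespace manifest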

apiVersion: v1

kind: Namespace

metadata:

name: hello

Make the current client use a specific namespace by default

Create a namespace named haojue

[root@master ~]# kubectl create ns haojue

Back up the original config

[root@master ~]# cp .kube/config{,.bak}

Define a context

[root@master ~]# kubectl config set-context ctx-haojue \

--cluster=kubernetes \

--user=kubernetes-admin \

--namespace=haojue \

--kubeconfig=/root/.kube/config

Switch to that context as the default

[root@master ~]# kubectl config use-context ctx-haojue --kubeconfig=/root/.kube/config

Check the result

kubectl now returns resources from the haojue namespace by default

[root@master ~]# kubectl get pod

No resources found in haojue namespace.

Resources

LimitRange

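A LimitRange constrains, within a namespace, the minimum and maximum compute resources and the limit/request ratio of a Pod. For each container it also sets the default requests and limits, plus the minimum, maximum and ratio.

If a LimitRange exists and a Pod is created without compute resources specified for its containers, the default values from the LimitRange are added to the Pod automatically.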

apiVersion: v1

kind: LimitRange

metadata:

name: test-limits

spec:

limits:

- max:

cpu: 4000m

memory: 2Gi

min:

cpu: 100m

memory: 100Mi

maxLimitRequestRatio:

cpu: 3

memory: 2

type: Pod

- default:

cpu: 300m

memory: 200Mi

defaultRequest:

cpu: 200m

memory: 100Mi

max:

cpu: 2000m

memory: 2Gi

min:

cpu: 100m

memory: 100Mi

maxLimitRequestRatio:

cpu: 5

memory: 4

type: Container

[root@master resource]# kubectl apply -f test-Limits.yaml -n test

limitrange/test-limits created

[root@master resource]# kubectl get LimitRange -n test

NAME CREATED AT

test-limits 2023-03-06T11:41:07Z

[root@master resource]# kubectl describe limitrange test-limits -n test

Name: test-limits

Namespace: test

Type Resource Min Max Default Request Default Limit Max Limit/Request Ratio

---- -------- --- --- --------------- ------------- -----------------------

Pod cpu 100m 4 - - 3

Pod memory 100Mi 2Gi - - 2

Container cpu 100m 2 200m 300m 5

Container memory 100Mi 2Gi 100Mi 200Mi 4
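
The Max Limit/Request Ratio column means that a container's limit may be at most that many times its request. A minimal sketch of the check for CPU follows; the function names are invented here, and only whole cores ("2") or millicores ("300m") are handled, not fractional values such as "0.5".

```shell
#!/bin/sh
# Convert a CPU quantity ("300m" or "2") to millicores.
cpu_to_milli() {
  case $1 in
    *m) echo "${1%m}" ;;
    *)  echo $(( $1 * 1000 )) ;;
  esac
}

# ratio_allowed REQUEST LIMIT MAX_RATIO: succeeds when LIMIT / REQUEST <= MAX_RATIO.
ratio_allowed() {
  req=$(cpu_to_milli "$1"); lim=$(cpu_to_milli "$2")
  [ "$lim" -le $(( $req * $3 )) ]
}

# Container CPU ratio in test-limits is 5: the defaults (200m request, 300m limit) pass,
# while a 100m request with a 600m limit would be rejected.
ratio_allowed 200m 300m 5 && echo "200m/300m: allowed"
ratio_allowed 100m 600m 5 || echo "100m/600m: rejected"
```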

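ResourceQuota

Resource quota

Limits the number of Kubernetes objects and the total compute resources that can be used in the namespace it is created in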

apiVersion: v1

kind: ResourceQuota

metadata:

name: object-counts

spec:

hard:

pods: 4 # at most 4 Pods may be created

requests.cpu: 2000m # total CPU requests may not exceed 2000m

requests.memory: 4Gi # total memory requests may not exceed 4Gi

limits.cpu: 4000m # total CPU limits may not exceed 4000m

limits.memory: 8Gi # total memory limits may not exceed 8Gi

configmaps: 10 # at most 10 ConfigMaps may be created

persistentvolumeclaims: 4 # at most 4 PersistentVolumeClaims may be created

replicationcontrollers: 20 # at most 20 ReplicationControllers may be created

secrets: 10 # at most 10 Secrets may be created

services: 10 # at most 10 Services may be created

# and so on

[root@master resource]# kubectl apply -f object-counts.yaml -n test

resourcequota/object-counts created

[root@master resource]# kubectl get quota -n test

NAME AGE REQUEST LIMIT

object-counts 13s configmaps: 2/10, persistentvolumeclaims: 0/4, pods: 0/4, replicationcontrollers: 0/20, requests.cpu: 0/2, requests.memory: 0/4Gi, secrets: 1/10, services: 0/10 limits.cpu: 0/4, limits.memory: 0/8Gi

[root@master resource]# kubectl describe quota -n test object-counts

Name: object-counts

Namespace: test

Resource Used Hard

-------- ---- ----

configmaps 2 10

limits.cpu 0 4

limits.memory 0 8Gi

persistentvolumeclaims 0 4

pods 0 4

replicationcontrollers 0 20

requests.cpu 0 2

requests.memory 0 4Gi

secrets 1 10

services 0 10

[root@master resource]# kubectl apply -f pod-test.yaml -n test

pod/mynginx created

# The description now shows that one Pod has been started

[root@master resource]# kubectl describe quota -n test object-counts

Name: object-counts

Namespace: test

Resource Used Hard

-------- ---- ----

configmaps 2 10

limits.cpu 100m 4

limits.memory 100000000 8Gi

persistentvolumeclaims 0 4

pods 1 4

replicationcontrollers 0 20

requests.cpu 100m 2

requests.memory 100000000 4Gi

secrets 1 10

services 0 10

pod-test.yaml

apiVersion: v1

kind: Pod

metadata:

labels:

run: mynginx

name: mynginx

spec:

containers:

- image: nginx

name: mynginx

resources:

requests:

memory: 100M

cpu: 100m

limits:

memory: 100M

cpu: 100m
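
The quota output above shows 100000000 for memory because pod-test.yaml uses the decimal suffix M, whereas the quota itself uses binary suffixes such as Gi. The sketch below converts the common suffixes to bytes to make the difference visible; `mem_to_bytes` is a made-up helper that only handles whole-number quantities.

```shell
#!/bin/sh
# mem_to_bytes QUANTITY: convert a Kubernetes memory quantity to bytes.
# Binary suffixes (Ki, Mi, Gi) are powers of 1024; decimal ones (k, M, G) are powers of 1000.
mem_to_bytes() {
  case $1 in
    *Ki) echo $(( ${1%Ki} * 1024 )) ;;
    *Mi) echo $(( ${1%Mi} * 1024 * 1024 )) ;;
    *Gi) echo $(( ${1%Gi} * 1024 * 1024 * 1024 )) ;;
    *k)  echo $(( ${1%k} * 1000 )) ;;
    *M)  echo $(( ${1%M} * 1000000 )) ;;
    *G)  echo $(( ${1%G} * 1000000000 )) ;;
    *)   echo "$1" ;;
  esac
}

echo "100M  = $(mem_to_bytes 100M) bytes"
echo "100Mi = $(mem_to_bytes 100Mi) bytes"
```

Writing 100Mi in the Pod spec would make the quota report the usage as 100Mi instead.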

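Pod Eviction

Common eviction settings (kubelet flags)

--eviction-soft=memory.available<1.5Gi

--eviction-soft-grace-period=memory.available=1m30s

--eviction-hard=memory.available<100Mi,nodefs.available<1Gi,nodefs.inodesFree<5%

A soft threshold triggers eviction only after it has been exceeded for the whole grace period; a hard threshold triggers eviction immediately.

Under disk pressure, the kubelet:

- Deletes dead Pods and containers

- Deletes unused images

- Evicts Pods according to priority and resource usage

Under memory pressure, the kubelet roughly:

- Evicts the least reliable Pods first (BestEffort QoS)

- Then evicts the moderately reliable Pods (Burstable QoS)

- Finally evicts the reliable Pods (Guaranteed QoS)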

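Label

In a Deployment

spec.selector.matchLabels (for example app: nginx) must match the labels defined under template.metadata.labels; if it does not, the Deployment is rejected with an error.

In a Service

spec.selector (for example app: my-dep) matches the labels of Pods.

Labels can also be used to make a Pod run on specific nodes: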

spec:

template:

spec:

nodeSelector:

disktype: ssd

The snippet above makes the Pod run only on nodes that carry the label disktype=ssd.

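Pod scheduling

Affinity

requiredDuringSchedulingIgnoredDuringExecution lists conditions a node must satisfy; if no node satisfies them, the Pod stays in Pending. Multiple entries inside one matchExpressions list must all hold (AND), while multiple nodeSelectorTerms are alternatives (OR).

preferredDuringSchedulingIgnoredDuringExecution lists conditions a node should preferably satisfy; they are not mandatory, and the Pod is deployed either way. weight is the weighting: several conditions can be defined, and the higher the weight, the higher the priority.

nodeAffinity (node affinity)

Node affinity lets a Pod choose which nodes it is deployed to. It can be used to

deploy a Pod onto nodes that do, or do not, carry a given label.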

kind: Pod

apiVersion: v1

metadata:

name: nginx

labels:

app: nginx

spec:

affinity:

nodeAffinity:

requiredDuringSchedulingIgnoredDuringExecution:

nodeSelectorTerms:

- matchExpressions:

- operator: In

key: 'beta.kubernetes.io/arch'

values:

- amd64

preferredDuringSchedulingIgnoredDuringExecution:

- weight: 1

preference:

matchExpressions:

- operator: NotIn

key: 'disktype'

values:

- ssd

containers:

- name: nginx

image: nginx

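podAffinity (Pod affinity)

Pod affinity describes the affinity between one Pod and other Pods. It can be used when

a Pod should run together with some other Pod, or should not run together with it.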

kind: Pod

apiVersion: v1

metadata:

  name: nginx

  labels:

    app: nginx

spec:

  affinity:

    podAffinity:

      requiredDuringSchedulingIgnoredDuringExecution:

      - labelSelector:

          matchExpressions:

          - operator: In

            key: 'app'

            values:

            - web-demo

        topologyKey: kubernetes.io/hostname # Topology domain: nodes sharing the same kubernetes.io/hostname value, so each node is its own domain

        # In other words, this Pod must run on a node that already runs a Pod labelled app=web-demo

      preferredDuringSchedulingIgnoredDuringExecution:

      - weight: 1

        podAffinityTerm:

          labelSelector:

            matchExpressions:

            - operator: In

              key: 'app'

              values:

              - web-demo-node

          topologyKey: kubernetes.io/hostname

  containers:

  - name: nginx

    image: nginx

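Taints

Creating a taint

A taint has one of three effects:

1. NoSchedule: new Pods are not scheduled onto the node; Pods already on the node are not affected.

2. PreferNoSchedule: the scheduler tries to avoid placing Pods on the node, and Pods already on the node are not affected. If no other node can take a Pod, it is still scheduled onto this node.

3. NoExecute: eviction. New Pods are not scheduled onto the node, and Pods already on the node are evicted.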

[root@master ~]# kubectl taint nodes node01 gpu=true:NoSchedule

View the taints that are set

[root@master ~]# kubectl describe node node01

Look for the Taints field in the output

Removing a taint

Remove the taint with the given key and effect

[root@master ~]# kubectl taint nodes node01 gpu=true:NoSchedule-

Remove all taints with the given key

[root@master ~]# kubectl taint nodes node01 gpu-

Tolerations

tolerations

kind: Pod

apiVersion: v1

metadata:

name: nginx

labels:

app: nginx

spec:

containers:

- name: nginx

image: nginx

tolerations:

- key: gpu

operator: "Equal"

value: "true"

effect: "NoSchedule"

# The key, value and effect must be the same as those used when the taint was created
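
The matching rule behind that comment can be written down as a small predicate. This is only an illustration of the rule, not scheduler code; the function name and argument order are invented for this note.

```shell
#!/bin/sh
# tolerates TAINT_KEY TAINT_VALUE TAINT_EFFECT TOL_KEY TOL_OPERATOR TOL_VALUE TOL_EFFECT
# Succeeds when the toleration matches the taint (illustrative sketch only).
tolerates() {
  t_key=$1 t_val=$2 t_eff=$3 k=$4 op=$5 v=$6 e=$7
  # An empty toleration effect matches every taint effect.
  [ -z "$e" ] || [ "$e" = "$t_eff" ] || return 1
  case $op in
    # Exists ignores the value; an empty key with Exists matches every key.
    Exists)   [ -z "$k" ] || [ "$k" = "$t_key" ] ;;
    # Equal is the default operator: key and value must both match.
    Equal|"") [ "$k" = "$t_key" ] && [ "$v" = "$t_val" ] ;;
    *)        return 1 ;;
  esac
}

# The taint gpu=true:NoSchedule against the toleration from the Pod above.
if tolerates gpu true NoSchedule gpu Equal true NoSchedule; then
  echo "tolerated"
else
  echo "not tolerated"
fi
```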

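Deployment strategies

Rolling update

The service is not interrupted. This is the strategy a Deployment uses by default.

apiVersion: apps/v1

kind: Deployment

metadata:

  name: web-rollingupdate

spec:

  strategy:

    rollingUpdate:

      maxSurge: 25% # How many Pods may exist above the desired count during the update; with 4 Pods, 25% allows 1 extra. An absolute number such as 1 also works.

      maxUnavailable: 25% # Maximum share of Pods that may be unavailable during the update

    type: RollingUpdate

kubectl can pause or resume a rolling update, or roll it back.

web-rollingupdate is the name of the Deployment.

Pause

kubectl rollout pause deploy web-rollingupdate

Resume

kubectl rollout resume deploy web-rollingupdate

Roll back

kubectl rollout undo deploy web-rollingupdate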
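
When maxSurge and maxUnavailable are given as percentages, Kubernetes converts them to Pod counts by rounding maxSurge up and maxUnavailable down. A quick sketch of that arithmetic, with function names made up for this note:

```shell
#!/bin/sh
# max_surge REPLICAS PERCENT: extra Pods allowed during the update (rounded up).
max_surge() { echo $(( ($1 * $2 + 99) / 100 )); }

# max_unavailable REPLICAS PERCENT: Pods that may be unavailable (rounded down).
max_unavailable() { echo $(( $1 * $2 / 100 )); }

for replicas in 4 10; do
  echo "replicas=$replicas surge=$(max_surge "$replicas" 25) unavailable=$(max_unavailable "$replicas" 25)"
done
```

With 10 replicas and 25%, up to 13 Pods may exist at once and at least 8 stay available.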

Recreate

The service is interrupted: all old Pods are terminated before the new ones are created.

apiVersion: apps/v1

kind: Deployment

metadata:

name: web-recreate

spec:

strategy:

type: Recreate

Blue-green deployment

web-blue.yaml

apiVersion: apps/v1

kind: Deployment

metadata:

labels:

app: my-dep

name: web-blue

spec:

strategy:

rollingUpdate:

maxSurge: 25%

maxUnavailable: 25%

type: RollingUpdate

replicas: 2

selector:

matchLabels:

app: my-dep

template:

metadata:

labels:

app: my-dep

version: v1

spec:

containers:

- image: nginx

name: nginx

---

apiVersion: v1

kind: Service

metadata:

labels:

app: my-dep

name: my-dep

spec:

selector:

app: my-dep

version: v1

ports:

- port: 8000

protocol: TCP

targetPort: 80

kubectl apply -f web-blue.yaml

Treat the above as the blue version. Once it is up and running, deploy the green version as a separate Deployment whose template labels carry version: v2.

web-green.yaml

apiVersion: apps/v1

kind: Deployment

metadata:

labels:

app: my-dep

name: web-green

spec:

strategy:

rollingUpdate:

maxSurge: 25%

maxUnavailable: 25%

type: RollingUpdate

replicas: 2

selector:

matchLabels:

app: my-dep

template:

metadata:

labels:

app: my-dep

version: v2

spec:

containers:

- image: nginx

name: nginx

kubectl apply -f web-green.yaml

Once the green version is up and running, update the Service so that its selector uses version: v2.

web-svc.yaml

apiVersion: v1

kind: Service

metadata:

labels:

app: my-dep

name: my-dep

spec:

selector:

app: my-dep

version: v2

ports:

- port: 8000

protocol: TCP

targetPort: 80

kubectl apply -f web-svc.yaml

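This is a blue-green deployment. The switch does not interrupt the service, and the old version's Pods can be kept running, for instance until the next release replaces them, which keeps the service safe.

Canary

In a canary deployment both versions run and serve traffic at the same time. For a web service, clients alternate between the two versions. It is similar to blue-green, except that both versions are live together.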

web-canary.yaml

apiVersion: apps/v1

kind: Deployment

metadata:

labels:

app: my-dep

name: web-blue

spec:

strategy:

rollingUpdate:

maxSurge: 25%

maxUnavailable: 25%

type: RollingUpdate

replicas: 2

selector:

matchLabels:

app: my-dep

template:

metadata:

labels:

app: my-dep

version: v1

spec:

containers:

- image: nginx

name: nginx

---

apiVersion: apps/v1

kind: Deployment

metadata:

labels:

app: my-dep

name: web-canary

spec:

strategy:

rollingUpdate:

maxSurge: 25%

maxUnavailable: 25%

type: RollingUpdate

replicas: 2

selector:

matchLabels:

app: my-dep

template:

metadata:

labels:

app: my-dep

version: v2

spec:

containers:

- image: nginx

name: nginx

---

apiVersion: v1

kind: Service

metadata:

labels:

app: my-dep

name: my-dep

spec:

selector:

app: my-dep

ports:

- port: 8000

protocol: TCP

targetPort: 80

kubectl apply -f web-canary.yaml
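
Because the Service selects only app: my-dep, requests are spread across all matching Pods, so the share reaching each version follows the replica counts approximately. A tiny sketch of that estimate (the function name is invented here):

```shell
#!/bin/sh
# canary_share STABLE_REPLICAS CANARY_REPLICAS: approximate percentage of requests
# that reach the canary version, assuming traffic is spread evenly across Pods.
canary_share() {
  echo $(( $2 * 100 / ($1 + $2) ))
}

echo "2 stable + 2 canary replicas: about $(canary_share 2 2)% on the canary"
echo "9 stable + 1 canary replica:  about $(canary_share 9 1)% on the canary"
```

Lowering the canary's replicas relative to the stable version is the simplest way to expose fewer users to the new release.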

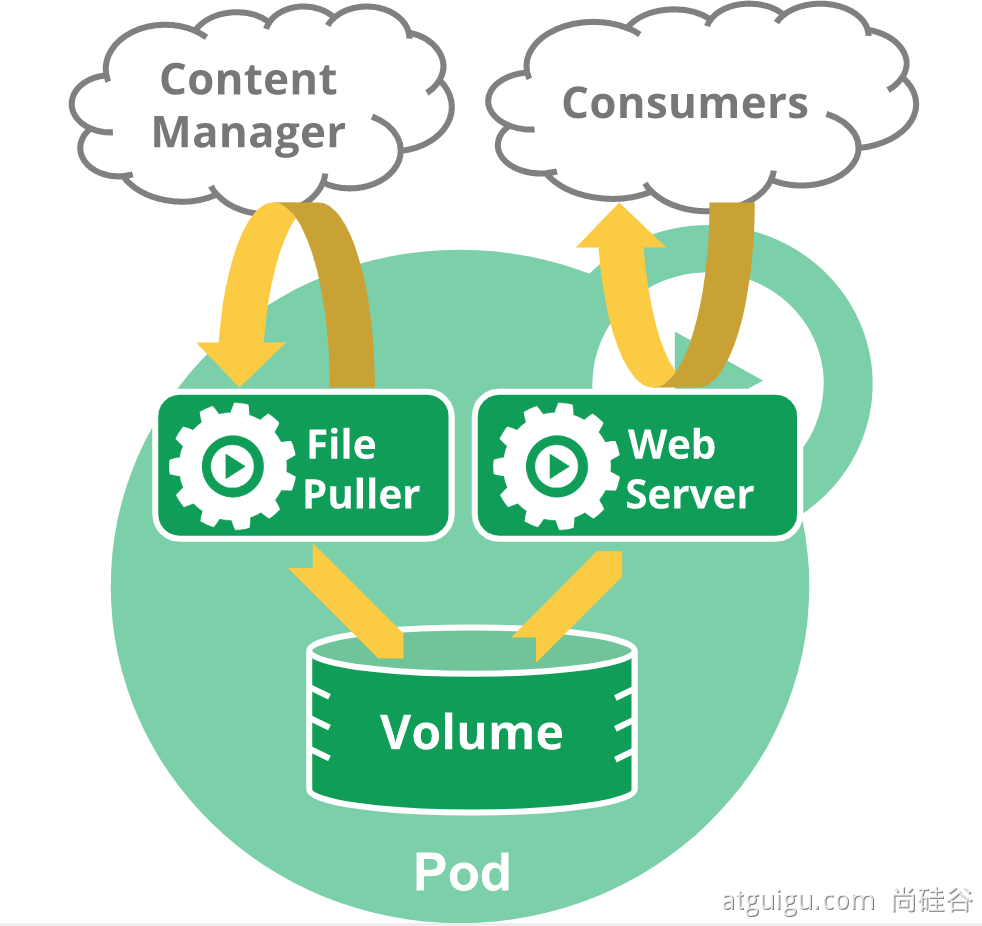

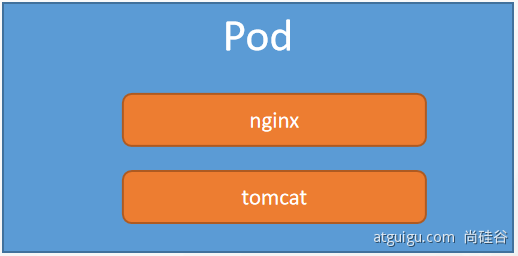

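3. Pod

A Pod is a group of running containers and is the smallest schedulable unit for applications in Kubernetes.

At its core it is still container isolation.

If a Pod has several containers, they share one network, and mounted volumes are shared as well.

Pod states

- Pending: the Pod has not been scheduled yet

- ContainerCreating: the containers are being created (initialization)

- Running: the Pod is running

- Succeeded: the Pod completed successfully

- Failed: the Pod failed

- Ready: the Pod has passed its health checks

- CrashLoopBackOff: the Pod fails its health checks or keeps failing to start

- Unknown: the state is unknown, usually because the API server has received no report about the Pod, for example when communication between the kubelet and the API server is broken

Pod operations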

kubectl run mynginx --image=nginx

# List the Pods in the mynginx namespace

[root@master ~]# kubectl get pod -n mynginx

# Show Pod labels

[root@master ~]# kubectl get pod --show-labels

# List Pods with the label app=nginx

[root@master ~]# kubectl get pod -l app=nginx

# Describe a Pod

kubectl describe pod <your-pod-name>

# Delete a Pod

kubectl delete pod <pod-name>

# View a Pod's logs

kubectl logs <pod-name>

# Open a shell inside a Pod

kubectl exec -it mynginx-5b686ccd46-p9fd7 -- /bin/bash

# Kubernetes assigns every Pod its own IP

kubectl get pod -owide

# Access it with the Pod IP plus the port of the container running in the Pod

curl 192.168.169.136

# Any machine and any application in the cluster can reach the Pod through its assigned IP

apiVersion: v1

kind: Pod

metadata:

labels:

run: mynginx

name: mynginx

# namespace: default # specify the namespace

spec:

containers:

- image: nginx

name: mynginx

apiVersion: v1

kind: Pod

metadata:

labels:

run: myapp

name: myapp

spec:

containers:

- image: nginx

name: nginx

- image: tomcat:8.5.68

name: tomcat

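At this point the application cannot yet be accessed from outside the cluster.

4、Controllers

deployment -- manages multiple replicas -- maintains the desired number of Pod replicas

replicaset -- manages multiple replicas -- manages Pods directly

daemonset -- runs exactly one Pod replica on each node

statefulset -- stateful Pods (stable names, ordered)

job -- the Pod runs to completion and then exits

cronjob -- runs tasks on a schedule

Deployment

Controls Pods, giving them multi-replica, self-healing, and scaling capabilities

# Delete all Pods, then compare the effects of the following two commands

kubectl run mynginx --image=nginx

kubectl create deployment mytomcat --image=tomcat:8.5.68

# Delete the deployment

[root@master ~]# kubectl delete deployment mytomcat

# Self-healing

Syntax and field meanings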

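# API version

apiVersion: apps/v1

# Resource type/role; a Deployment is a replica controller

# The resource type here can be Deployment, Job, Ingress, Service, and so on

kind: Deployment

# Resource metadata, such as the name, namespace, and labels

metadata:

# Resource name; must be unique within a namespace

name: nginx-deployment

labels:

app: nginx

# Parameters of the Deployment, such as whether containers are restarted on failure

spec:

# Number of replicas

replicas: 3

# Label selector

selector:

# Labels to match

matchLabels:

# Must match the labels defined in .spec.template.metadata.labels below

app: nginx

# Pod template; every replica is created from this template

template:

metadata:

# Labels applied to the Pod replicas; must match .spec.selector.matchLabels above

labels:

app: nginx

spec:

# Container definitions

containers:

# Container name; each "- name:" entry defines one container

- name: nginx

# Image and version used by the container

image: nginx:1.15.4

ports:

# Port the container exposes

- containerPort: 80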
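The rule that the selector labels and the template labels must agree can be checked mechanically. The following Python sketch works on a dictionary shaped like the manifest above; `selector_matches_template` is a hypothetical helper for illustration, not a Kubernetes API.

```python
def selector_matches_template(deployment):
    """True if every spec.selector.matchLabels pair appears in the Pod template labels."""
    spec = deployment["spec"]
    match_labels = spec["selector"]["matchLabels"]
    template_labels = spec["template"]["metadata"]["labels"]
    return all(template_labels.get(key) == value for key, value in match_labels.items())


# The manifest above, reduced to the fields that matter for this check.
NGINX_DEPLOYMENT = {
    "metadata": {"name": "nginx-deployment"},
    "spec": {
        "replicas": 3,
        "selector": {"matchLabels": {"app": "nginx"}},
        "template": {"metadata": {"labels": {"app": "nginx"}}},
    },
}

if __name__ == "__main__":
    print(selector_matches_template(NGINX_DEPLOYMENT))
```

The template may carry extra labels (for example a version label); only the pairs listed in matchLabels have to be present.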

1、Multiple replicas

kubectl create deployment my-dep --image=nginx --replicas=3

apiVersion: apps/v1

kind: Deployment

metadata:

labels:

app: my-dep

name: my-dep

spec:

replicas: 3

selector:

matchLabels:

app: my-dep

template:

metadata:

labels:

app: my-dep

spec:

containers:

- image: nginx

name: nginx

2、Scaling

kubectl scale deployment/my-dep --replicas=5

kubectl edit deployment my-dep

# Modify replicas

3、Self-healing & failover

- Node shutdown

- Pod deletion

- Container crash

- ....
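Self-healing in all of the cases listed above comes down to repeatedly comparing the desired replica count with the Pods that actually exist. The Python sketch below shows one such reconciliation step under simplifying assumptions: Pods are plain names, and `reconcile` and the `my-dep-N` naming are hypothetical, not the real controller code.

```python
def reconcile(desired, running, next_id):
    """One reconciliation step.

    Returns (pods, created, removed): the Pod list after the step, the names
    that had to be created, and the names that had to be removed.
    """
    pods = list(running)
    created = []
    while len(pods) < desired:
        name = f"my-dep-{next_id}"  # hypothetical naming scheme
        next_id += 1
        pods.append(name)
        created.append(name)
    removed = pods[desired:]
    return pods[:desired], created, removed


if __name__ == "__main__":
    # One of three Pods was deleted or crashed: a replacement is created.
    print(reconcile(3, ["my-dep-1", "my-dep-3"], next_id=4))
    # Scaling down from three to two removes the surplus Pod.
    print(reconcile(2, ["my-dep-1", "my-dep-3", "my-dep-4"], next_id=5))
```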

4、Rolling update

kubectl set image deployment/my-dep nginx=nginx:1.16.1 --record

# Check the rollout status

[root@master ~]# kubectl rollout status deployment/my-dep

Modify the Pod

# Edit: kubectl edit deployment/my-dep
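During a rolling update the Pod count is bounded by maxSurge and maxUnavailable (both 25% in the manifests earlier in this document). A minimal Python sketch of how the percentages translate into Pod counts, assuming the documented rounding (maxSurge rounds up, maxUnavailable rounds down); `rolling_update_bounds` is a hypothetical helper.

```python
import math


def rolling_update_bounds(replicas, max_surge="25%", max_unavailable="25%"):
    """Return (max_total_pods, min_available_pods) during a rolling update."""

    def resolve(value, round_up):
        # Percentages are taken relative to the desired replica count.
        if isinstance(value, str) and value.endswith("%"):
            raw = replicas * int(value[:-1]) / 100
            return math.ceil(raw) if round_up else math.floor(raw)
        return int(value)

    surge = resolve(max_surge, round_up=True)
    unavailable = resolve(max_unavailable, round_up=False)
    return replicas + surge, replicas - unavailable


if __name__ == "__main__":
    for count in (2, 3, 10):
        print(count, rolling_update_bounds(count))
```

With 3 replicas, for example, this yields at most 4 Pods in total and at least 3 available, so new Pods are started before old ones are removed.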

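5、Rollback

# History

[root@master ~]# kubectl rollout history deployment/my-dep

# Show the details of a specific revision

kubectl rollout history deployment/my-dep --revision=2

# Roll back (to the previous revision)

kubectl rollout undo deployment/my-dep

# Roll back (to a specific revision)

[root@master ~]# kubectl rollout undo deployment/my-dep --to-revision=1

More:

Besides Deployment, Kubernetes also has resource types such as StatefulSet, DaemonSet, and Job. Together they are called workloads.

Deploy stateful applications with a StatefulSet and stateless applications with a Deployment.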

https://kubernetes.io/zh/docs/concepts/workloads/controllers/

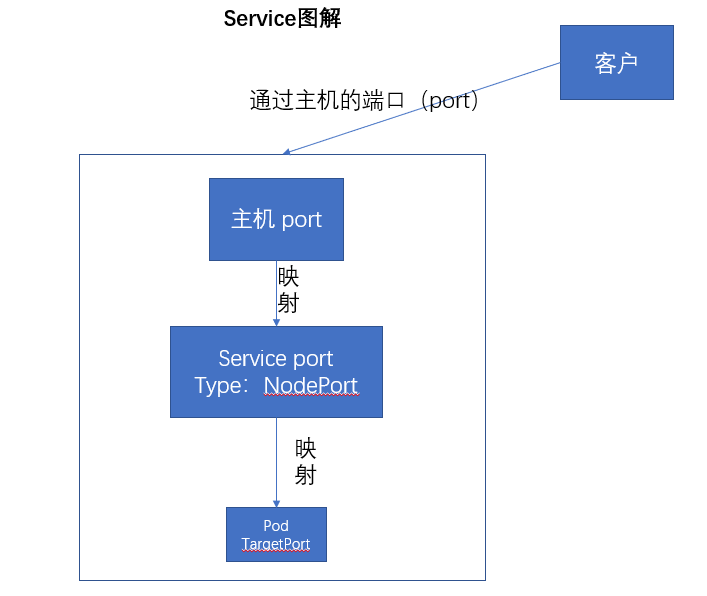

5、Service

An abstract way to expose a group of Pods as a network service.

# Expose the Deployment

kubectl expose deployment my-dep --port=8000 --target-port=80

# Select Pods by label

kubectl get pod -l app=my-dep

apiVersion: v1

kind: Service

metadata:

labels:

app: my-dep

name: my-dep

spec:

selector:

app: my-dep

ports:

- port: 8000

protocol: TCP

targetPort: 80

1、ClusterIP

# Equivalent to omitting --type

kubectl expose deployment my-dep --port=8000 --target-port=80 --type=ClusterIP

apiVersion: v1

kind: Service

metadata:

labels:

app: my-dep

name: my-dep

spec:

ports:

- port: 8000

protocol: TCP

targetPort: 80

selector:

app: my-dep

type: ClusterIP

2、NodePort

kubectl expose deployment my-dep --port=8000 --target-port=80 --type=NodePort

apiVersion: v1

kind: Service

metadata:

labels:

app: my-dep

name: my-dep

spec:

ports:

- port: 8000

protocol: TCP

targetPort: 80

selector:

app: my-dep

type: NodePort

By default, NodePort values fall in the range 30000-32767
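The following Python sketch, offered only as an illustration, checks a port against the default range and spells out the hops of a request through a NodePort Service like the one above. The node IP and the helper names are assumptions made for the example.

```python
NODE_PORT_MIN = 30000
NODE_PORT_MAX = 32767


def is_valid_node_port(port):
    """True if port lies in the default NodePort range."""
    return NODE_PORT_MIN <= port <= NODE_PORT_MAX


def describe_route(node_ip, node_port, port, target_port):
    """Describe the hops of a request: node port, Service port, container port."""
    if not is_valid_node_port(node_port):
        raise ValueError(f"nodePort {node_port} is outside {NODE_PORT_MIN}-{NODE_PORT_MAX}")
    return f"{node_ip}:{node_port} -> ClusterIP:{port} -> Pod:{target_port}"


if __name__ == "__main__":
    # 10.0.0.1 and 31234 are made-up values; 8000 and 80 come from the manifest above.
    print(describe_route("10.0.0.1", 31234, 8000, 80))
```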

6、Ingress

1、Installation

wget https://raw.githubusercontent.com/kubernetes/ingress-nginx/controller-v1.6.4/deploy/static/provider/cloud/deploy.yaml

# Check the installation result

kubectl get pod,svc -n ingress-nginx

# Edit the Deployment in the file so that the Pod uses hostNetwork and is scheduled onto nodes labeled node=ingress

spec:

nodeSelector:

node: ingress

hostNetwork: true

If the download fails, use the following file

apiVersion: v1

kind: Namespace

metadata:

labels:

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/name: ingress-nginx

name: ingress-nginx

---

apiVersion: v1

automountServiceAccountToken: true

kind: ServiceAccount

metadata:

labels:

app.kubernetes.io/component: controller

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/part-of: ingress-nginx

app.kubernetes.io/version: 1.6.4

name: ingress-nginx

namespace: ingress-nginx

---

apiVersion: v1

kind: ServiceAccount

metadata:

labels:

app.kubernetes.io/component: admission-webhook

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/part-of: ingress-nginx

app.kubernetes.io/version: 1.6.4

name: ingress-nginx-admission

namespace: ingress-nginx

---

apiVersion: rbac.authorization.k8s.io/v1

kind: Role

metadata:

labels:

app.kubernetes.io/component: controller

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/part-of: ingress-nginx

app.kubernetes.io/version: 1.6.4

name: ingress-nginx

namespace: ingress-nginx

rules:

- apiGroups:

- ""

resources:

- namespaces

verbs:

- get

- apiGroups:

- ""

resources:

- configmaps

- pods

- secrets

- endpoints

verbs:

- get

- list

- watch

- apiGroups:

- ""

resources:

- services

verbs:

- get

- list

- watch

- apiGroups:

- networking.k8s.io

resources:

- ingresses

verbs:

- get

- list

- watch

- apiGroups:

- networking.k8s.io

resources:

- ingresses/status

verbs:

- update

- apiGroups:

- networking.k8s.io

resources:

- ingressclasses

verbs:

- get

- list

- watch

- apiGroups:

- coordination.k8s.io

resourceNames:

- ingress-nginx-leader

resources:

- leases

verbs:

- get

- update

- apiGroups:

- coordination.k8s.io

resources:

- leases

verbs:

- create

- apiGroups:

- ""

resources:

- events

verbs:

- create

- patch

- apiGroups:

- discovery.k8s.io

resources:

- endpointslices

verbs:

- list

- watch

- get

---

apiVersion: rbac.authorization.k8s.io/v1

kind: Role

metadata:

labels:

app.kubernetes.io/component: admission-webhook

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/part-of: ingress-nginx

app.kubernetes.io/version: 1.6.4

name: ingress-nginx-admission

namespace: ingress-nginx

rules:

- apiGroups:

- ""

resources:

- secrets

verbs:

- get

- create

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRole

metadata:

labels:

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/part-of: ingress-nginx

app.kubernetes.io/version: 1.6.4

name: ingress-nginx

rules:

- apiGroups:

- ""

resources:

- configmaps

- endpoints

- nodes

- pods

- secrets

- namespaces

verbs:

- list

- watch

- apiGroups:

- coordination.k8s.io

resources:

- leases

verbs:

- list

- watch

- apiGroups:

- ""

resources:

- nodes

verbs:

- get

- apiGroups:

- ""

resources:

- services

verbs:

- get

- list

- watch

- apiGroups:

- networking.k8s.io

resources:

- ingresses

verbs:

- get

- list

- watch

- apiGroups:

- ""

resources:

- events

verbs:

- create

- patch

- apiGroups:

- networking.k8s.io

resources:

- ingresses/status

verbs:

- update

- apiGroups:

- networking.k8s.io

resources:

- ingressclasses

verbs:

- get

- list

- watch

- apiGroups:

- discovery.k8s.io

resources:

- endpointslices

verbs:

- list

- watch

- get

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRole

metadata:

labels:

app.kubernetes.io/component: admission-webhook

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/part-of: ingress-nginx

app.kubernetes.io/version: 1.6.4

name: ingress-nginx-admission

rules:

- apiGroups:

- admissionregistration.k8s.io

resources:

- validatingwebhookconfigurations

verbs:

- get

- update

---

apiVersion: rbac.authorization.k8s.io/v1

kind: RoleBinding

metadata:

labels:

app.kubernetes.io/component: controller

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/part-of: ingress-nginx

app.kubernetes.io/version: 1.6.4

name: ingress-nginx

namespace: ingress-nginx

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: Role

name: ingress-nginx

subjects:

- kind: ServiceAccount

name: ingress-nginx

namespace: ingress-nginx

---

apiVersion: rbac.authorization.k8s.io/v1

kind: RoleBinding

metadata:

labels:

app.kubernetes.io/component: admission-webhook

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/part-of: ingress-nginx

app.kubernetes.io/version: 1.6.4

name: ingress-nginx-admission

namespace: ingress-nginx

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: Role

name: ingress-nginx-admission

subjects:

- kind: ServiceAccount

name: ingress-nginx-admission

namespace: ingress-nginx

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:

labels:

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/part-of: ingress-nginx

app.kubernetes.io/version: 1.6.4

name: ingress-nginx

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: ClusterRole

name: ingress-nginx

subjects:

- kind: ServiceAccount

name: ingress-nginx

namespace: ingress-nginx

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:

labels:

app.kubernetes.io/component: admission-webhook

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/part-of: ingress-nginx

app.kubernetes.io/version: 1.6.4

name: ingress-nginx-admission

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: ClusterRole

name: ingress-nginx-admission

subjects:

- kind: ServiceAccount

name: ingress-nginx-admission

namespace: ingress-nginx

---

apiVersion: v1

data:

allow-snippet-annotations: "true"

kind: ConfigMap

metadata:

labels:

app.kubernetes.io/component: controller

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/part-of: ingress-nginx

app.kubernetes.io/version: 1.6.4

name: ingress-nginx-controller

namespace: ingress-nginx

---

apiVersion: v1

kind: Service

metadata:

labels:

app.kubernetes.io/component: controller

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/part-of: ingress-nginx

app.kubernetes.io/version: 1.6.4

name: ingress-nginx-controller

namespace: ingress-nginx

spec:

externalTrafficPolicy: Local

ipFamilies:

- IPv4

ipFamilyPolicy: SingleStack

ports:

- appProtocol: http

name: http

port: 80

protocol: TCP

targetPort: http

- appProtocol: https

name: https

port: 443

protocol: TCP

targetPort: https

selector:

app.kubernetes.io/component: controller

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/name: ingress-nginx

type: LoadBalancer

---

apiVersion: v1

kind: Service

metadata:

labels:

app.kubernetes.io/component: controller

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/part-of: ingress-nginx

app.kubernetes.io/version: 1.6.4

name: ingress-nginx-controller-admission

namespace: ingress-nginx

spec:

ports:

- appProtocol: https

name: https-webhook

port: 443

targetPort: webhook

selector:

app.kubernetes.io/component: controller

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/name: ingress-nginx

type: ClusterIP

---

apiVersion: apps/v1

kind: Deployment

metadata:

labels:

app.kubernetes.io/component: controller

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/part-of: ingress-nginx

app.kubernetes.io/version: 1.6.4

name: ingress-nginx-controller

namespace: ingress-nginx

spec:

minReadySeconds: 0

revisionHistoryLimit: 10

selector:

matchLabels:

app.kubernetes.io/component: controller

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/name: ingress-nginx

template:

metadata:

labels:

app.kubernetes.io/component: controller

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/name: ingress-nginx

spec:

nodeSelector:

node: ingress

hostNetwork: true

containers:

- args:

- /nginx-ingress-controller

- --publish-service=$(POD_NAMESPACE)/ingress-nginx-controller

- --election-id=ingress-nginx-leader

- --controller-class=k8s.io/ingress-nginx

- --ingress-class=nginx

- --configmap=$(POD_NAMESPACE)/ingress-nginx-controller

- --validating-webhook=:8443

- --validating-webhook-certificate=/usr/local/certificates/cert

- --validating-webhook-key=/usr/local/certificates/key

env:

- name: POD_NAME

valueFrom:

fieldRef:

fieldPath: metadata.name

- name: POD_NAMESPACE

valueFrom:

fieldRef:

fieldPath: metadata.namespace

- name: LD_PRELOAD

value: /usr/local/lib/libmimalloc.so

image: registry.k8s.io/ingress-nginx/controller:v1.6.4@sha256:15be4666c53052484dd2992efacf2f50ea77a78ae8aa21ccd91af6baaa7ea22f

imagePullPolicy: IfNotPresent

lifecycle:

preStop:

exec:

command:

- /wait-shutdown

livenessProbe:

failureThreshold: 5

httpGet:

path: /healthz

port: 10254

scheme: HTTP

initialDelaySeconds: 10

periodSeconds: 10

successThreshold: 1

timeoutSeconds: 1

name: controller

ports:

- containerPort: 80

name: http

protocol: TCP

- containerPort: 443

name: https

protocol: TCP

- containerPort: 8443

name: webhook

protocol: TCP

readinessProbe:

failureThreshold: 3

httpGet:

path: /healthz

port: 10254

scheme: HTTP

initialDelaySeconds: 10

periodSeconds: 10

successThreshold: 1

timeoutSeconds: 1

resources:

requests:

cpu: 100m

memory: 90Mi

securityContext:

allowPrivilegeEscalation: true

capabilities:

add:

- NET_BIND_SERVICE

drop:

- ALL

runAsUser: 101

volumeMounts:

- mountPath: /usr/local/certificates/

name: webhook-cert

readOnly: true

dnsPolicy: ClusterFirst

serviceAccountName: ingress-nginx

terminationGracePeriodSeconds: 300

volumes:

- name: webhook-cert

secret:

secretName: ingress-nginx-admission

---

apiVersion: batch/v1

kind: Job

metadata:

labels:

app.kubernetes.io/component: admission-webhook

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/part-of: ingress-nginx

app.kubernetes.io/version: 1.6.4

name: ingress-nginx-admission-create

namespace: ingress-nginx

spec:

template:

metadata:

labels:

app.kubernetes.io/component: admission-webhook

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/part-of: ingress-nginx

app.kubernetes.io/version: 1.6.4

name: ingress-nginx-admission-create

spec:

containers:

- args:

- create

- --host=ingress-nginx-controller-admission,ingress-nginx-controller-admission.$(POD_NAMESPACE).svc

- --namespace=$(POD_NAMESPACE)

- --secret-name=ingress-nginx-admission

env:

- name: POD_NAMESPACE

valueFrom:

fieldRef:

fieldPath: metadata.namespace

image: registry.k8s.io/ingress-nginx/kube-webhook-certgen:v20220916-gd32f8c343@sha256:39c5b2e3310dc4264d638ad28d9d1d96c4cbb2b2dcfb52368fe4e3c63f61e10f

imagePullPolicy: IfNotPresent

name: create

securityContext:

allowPrivilegeEscalation: false

nodeSelector:

kubernetes.io/os: linux

restartPolicy: OnFailure

securityContext:

fsGroup: 2000

runAsNonRoot: true

runAsUser: 2000

serviceAccountName: ingress-nginx-admission

---

apiVersion: batch/v1

kind: Job

metadata:

labels:

app.kubernetes.io/component: admission-webhook

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/part-of: ingress-nginx

app.kubernetes.io/version: 1.6.4

name: ingress-nginx-admission-patch

namespace: ingress-nginx

spec:

template:

metadata:

labels:

app.kubernetes.io/component: admission-webhook

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/part-of: ingress-nginx

app.kubernetes.io/version: 1.6.4

name: ingress-nginx-admission-patch

spec:

containers:

- args:

- patch

- --webhook-name=ingress-nginx-admission

- --namespace=$(POD_NAMESPACE)

- --patch-mutating=false

- --secret-name=ingress-nginx-admission

- --patch-failure-policy=Fail

env:

- name: POD_NAMESPACE

valueFrom:

fieldRef:

fieldPath: metadata.namespace

image: registry.k8s.io/ingress-nginx/kube-webhook-certgen:v20220916-gd32f8c343@sha256:39c5b2e3310dc4264d638ad28d9d1d96c4cbb2b2dcfb52368fe4e3c63f61e10f

imagePullPolicy: IfNotPresent

name: patch

securityContext:

allowPrivilegeEscalation: false

nodeSelector:

kubernetes.io/os: linux

restartPolicy: OnFailure

securityContext:

fsGroup: 2000

runAsNonRoot: true

runAsUser: 2000

serviceAccountName: ingress-nginx-admission

---

apiVersion: networking.k8s.io/v1

kind: IngressClass

metadata:

labels:

app.kubernetes.io/component: controller

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/part-of: ingress-nginx

app.kubernetes.io/version: 1.6.4

name: nginx

spec:

controller: k8s.io/ingress-nginx

---

apiVersion: admissionregistration.k8s.io/v1

kind: ValidatingWebhookConfiguration

metadata:

labels:

app.kubernetes.io/component: admission-webhook

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/part-of: ingress-nginx

app.kubernetes.io/version: 1.6.4

name: ingress-nginx-admission

webhooks:

- admissionReviewVersions:

- v1

clientConfig:

service:

name: ingress-nginx-controller-admission

namespace: ingress-nginx

path: /networking/v1/ingresses

failurePolicy: Fail

matchPolicy: Equivalent

name: validate.nginx.ingress.kubernetes.io

rules:

- apiGroups:

- networking.k8s.io

apiVersions:

- v1

operations:

- CREATE

- UPDATE

resources:

- ingresses

sideEffects: None

2、Usage

Official site: https://kubernetes.github.io/ingress-nginx/

It is built on nginx.

Test environment

Apply the following YAML to prepare the test environment

apiVersion: apps/v1

kind: Deployment

metadata:

name: hello-server

spec:

replicas: 2

selector:

matchLabels:

app: hello-server

template:

metadata:

labels:

app: hello-server

spec:

containers:

- name: hello-server

image: registry.cn-hangzhou.aliyuncs.com/lfy_k8s_images/hello-server

ports:

- containerPort: 9000

---

apiVersion: apps/v1

kind: Deployment

metadata:

labels:

app: nginx-demo

name: nginx-demo

spec:

replicas: 2

selector:

matchLabels:

app: nginx-demo

template:

metadata:

labels:

app: nginx-demo

spec:

containers:

- image: nginx

name: nginx

---

apiVersion: v1

kind: Service

metadata:

labels:

app: nginx-demo

name: nginx-demo

spec:

selector:

app: nginx-demo

ports:

- port: 8000

protocol: TCP

targetPort: 80

---

apiVersion: v1

kind: Service

metadata:

labels:

app: hello-server

name: hello-server

spec:

selector:

app: hello-server

ports:

- port: 8000

protocol: TCP

targetPort: 9000

1、Host-based access

apiVersion: networking.k8s.io/v1

kind: Ingress

metadata:

name: ingress-host-bar

spec:

ingressClassName: nginx

rules:

- host: "hello.haojuetrace.com"

http:

paths:

- pathType: Prefix

path: "/"

backend:

service:

name: hello-server

port:

number: 8000

- host: "nginx.haojuetrace.com"

http:

paths:

- pathType: Prefix

path: "/nginx" # The request is forwarded to the service below with this path; the service must be able to handle the path, otherwise the result is 404

backend:

service:

name: nginx-demo ## for a Java service, for example, use path rewriting to strip the nginx prefix

port:

number: 8000

Question: why do path: "/nginx" and path: "/" behave differently?
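One way to reason about the question: a Prefix path is compared element by element, and the request is passed to the backend with its path unchanged. So /nginx is forwarded as /nginx, which a stock nginx image has no content for. The Python sketch below models only the matching rule; `prefix_matches` is a hypothetical helper, not the controller's code.

```python
def prefix_matches(rule_path, request_path):
    """Element-wise prefix match on '/'-separated path segments."""
    rule = [part for part in rule_path.split("/") if part]
    request = [part for part in request_path.split("/") if part]
    return request[: len(rule)] == rule


if __name__ == "__main__":
    print(prefix_matches("/", "/anything/at/all"))     # "/" matches every path
    print(prefix_matches("/nginx", "/nginx/index.html"))
    print(prefix_matches("/nginx", "/nginxabc"))       # not a whole segment
```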

2、Path rewriting

apiVersion: networking.k8s.io/v1

kind: Ingress

metadata:

annotations:

nginx.ingress.kubernetes.io/rewrite-target: /$2

name: ingress-host-bar

spec:

ingressClassName: nginx

rules:

- host: "hello.haojuetrace.com"

http:

paths:

- pathType: Prefix

path: "/"

backend:

service:

name: hello-server

port:

number: 8000

- host: "nginx.haojuetrace.com"

http:

paths:

- pathType: Prefix

path: "/nginx(/|$)(.*)" # The request is forwarded to the service below; the service must be able to handle the resulting path, otherwise the result is 404

backend:

service:

name: nginx-demo ## for a Java service, for example, use path rewriting to strip the nginx prefix

port:

number: 8000
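The effect of the rewrite-target annotation /$2 together with the path /nginx(/|$)(.*) can be reproduced with an ordinary regular expression. This Python sketch is an approximation for illustration (nginx uses its own regex engine); `rewrite` is a hypothetical helper.

```python
import re

# Same pattern as the Ingress path, anchored at the start of the request path.
PATTERN = re.compile(r"^/nginx(/|$)(.*)")


def rewrite(path):
    """Return the path sent to the backend, or None if the rule does not match."""
    match = PATTERN.match(path)
    if match is None:
        return None
    # rewrite-target is "/$2": a slash followed by the second capture group.
    return "/" + match.group(2)


if __name__ == "__main__":
    for candidate in ("/nginx", "/nginx/", "/nginx/a/b", "/nginxabc"):
        print(candidate, "->", rewrite(candidate))
```

The /nginx prefix is stripped before the request reaches nginx-demo, so the backend no longer needs to serve content under /nginx.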

3、Rate limiting

apiVersion: networking.k8s.io/v1

kind: Ingress

metadata:

name: ingress-limit-rate

annotations:

nginx.ingress.kubernetes.io/limit-rps: "1"

spec:

ingressClassName: nginx

rules:

- host: "haha.atguigu.com"

http:

paths:

- pathType: Exact

path: "/"

backend:

service:

name: nginx-demo

port:

number: 8000
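The limit-rps: "1" annotation caps the requests accepted per second from each client IP. The idea can be sketched with a generic token bucket in Python. This is an assumption-laden illustration, not ingress-nginx's actual implementation: burst handling is ignored, and `TokenBucket` is a hypothetical class.

```python
class TokenBucket:
    """Generic token bucket: `rate` tokens per second, at most `capacity` stored."""

    def __init__(self, rate, capacity):
        self.rate = rate
        self.capacity = capacity
        self.tokens = float(capacity)
        self.last = 0.0

    def allow(self, now):
        """Return True if a request arriving at time `now` (seconds) is accepted."""
        elapsed = now - self.last
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


if __name__ == "__main__":
    bucket = TokenBucket(rate=1, capacity=1)  # one request per second, no burst
    for moment in (0.0, 0.1, 0.5, 1.2):
        print(moment, bucket.allow(moment))
```

Requests that arrive faster than the bucket refills are refused; in the Ingress case the client would see an error response instead of the page.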

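Running ingress-nginx-controller as a DaemonSet

Replacing the Deployment with a DaemonSet removes the need to adjust the replica count repeatedly: one replica starts on every node labeled node=ingress

Copy the Deployment from the installation manifest and modify the relevant settings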

nginx-ingress-controller.yaml

apiVersion: apps/v1

kind: DaemonSet

metadata:

labels:

app.kubernetes.io/component: controller

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/part-of: ingress-nginx

app.kubernetes.io/version: 1.6.4

name: ingress-nginx-controller

namespace: ingress-nginx

spec:

minReadySeconds: 0

revisionHistoryLimit: 10

selector:

matchLabels:

app.kubernetes.io/component: controller

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/name: ingress-nginx

template:

metadata:

labels:

app.kubernetes.io/component: controller

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/name: ingress-nginx

spec:

nodeSelector:

node: ingress

hostNetwork: true

containers:

- args:

- /nginx-ingress-controller

- --publish-service=$(POD_NAMESPACE)/ingress-nginx-controller

- --election-id=ingress-nginx-leader

- --controller-class=k8s.io/ingress-nginx

- --ingress-class=nginx

- --configmap=$(POD_NAMESPACE)/ingress-nginx-controller

- --validating-webhook=:8443

- --validating-webhook-certificate=/usr/local/certificates/cert

- --validating-webhook-key=/usr/local/certificates/key

env:

- name: POD_NAME

valueFrom:

fieldRef:

fieldPath: metadata.name

- name: POD_NAMESPACE

valueFrom:

fieldRef:

fieldPath: metadata.namespace

- name: LD_PRELOAD

value: /usr/local/lib/libmimalloc.so

image: registry.k8s.io/ingress-nginx/controller:v1.6.4@sha256:15be4666c53052484dd2992efacf2f50ea77a78ae8aa21ccd91af6baaa7ea22f

imagePullPolicy: IfNotPresent

lifecycle:

preStop:

exec:

command:

- /wait-shutdown

livenessProbe:

failureThreshold: 5

httpGet:

path: /healthz

port: 10254

scheme: HTTP

initialDelaySeconds: 10

periodSeconds: 10

successThreshold: 1

timeoutSeconds: 1

name: controller

ports:

- containerPort: 80

name: http

protocol: TCP

- containerPort: 443

name: https

protocol: TCP

- containerPort: 8443

name: webhook

protocol: TCP

readinessProbe:

failureThreshold: 3

httpGet:

path: /healthz

port: 10254

scheme: HTTP

initialDelaySeconds: 10

periodSeconds: 10

successThreshold: 1

timeoutSeconds: 1

resources:

requests:

cpu: 100m

memory: 90Mi

securityContext:

allowPrivilegeEscalation: true

capabilities:

add:

- NET_BIND_SERVICE

drop:

- ALL

runAsUser: 101

volumeMounts:

- mountPath: /usr/local/certificates/

name: webhook-cert

readOnly: true

dnsPolicy: ClusterFirst

serviceAccountName: ingress-nginx

terminationGracePeriodSeconds: 300

volumes:

- name: webhook-cert

secret:

secretName: ingress-nginx-admission

# Delete the original Deployment

kubectl delete deploy -n ingress-nginx ingress-nginx-controller

# Deploy the new DaemonSet

kubectl apply -f nginx-ingress-controller.yaml

# Once deployed, any new node labeled node=ingress automatically gets a replica

Layer 4 proxy

Modify the ConfigMap that ships with ingress-nginx

tcp-config.yaml

apiVersion: v1

kind: ConfigMap

metadata:

name: tcp-services

namespace: ingress-nginx

data:

"30000": dev/web-demo:80

# The key (front) is mapped to the value (back)

# 30000 is the port to expose

# dev/web-demo:80 is port 80 of the web-demo Service in the dev namespace
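The mapping format can be made explicit with a short Python sketch that splits one entry into its parts. It handles only the simple namespace/service:port form shown above and is a hypothetical helper, not code from ingress-nginx.

```python
def parse_tcp_service(exposed_port, target):
    """Split an entry such as "30000": "dev/web-demo:80" into its components."""
    namespace_service, service_port = target.rsplit(":", 1)
    namespace, service = namespace_service.split("/", 1)
    return {
        "listen": int(exposed_port),   # port opened on the ingress controller
        "namespace": namespace,
        "service": service,
        "port": int(service_port),     # port of the target Service
    }


if __name__ == "__main__":
    print(parse_tcp_service("30000", "dev/web-demo:80"))
```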

7、Storage abstraction

Environment preparation

1、All nodes

# Install on every machine

yum install -y nfs-utils

2、Master node

# NFS server (master node)

echo "/nfs/data/ *(insecure,rw,sync,no_root_squash)" > /etc/exports

mkdir -p /nfs/data

systemctl enable rpcbind --now

systemctl enable nfs-server --now

# Apply the export configuration

exportfs -r

3、Worker nodes

showmount -e 173.54.54.101

# Run the following commands to mount the shared directory on the NFS server to the local path /nfs/data

mkdir -p /nfs/data

mount -t nfs 173.54.54.101:/nfs/data /nfs/data

# Write a test file

echo "hello nfs server" > /nfs/data/test.txt

4、Mounting data the native way

apiVersion: apps/v1

kind: Deployment

metadata:

labels:

app: nginx-pv-demo

name: nginx-pv-demo

spec:

replicas: 2

selector:

matchLabels:

app: nginx-pv-demo

template:

metadata:

labels:

app: nginx-pv-demo

spec:

containers:

- image: nginx

name: nginx

volumeMounts:

- name: html

mountPath: /usr/share/nginx/html

volumes:

- name: html

nfs:

server: 173.54.54.101

path: /nfs/data/nginx-pv

1、PV&PVC

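PV: Persistent Volume, which stores the data an application needs to persist at a specified location

PVC: Persistent Volume Claim, which declares the specification of the persistent volume required

1、Create a PV pool

Static provisioning

# On the NFS server (master node)

mkdir -p /nfs/data/01

mkdir -p /nfs/data/02

mkdir -p /nfs/data/03

Create the PVs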

apiVersion: v1

kind: PersistentVolume

metadata:

name: pv01-10m

spec:

capacity:

storage: 10M

accessModes:

- ReadWriteMany

storageClassName: nfs

nfs:

path: /nfs/data/01

server: 173.54.54.101

---

apiVersion: v1

kind: PersistentVolume

metadata:

name: pv02-1gi

spec:

capacity:

storage: 1Gi

accessModes:

- ReadWriteMany

storageClassName: nfs

nfs:

path: /nfs/data/02

server: 173.54.54.101

---

apiVersion: v1

kind: PersistentVolume

metadata:

name: pv03-3gi

spec:

capacity:

storage: 3Gi

accessModes:

- ReadWriteMany

storageClassName: nfs

nfs:

path: /nfs/data/03

server: 173.54.54.101

2、Creating and binding a PVC

Create the PVC

kind: PersistentVolumeClaim

apiVersion: v1

metadata:

name: nginx-pvc

spec:

accessModes:

- ReadWriteMany

resources:

requests:

storage: 200Mi

storageClassName: nfs
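Which of the three PVs this claim binds to can be reasoned through with a small Python sketch. It assumes a simplified rule: among PVs with the same storage class and a compatible access mode, the smallest one whose capacity covers the request is chosen. `to_bytes` and `pick_volume` are hypothetical helpers; the real binding logic lives in the Kubernetes control plane.

```python
# Multipliers for the quantity suffixes used in the manifests above.
UNITS = {"Ki": 2**10, "Mi": 2**20, "Gi": 2**30, "k": 10**3, "M": 10**6, "G": 10**9}


def to_bytes(quantity):
    """Convert a quantity such as "200Mi" or "10M" to bytes."""
    for suffix in sorted(UNITS, key=len, reverse=True):  # try "Mi" before "M"
        if quantity.endswith(suffix):
            return int(quantity[: -len(suffix)]) * UNITS[suffix]
    return int(quantity)


# The PV pool defined earlier, reduced to the fields used for matching.
PVS = [
    {"name": "pv01-10m", "storage": "10M", "accessModes": ["ReadWriteMany"], "storageClassName": "nfs"},
    {"name": "pv02-1gi", "storage": "1Gi", "accessModes": ["ReadWriteMany"], "storageClassName": "nfs"},
    {"name": "pv03-3gi", "storage": "3Gi", "accessModes": ["ReadWriteMany"], "storageClassName": "nfs"},
]


def pick_volume(pvs, storage, access_mode, storage_class):
    """Return the name of the smallest PV that satisfies the claim, or None."""
    needed = to_bytes(storage)
    candidates = [
        pv for pv in pvs
        if pv["storageClassName"] == storage_class
        and access_mode in pv["accessModes"]
        and to_bytes(pv["storage"]) >= needed
    ]
    if not candidates:
        return None
    return min(candidates, key=lambda pv: to_bytes(pv["storage"]))["name"]


if __name__ == "__main__":
    # The nginx-pvc claim: 200Mi, ReadWriteMany, storage class nfs.
    print(pick_volume(PVS, "200Mi", "ReadWriteMany", "nfs"))
```

Under this rule the 10M volume is too small for a 200Mi request, and the 1Gi volume is preferred over the 3Gi one.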

Create Pods that use the PVC

apiVersion: apps/v1

kind: Deployment

metadata:

labels:

app: nginx-deploy-pvc

name: nginx-deploy-pvc

spec:

replicas: 2

selector:

matchLabels:

app: nginx-deploy-pvc

template:

metadata:

labels:

app: nginx-deploy-pvc

spec:

containers:

- image: nginx

name: nginx

volumeMounts:

- name: html

mountPath: /usr/share/nginx/html

volumes:

- name: html

persistentVolumeClaim:

claimName: nginx-pvc

2、Dynamic PVs with a StorageClass

This section uses nfs-client-provisioner

Install NFS

[root@master ~]# yum install -y nfs nfs-utils

[root@node ~]# yum install -y nfs-utils

Configure NFS

# Prepare the directory to export

[root@master ~]# mkdir /mnt/storage

[root@master ~]# chmod -R 777 /mnt/storage

[root@master ~]# vim /etc/exports

/mnt/storage *(rw,sync,no_root_squash)

[root@master ~]# systemctl daemon-reload

[root@master ~]# systemctl restart nfs

[root@master ~]# showmount -e 172.18.54.10

Export list for 172.18.54.10:

/mnt/storage *{rw,sync,no_root_squash}

[root@master ~]# kubectl apply -f nfs-provisioner.yaml

[root@master ~]# kubectl apply -f storageclass.yaml

[root@master ~]# kubectl apply -f pvc.yaml

nfs-provisioner.yaml

apiVersion: v1

kind: ServiceAccount

metadata:

name: nfs-provisioner

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRole

metadata:

name: nfs-provisioner

rules:

- apiGroups: [""]

resources: ["persistentvolumeclaims"]

verbs: ["get","list","watch","create","delete"]

- apiGroups: [""]

resources: ["persistentvolumes"]

verbs: ["get","list","watch","create","delete"]

- apiGroups: ["storage.k8s.io"]

resources: ["storageclasses"]

verbs: ["get","list","watch","create","update","delete"]

- apiGroups: [""]

resources: ["services", "endpoints","events"]

verbs: ["get", "list", "watch", "create", "update", "patch"]

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:

name: nfs-provisioner

roleRef:

apiGroup: "rbac.authorization.k8s.io"

kind: ClusterRole

name: nfs-provisioner

subjects:

- kind: ServiceAccount

name: nfs-provisioner

namespace: default

---

apiVersion: apps/v1

kind: Deployment

metadata:

name: nfs-provisioner

labels:

app: nfs-provisioner

spec:

selector:

matchLabels:

app: nfs-provisioner

replicas: 1

template:

metadata:

name: nfs-provisioner-pod

labels:

app: nfs-provisioner

spec:

serviceAccount: nfs-provisioner

containers:

- name: nfs-provisioner-pod

image: 172.18.54.30/library/nfs-client-provisioner:latest

env:

- name: PROVISIONER_NAME

value: haojue

- name: NFS_SERVER

value: 172.18.54.10

- name: NFS_PATH

value: /mnt/storage

volumeMounts:

- name: nfs-client-root

mountPath: /persistentvolumes

volumes:

- name: nfs-client-root

nfs:

path: /mnt/storage

server: 172.18.54.10

storageclass.yaml

apiVersion: storage.k8s.io/v1

kind: StorageClass

metadata:

name: nfs-sc

provisioner: haojue

pvc.yaml

apiVersion: v1

kind: PersistentVolumeClaim

metadata:

name: 5gi

spec:

storageClassName: nfs-sc

accessModes:

- ReadWriteMany

resources:

requests:

storage: 5Gi

3、ConfigMap

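Extracts application configuration and allows it to be updated automatically

1、Redis example

1、Create a ConfigMap from the existing configuration file

# Create the ConfigMap; the Redis configuration is stored in Kubernetes' etcd. By default the file name becomes the key under data

kubectl create cm redis-conf --from-file=redis.conf

apiVersion: v1

data: # data holds the actual content. key: the file name by default; value: the contents of the configuration file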

redis.conf: |

appendonly yes

kind: ConfigMap

metadata:

name: redis-conf

namespace: default

2、Create the Pod

apiVersion: v1

kind: Pod

metadata:

name: redis

spec:

containers:

- name: redis

image: redis

command:

- redis-server

- "/redis-master/redis.conf" # path inside the redis container

ports:

- containerPort: 6379

volumeMounts:

- mountPath: /data

name: data

- mountPath: /redis-master

name: config

volumes:

- name: data

emptyDir: {}

- name: config

configMap:

name: redis-conf

items:

- key: redis.conf

path: redis.conf

3、Check the default configuration

kubectl exec -it redis -- redis-cli

127.0.0.1:6379> CONFIG GET appendonly

127.0.0.1:6379> CONFIG GET requirepass

4、Modify the ConfigMap

apiVersion: v1

kind: ConfigMap

metadata:

name: redis-conf

data:

redis.conf: |

maxmemory 2mb

maxmemory-policy allkeys-lru

5、Check whether the configuration was updated

kubectl exec -it redis -- redis-cli

127.0.0.1:6379> CONFIG GET maxmemory

127.0.0.1:6379> CONFIG GET maxmemory-policy

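Check whether the contents of the mounted file have been updated

After the ConfigMap is modified, the configuration file inside the Pod changes along with it.

The configuration values reported by Redis are unchanged, because the Pod must be restarted to pick up the updated values from the associated ConfigMap.

Reason: the middleware deployed in the Pod has no hot-reload capability of its own.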

# cat mysql-deployment.yaml

apiVersion: v1 # API version v1

kind: Service # resource type: Service

metadata: # resource metadata

name: wordpress-mysql # resource name

labels: # labels

app: wordpress

spec: # parameters of the Service

ports: # ports exposed by the Service

- port: 3306

selector: # label selector

app: wordpress

tier: mysql # tier

clusterIP: None

---

apiVersion: v1

kind: PersistentVolumeClaim # resource type: PVC

metadata:

name: mysql-pv-claim

labels:

app: wordpress

spec:

accessModes:

- ReadWriteOnce

resources:

requests:

storage: 20Gi

---

apiVersion: apps/v1 # for versions before 1.9.0 use apps/v1beta2

kind: Deployment

metadata:

name: wordpress-mysql

labels:

app: wordpress

spec:

selector:

matchLabels:

app: wordpress

tier: mysql

strategy: # update strategy

type: Recreate # Recreate deletes all old Pods before creating new ones

template: # Pod template

metadata:

labels:

app: wordpress

tier: mysql

spec:

containers:

- image: 10.18.4.10/library/mysql:5.6

name: mysql

env:

- name: MYSQL_ROOT_PASSWORD

valueFrom:

secretKeyRef:

name: mysql-pass

key: password

ports:

- containerPort: 3306

name: mysql

volumeMounts:

- name: mysql-persistent-storage

mountPath: /var/lib/mysql

volumes:

- name: mysql-persistent-storage

persistentVolumeClaim:

claimName: mysql-pv-claim

4、Secret

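The Secret object type stores sensitive information such as passwords, OAuth tokens, and SSH keys. Putting this information in a Secret is safer and more flexible than putting it in a Pod definition or a container image.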

kubectl create secret docker-registry leifengyang-docker \

--docker-username=leifengyang \

--docker-password=Lfy123456 \

--docker-email=534096094@qq.com

## Command format

kubectl create secret docker-registry regcred \

--docker-server=<your-registry-server> \

--docker-username=<your-username> \

--docker-password=<your-password> \

--docker-email=<your-email>

apiVersion: v1

kind: Pod

metadata:

name: private-nginx

spec:

containers:

- name: private-nginx

image: leifengyang/guignginx:v1.0

imagePullSecrets:

- name: leifengyang-docker

Writing the YAML

echo -n "123456" | base64

secret.yaml

apiVersion: v1

kind: Secret

metadata:

name: mysql-passwd

type: Opaque

data:

passwd: MTIzNDU2
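The value under data is the base64 form of the plain text, as produced by the echo command above. A minimal Python sketch of the same encoding and its reverse follows; the helper names are hypothetical. Base64 is an encoding, not encryption: anyone who can read the Secret can decode it.

```python
import base64


def encode_secret_value(plain):
    """Encode a plain-text value the way the data field of a Secret expects it."""
    return base64.b64encode(plain.encode("utf-8")).decode("ascii")


def decode_secret_value(encoded):
    """Recover the plain text from a base64-encoded Secret value."""
    return base64.b64decode(encoded).decode("utf-8")


if __name__ == "__main__":
    print(encode_secret_value("123456"))    # MTIzNDU2
    print(decode_secret_value("MTIzNDU2"))  # 123456
```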

downward API

Passes information about the Pod into the Pod itself

pod-downwardAPI.yaml

apiVersion: v1

kind: Pod

metadata:

name: nginx-downwardapi

spec:

containers:

- name: nginx

image: nginx

volumeMounts:

- mountPath: /etc/podinfo

name: podinfo

volumes:

- name: podinfo

downwardAPI:

items:

- path: "labels"

fieldRef:

fieldPath: metadata.labels

- path: "name"

fieldRef:

fieldPath: metadata.name

- path: "namespace"

fieldRef:

fieldPath: metadata.namespace

- path: "memory-request"

resourceFieldRef:

containerName: nginx

resource: requests.memory

[root@master ~]# kubectl apply -f pod-downwardAPI.yaml

[root@master ~]# kubectl exec -it nginx-downwardapi -- bash

root@nginx-downwardapi:/# cd /etc/podinfo/

root@nginx-downwardapi:/etc/podinfo# ls

labels memory-request name namespace

root@nginx-downwardapi:/etc/podinfo# cat labels

security.istio.io/tlsMode="istio"

service.istio.io/canonical-name="nginx-downwardapi"
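An application inside the container can read these files like any other file. The Python sketch below parses the key="value" lines shown in the labels file into a dictionary. It is a simplified illustration: `parse_labels_file` is a hypothetical helper, and escape sequences inside values are not handled.

```python
def parse_labels_file(text):
    """Parse lines of the form key="value" into a dict."""
    labels = {}
    for line in text.splitlines():
        line = line.strip()
        if not line:
            continue
        key, _, value = line.partition("=")
        labels[key] = value.strip('"')
    return labels


if __name__ == "__main__":
    # Contents of /etc/podinfo/labels as shown in the session above.
    sample = (
        'security.istio.io/tlsMode="istio"\n'
        'service.istio.io/canonical-name="nginx-downwardapi"\n'
    )
    print(parse_labels_file(sample))
```

Inside the Pod the same function could be fed the result of reading /etc/podinfo/labels.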